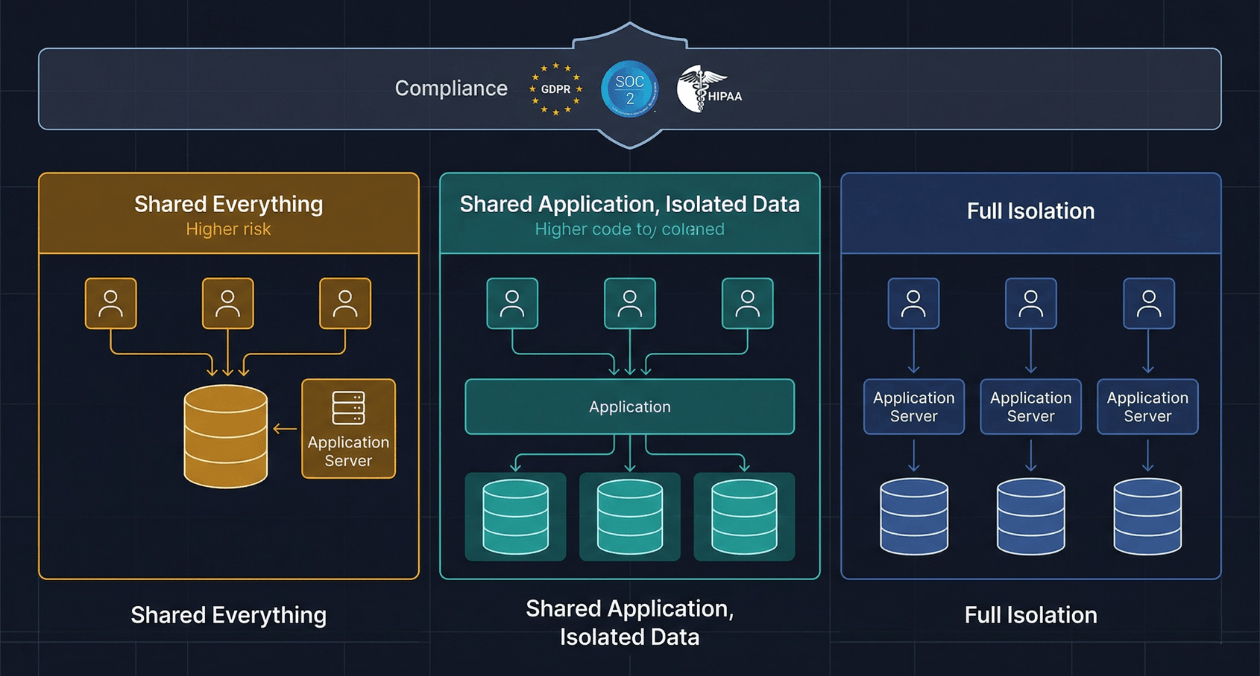

Every SaaS product eventually faces the same architectural inflection point. The first version was built for a handful of customers. Data lived in a shared database, the application ran as a single instance, and the team knew which customer owned which row because they could remember it. Then the customer count doubled, then tripled, and […]

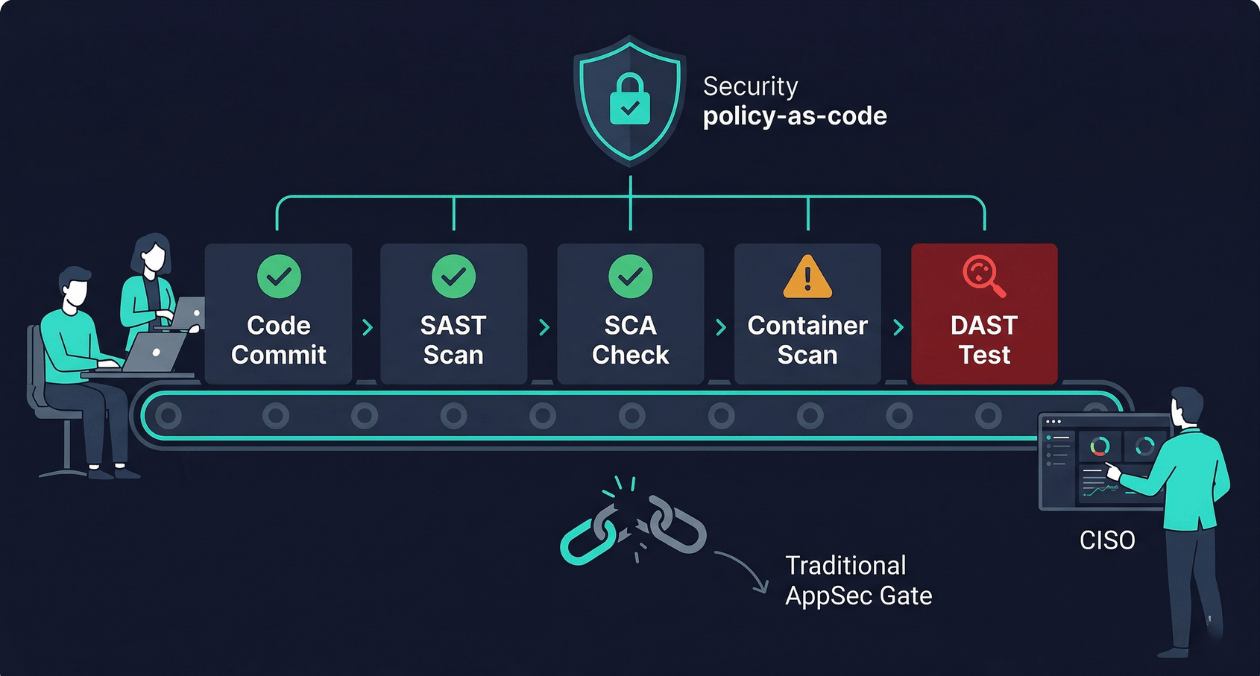

There is a particular kind of friction that security teams and engineering teams share without ever quite resolving. Engineering wants to ship fast. Security wants to ship safely. When security operates as a gate at the end of the release process, it becomes a bottleneck: a queue of features waiting for a penetration tester’s review, […]

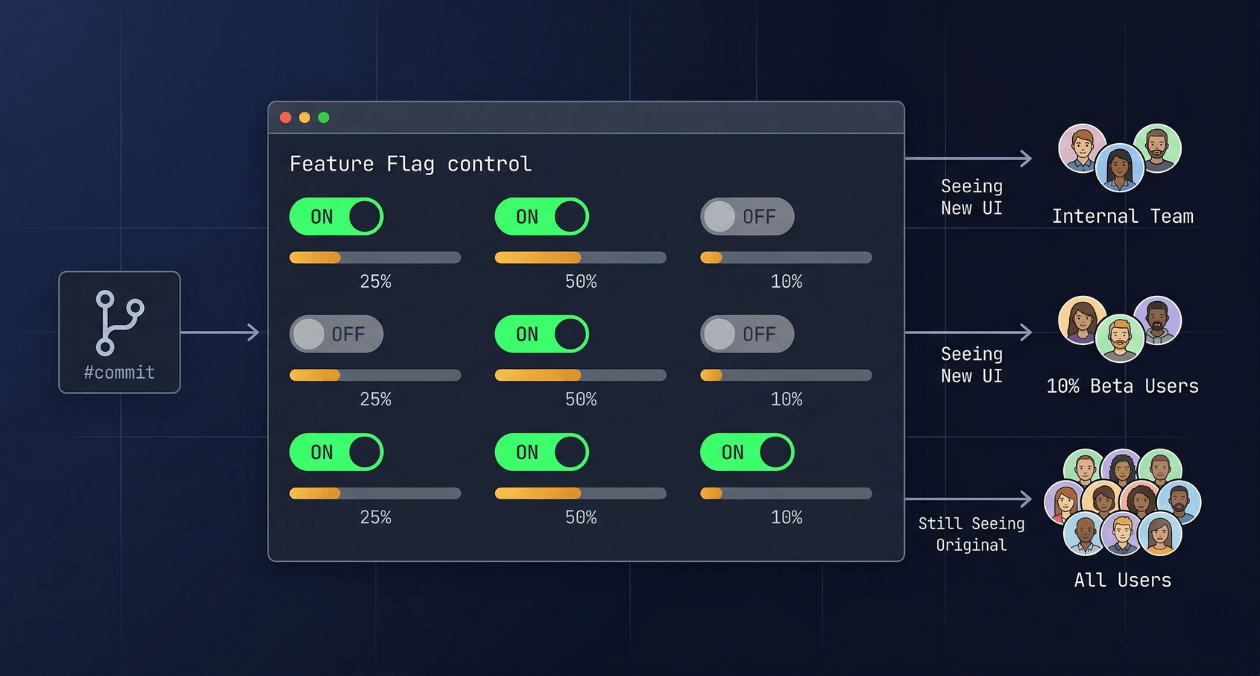

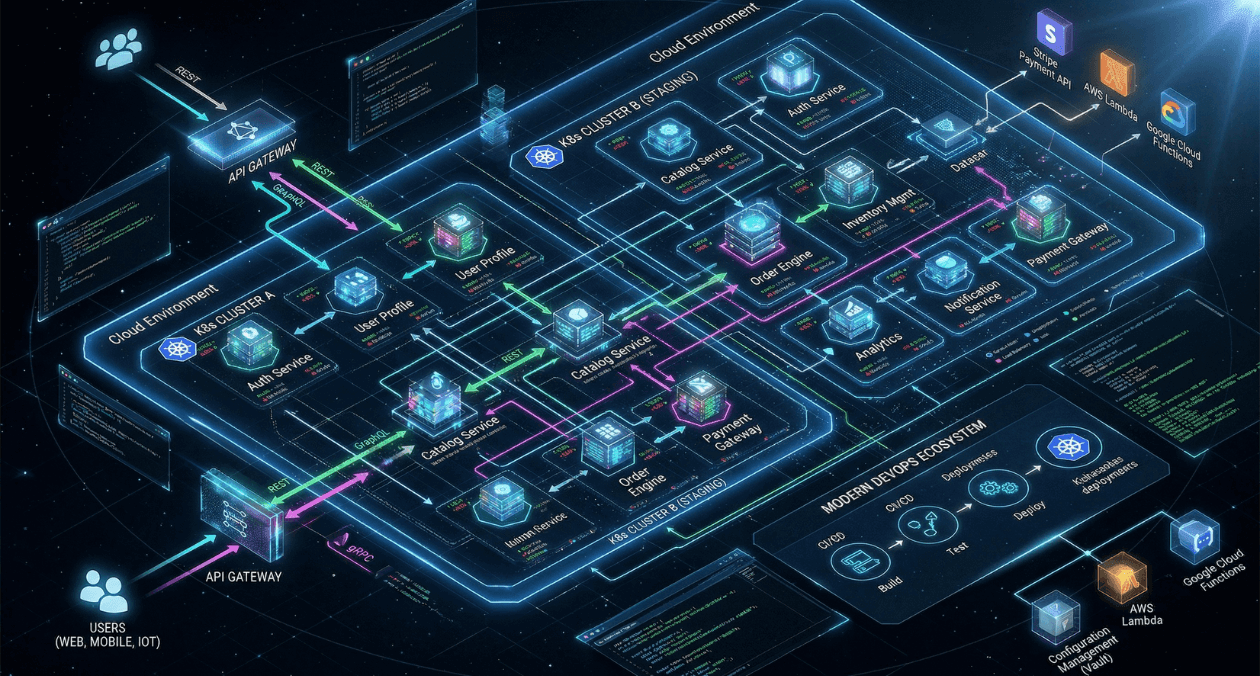

The deploy button used to mean something definitive. You shipped code, users got the new version, and if something broke you scrambled to roll back. That model works fine when a team ships once a month. It becomes increasingly untenable when you are deploying multiple times a day across a distributed system where a rollback […]

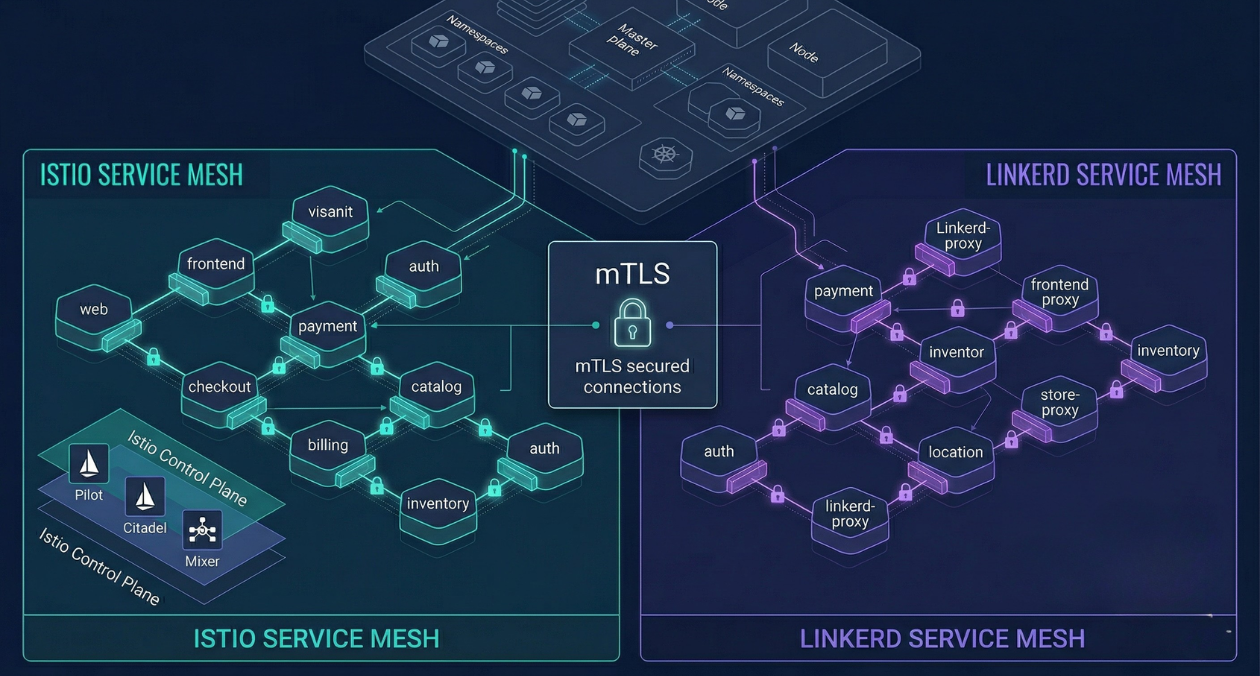

There is a specific moment in the growth of a microservices platform when the operational questions start arriving faster than the answers. How do you know which service is responsible for the latency spike you saw at 2 a.m.? How do you enforce that service A never talks directly to service B without going through […]

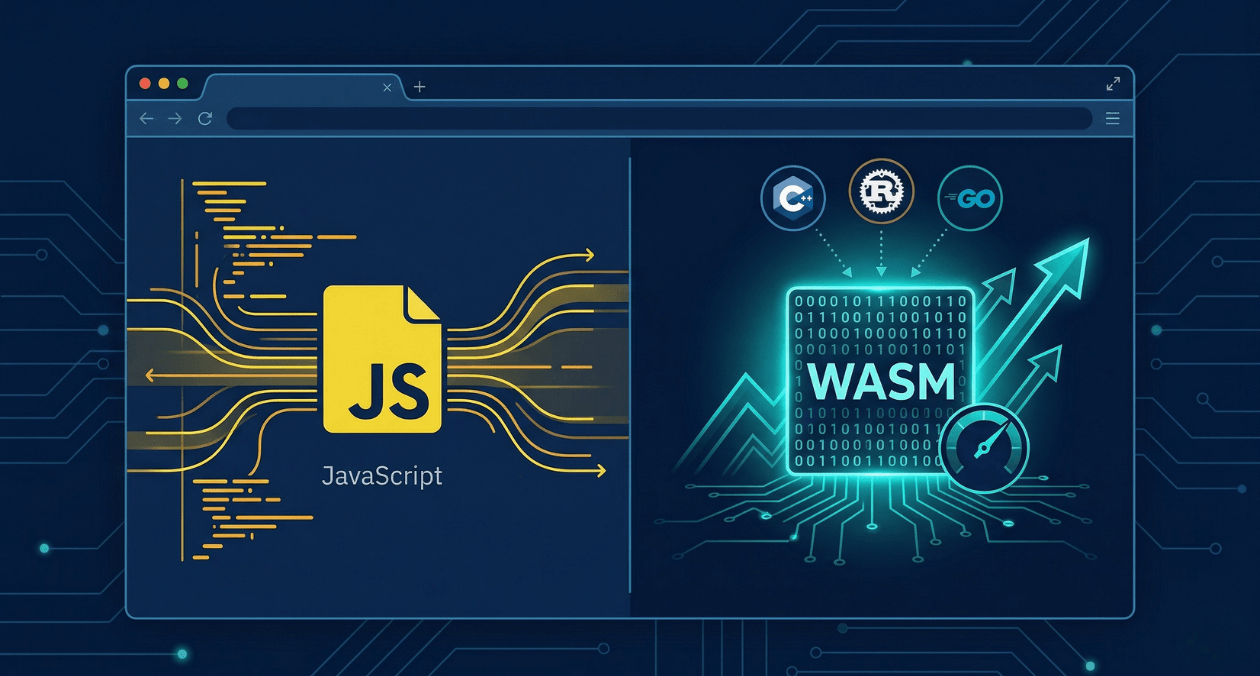

WebAssembly has been in the conversation for nearly a decade, but 2026 is the year more engineering teams are moving it from experimental to production-grade. It is no longer just a curiosity for game developers running Unreal Engine in a browser. Platform teams are using it to run compute-intensive workloads at near-native speed on the […]

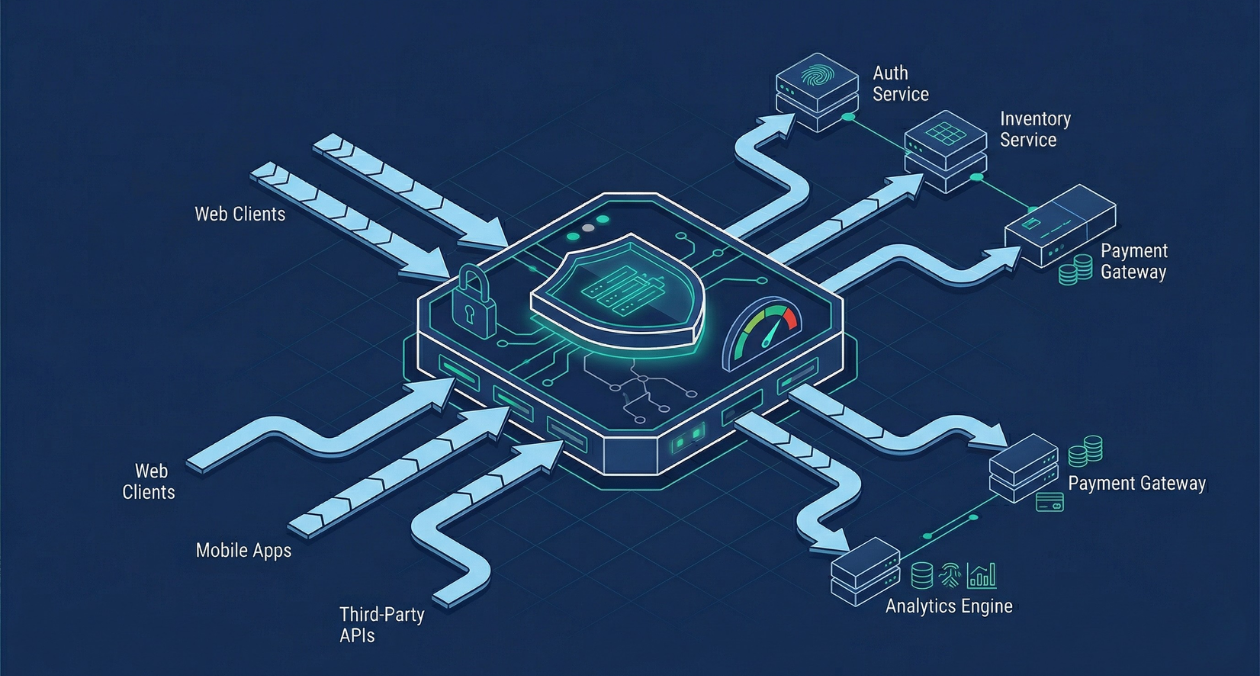

An API gateway is where the theoretical neatness of a microservices architecture meets the reality of production traffic. It is the single entry point through which every client request passes before reaching a backend service, which means it carries a significant amount of responsibility: authentication, authorization, rate limiting, load balancing, request routing, logging, and more. […]

Choosing an API style is one of those decisions that compounds over time. Pick the wrong one and you end up fighting the framework every time the system grows. Pick the right one and the architecture scales cleanly as requirements change. In 2026, most microservices teams are working with at least two of these three: […]

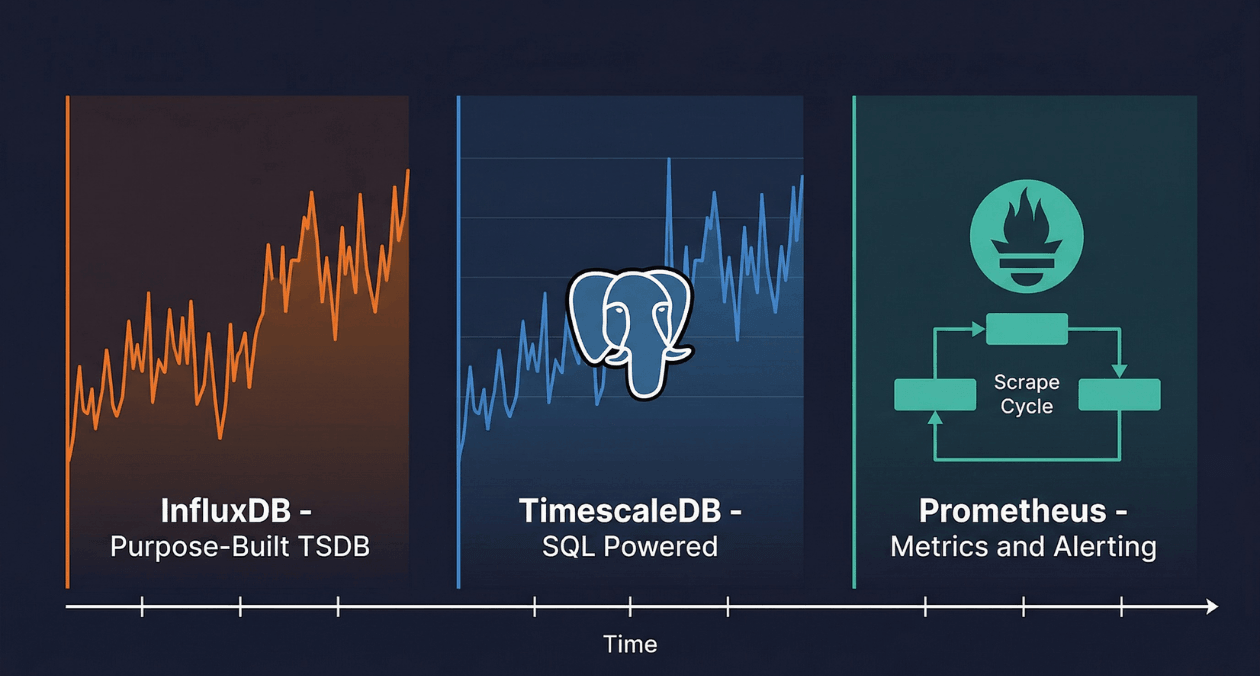

A temperature sensor on a factory floor records a reading every five seconds. A Kubernetes cluster emits thousands of metrics per minute from every pod, node, and container. A financial trading platform logs every price tick across hundreds of instruments simultaneously. Each of these workloads shares a common structure: a continuous stream of timestamped numerical […]

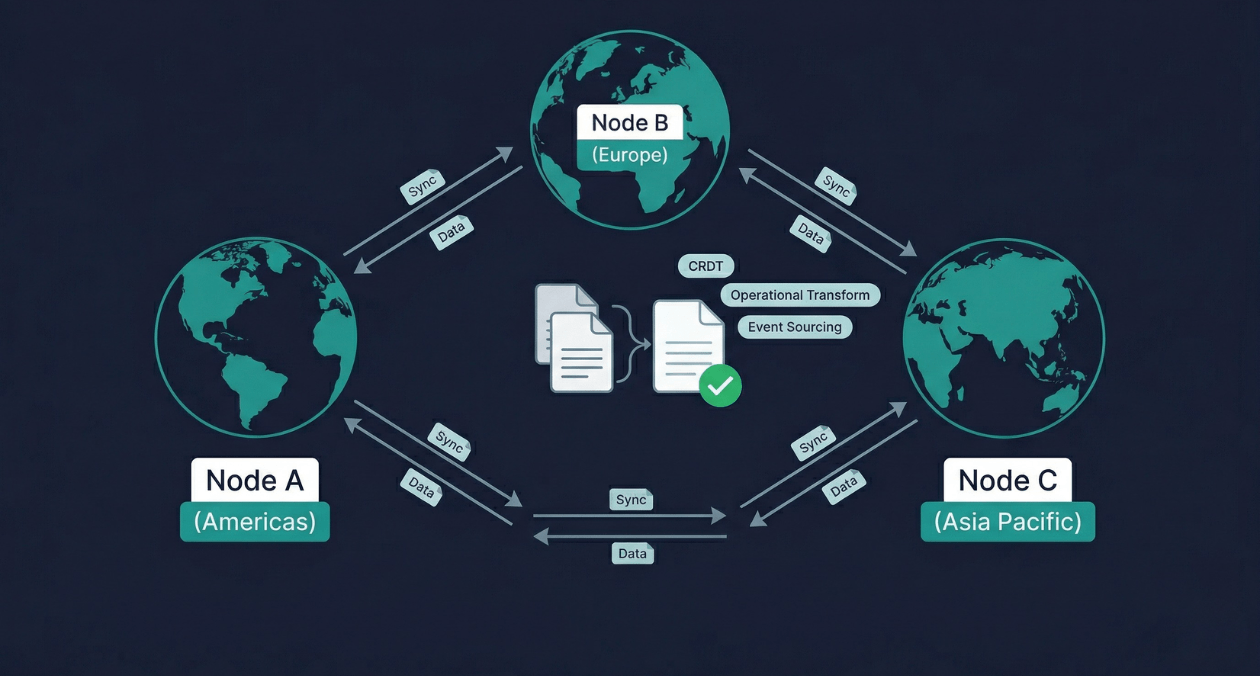

Two users open the same document from different continents. One adds a sentence at the top. The other deletes a paragraph in the middle. Both are offline for thirty seconds. When their connections restore, the application must merge both changes into a single coherent document without losing either user’s work and without asking either of […]

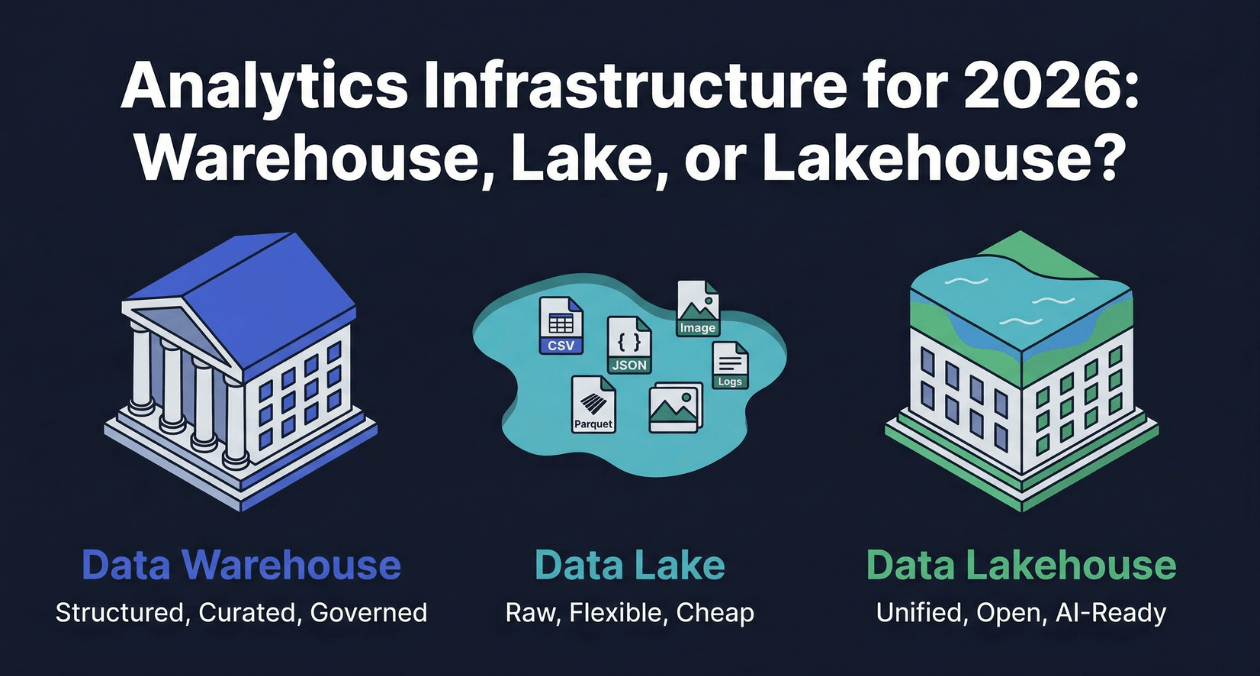

Every organization that generates data eventually faces a version of the same question: where should that data live so that the people and systems that need it can access it reliably, quickly, and without paying engineers to move it around constantly? The answer has changed significantly over the past fifteen years, and in 2026 it […]
