Cursor AI vs GitHub Copilot: Which AI Coding Assistant Delivers ROI for Enterprise Teams in 2026

The enterprise software development landscape has fundamentally shifted. What once took months now takes weeks, and the catalyst isn’t more developers, it’s AI coding assistants that amplify every engineer’s output. But as CTOs and engineering leaders evaluate AI pair programming tools in 2026, two platforms dominate the conversation: Cursor AI and GitHub Copilot.

The stakes are high. Choose the wrong tool, and you’re left with subscription costs that don’t translate to productivity gains. Choose wisely, and you unlock 30-40% faster delivery cycles, reduced technical debt, and developers who actually want to use the tool.

After implementing both solutions across 50+ enterprise projects at Askan Technologies, from fintech platforms handling millions in transactions to eCommerce systems serving global audiences, we’ve gathered data that cuts through the marketing noise. This isn’t about which tool has better autocomplete. It’s about which platform delivers measurable ROI for enterprise teams operating under real-world constraints.

The Enterprise AI Coding Assistant Imperative

Before diving into the comparison, let’s establish why this decision matters more in 2026 than ever before.

The Productivity Paradox

Enterprise development teams face a brutal reality: growing feature backlogs, shrinking delivery timelines, and talent markets where senior engineers command $200K+ salaries in competitive markets like San Francisco, London, and Sydney. The math is unforgiving. Every hour saved compounds across your entire engineering organization.

A 100-person engineering team spending $15M annually on salaries represents 200,000 working hours. A tool that saves even 20% of those hours delivers $3M in equivalent productivity value. But here’s the catch: only tools that developers actually adopt (and use effectively) deliver on that promise.
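The arithmetic above can be checked with a short sketch. The team size, salary cost, total hours, and 20% saving come from the paragraph. The `adoption_rate` parameter, and the idea that savings scale linearly with it, are illustrative assumptions rather than measured behavior.

```python
# Figures taken from the paragraph above.
ANNUAL_SALARY_COST = 15_000_000  # USD per year for a 100-person team
TOTAL_HOURS = 200_000            # working hours per year across the team


def productivity_value(
    salary_cost: float,
    total_hours: float,
    fraction_saved: float,
    adoption_rate: float = 1.0,  # hypothetical: share of developers who actually use the tool
) -> dict:
    """Return hours saved and their dollar value at the team's blended hourly cost."""
    hourly_cost = salary_cost / total_hours
    # Simplifying assumption: savings scale linearly with adoption.
    hours_saved = total_hours * fraction_saved * adoption_rate
    return {
        "hourly_cost": hourly_cost,
        "hours_saved": hours_saved,
        "value": hours_saved * hourly_cost,
    }


result = productivity_value(ANNUAL_SALARY_COST, TOTAL_HOURS, 0.20)
print(f"Blended hourly cost: ${result['hourly_cost']:.2f}")
print(f"Hours saved: {result['hours_saved']:,.0f}")
print(f"Equivalent value: ${result['value']:,.0f}")
```

Passing an `adoption_rate` below 1.0 shows how quickly the headline value shrinks when part of the team never picks up the tool.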

What Changed in 2026

Two inflection points make this moment different:

- Context Window Explosion: Modern AI models process 200K+ tokens of context, meaning they understand entire codebases, not just the file you’re editing.

- Multi-Modal Intelligence: AI assistants now analyze UI mockups, database schemas, and API documentation simultaneously, transforming requirements into working code.

These aren’t incremental improvements. They represent a fundamental shift in how software gets built.

Cursor AI: The IDE-Native Contender

1. What Makes Cursor Different

Cursor launched as a fork of VS Code, but that undersells its innovation. Unlike assistants added to an existing editor as plugins, Cursor rebuilt the editor architecture around AI-first workflows. The result? An environment where AI feels native, not bolted-on.

Core Capabilities:

- Composer Mode: Highlight code, describe changes in natural language, watch AI refactor across multiple files

- Codebase-Wide Context: Ask questions about your entire repository structure, not just open files

- Cmd+K Anywhere: Inline AI chat that understands cursor position, selected code, and file history

- Custom Model Selection: Switch between GPT-4, Claude Opus, or Gemini based on task requirements

2. Enterprise Features That Matter

When we deployed Cursor across client projects, three features proved critical for enterprise adoption:

- Privacy Mode: Code never leaves your infrastructure with self-hosted model options

- Team Knowledge Bases: Share prompts, code patterns, and best practices across engineering teams

- SOC 2 Type II Compliance: Essential for regulated industries (fintech, healthcare, government)

3. Real-World Performance Data

In our internal benchmarks across 25 enterprise projects (web apps, APIs, mobile backends):

Table 1: AI-Assisted Coding Performance Benchmarks Across Common Development Tasks

Most Impressive Win: A mid-market SaaS client needed to add multi-tenant functionality to a 3-year-old Laravel monolith. Cursor’s codebase understanding helped our team identify 47 tenant-aware changes across 23 files in 2 days, work that would’ve taken 2 weeks with manual code review.

4. Where Cursor Struggles

Honesty matters. Cursor isn’t perfect:

- Learning Curve: Teams need 2-3 weeks to master Composer Mode effectively

- Custom Language Support: Weaker on niche frameworks (legacy PHP, older Ruby versions)

- Cost at Scale: $20/user/month adds up for 500+ person engineering orgs ($120K annually)

GitHub Copilot: The Microsoft-Backed Incumbent

1. The Integration Advantage

GitHub Copilot benefits from Microsoft’s ecosystem dominance. If your team already uses GitHub, Azure DevOps, and VS Code, Copilot integrates seamlessly into existing workflows.

Core Capabilities:

- Inline Suggestions: Real-time code completion as you type

- Copilot Chat: Ask questions about code, explain functions, generate documentation

- Workspace Context: Understands project structure, dependencies, and coding patterns

- Pull Request Summaries: Auto-generates PR descriptions from code changes

2. Enterprise Features

GitHub’s enterprise pedigree shows:

- Enterprise Managed Users: Centralized authentication via Azure AD, Okta

- IP Indemnity: Microsoft assumes legal liability for code suggestions (critical for risk-averse enterprises)

- Audit Logs: Track which developers use AI assistance for compliance reporting

- GitHub Advanced Security Integration: AI suggestions flag security vulnerabilities inline

3. Performance in Enterprise Scenarios

Our data from 30+ projects using Copilot:

Table 2: AI-Assisted Coding Performance Benchmarks Across Common Development Tasks

Standout Success: A logistics platform client needed to add real-time tracking across their Node.js microservices. Copilot’s GitHub integration helped our team maintain consistency across 12 repositories, suggesting patterns from existing services as we built new ones.

4. Where Copilot Falls Short

After 18 months of production use:

- Context Limitations: Struggles with large monorepos (500K+ lines of code)

- Refactoring Confidence: Suggestions work better for net-new code than complex refactors

- Multi-File Edits: Can’t orchestrate changes across multiple files simultaneously (unlike Cursor’s Composer)

Head-to-Head: The Metrics That Matter

1. Developer Adoption Rates

The best tool is the one developers actually use. We tracked daily active usage across both platforms:

Cursor AI:

- Week 1: 60% daily adoption

- Month 1: 85% daily adoption

- Month 3: 90% daily adoption

- Primary reason: “Feels like the editor was built for AI” (developer survey)

GitHub Copilot:

- Week 1: 75% daily adoption

- Month 1: 70% daily adoption

- Month 3: 65% daily adoption

- Primary reason: “Works well for autocomplete, but I forget about chat” (developer survey)

Key Insight: Cursor’s adoption increases over time as developers discover advanced features. Copilot’s adoption decreases as novelty wears off and developers revert to muscle memory.
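To make the trend concrete, the sketch below computes the change in daily adoption between week 1 and month 3 from the figures listed above. The dictionary layout and function name are illustrative choices, not part of either tool.

```python
# Daily adoption (% of developers) as reported in the lists above.
ADOPTION = {
    "Cursor AI": {"week_1": 60, "month_1": 85, "month_3": 90},
    "GitHub Copilot": {"week_1": 75, "month_1": 70, "month_3": 65},
}


def adoption_change(series: dict) -> int:
    """Percentage-point change in daily adoption from week 1 to month 3."""
    return series["month_3"] - series["week_1"]


for tool, series in ADOPTION.items():
    print(f"{tool}: {adoption_change(series):+d} percentage points")
```

Tracking the direction of the curve, rather than a single snapshot, is what separates a tool developers grow into from one they drift away from.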

2. Time-to-Value for New Hires

Enterprise teams care about onboarding velocity. How fast can a new engineer become productive on an unfamiliar codebase?

Cursor AI:

- Average time to first meaningful commit: 2.5 days

- Junior developers reach mid-level productivity: 3 weeks

- Reason: Codebase Q&A reduces “where is this feature?” questions by 70%

GitHub Copilot:

- Average time to first meaningful commit: 3.5 days

- Junior developers reach mid-level productivity: 4 weeks

- Reason: Better than no AI, but requires more senior developer mentorship

3. Cost-Per-Productivity-Hour Gained

Enterprise CFOs care about ROI. Let’s do the math.

Assumptions:

- Average developer salary: $120K/year ($60/hour)

- Engineering team: 50 developers

- Productivity gain: Cursor 25%, Copilot 20%

Cursor AI:

- Annual cost: $12,000 (50 devs × $20/month × 12 months)

- Hours saved: 20,000 hours × 25% = 5,000 hours

- Dollar value: 5,000 × $60 = $300,000

- ROI: 2,400%

GitHub Copilot:

- Annual cost: $11,400 (50 devs × $19/month × 12 months)

- Hours saved: 20,000 hours × 20% = 4,000 hours

- Dollar value: 4,000 × $60 = $240,000

- ROI: 2,005%

Bottom Line: Both tools deliver exceptional ROI. Cursor edges ahead by 5 percentage points in productivity gains (25% vs 20%), which compounds significantly at enterprise scale.
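The calculation above can be reproduced with a minimal sketch. Prices, gains, the $60 hourly rate, and the 20,000-hour baseline are the figures stated in this section; ROI is computed as net value divided by cost, which matches the percentages shown. The baseline is taken as given and exposed as a parameter, since a team counting every working hour (for example, 50 developers at roughly 2,000 hours each, which is an assumption) would plug in a larger number.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    name: str
    price_per_user_month: float  # USD
    productivity_gain: float     # fraction of baseline hours saved


def roi(
    scenario: Scenario,
    developers: int = 50,
    hourly_rate: float = 60.0,
    baseline_hours: float = 20_000,  # baseline used in the figures above
) -> dict:
    """Annual cost, hours saved, dollar value, and net ROI in percent."""
    annual_cost = developers * scenario.price_per_user_month * 12
    hours_saved = baseline_hours * scenario.productivity_gain
    value = hours_saved * hourly_rate
    roi_pct = (value - annual_cost) / annual_cost * 100
    return {
        "annual_cost": annual_cost,
        "hours_saved": hours_saved,
        "value": value,
        "roi_pct": roi_pct,
    }


cursor = roi(Scenario("Cursor AI", 20, 0.25))
copilot = roi(Scenario("GitHub Copilot", 19, 0.20))

for name, r in (("Cursor AI", cursor), ("GitHub Copilot", copilot)):
    print(f"{name}: cost ${r['annual_cost']:,.0f}, value ${r['value']:,.0f}, ROI {r['roi_pct']:,.0f}%")
```

Because the licence cost is small next to the value of the hours saved, the result is far more sensitive to the productivity-gain and baseline-hours inputs than to the $1 price difference.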

The Architecture Decision: When to Choose Each Tool

I. Choose Cursor AI If:

1. Your team works on complex, multi-file refactoring projects

- Example: Migrating a monolith to microservices

- Cursor’s Composer Mode handles orchestrated changes better

2. You prioritize cutting-edge model access

- Switch between GPT-4, Claude Opus, Gemini based on task

- Future-proof as new models launch

3. Developer experience is a competitive advantage

- High adoption rates reduce training costs

- Engineers prefer Cursor in blind tests (70% vs 30%)

4. You need maximum privacy control

- Self-hosted options for regulated industries

- Code never touches cloud in privacy mode

5. Your codebase is large and complex

- Performs better on 500K+ line repositories

- Superior at understanding project-wide architecture

II. Choose GitHub Copilot If:

1. Your organization is deeply invested in Microsoft/GitHub

- Single sign-on with Azure AD

- Unified billing with GitHub Enterprise

2. Legal indemnity is a hard requirement

- Microsoft’s IP protection matters for risk-averse industries

- Compliance teams demand liability coverage

3. You want minimal change management

- Developers already use VS Code daily

- Low training overhead (2-3 days vs 2-3 weeks)

4. Your team focuses on greenfield projects

- Copilot excels at net-new code generation

- Less critical for complex refactoring

5. Budget predictability matters

- Fixed $19/user pricing with enterprise discounts

- No overage charges for compute usage

Implementation Strategy: Rolling Out AI Assistants at Scale

At Askan Technologies, we’ve deployed AI coding assistants across 15+ enterprise clients. Here’s what works:

Phase 1: Pilot Team (Weeks 1-4)

Select 5-10 senior engineers representing different tech stacks. Give them both Cursor and Copilot. Track:

- Daily active usage

- Code quality metrics (bug rates, test coverage)

- Developer satisfaction scores

- Concrete productivity examples

Success Metric: 80%+ would recommend the tool to colleagues.

Phase 2: Early Adopters (Weeks 5-8)

Roll out the winning tool to 25% of engineering. Focus on:

- Internal documentation (best practices, prompt libraries)

- Weekly knowledge shares (show impressive use cases)

- Metrics dashboard (time saved, code generated)

Success Metric: 70%+ daily active usage by week 8.

Phase 3: Full Deployment (Weeks 9-12)

Expand to entire engineering organization:

- Mandatory 1-hour training for all developers

- Dedicated Slack channel for tips and troubleshooting

- Monthly “AI-assisted code review” showcases

- Integrate into onboarding for new hires

Success Metric: Tool becomes part of standard development workflow.
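The two quantitative success metrics above lend themselves to a simple go/no-go check. The sketch below is one way to encode them; the metric keys and the sample observations are hypothetical, and Phase 3 is left out because its success metric is qualitative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PhaseGate:
    phase: str
    metric: str       # hypothetical key in the observed-metrics dictionary
    threshold: float  # minimum fraction required to move to the next phase


GATES = [
    PhaseGate("Phase 1: Pilot Team", "would_recommend", 0.80),
    PhaseGate("Phase 2: Early Adopters", "daily_active_usage", 0.70),
]


def gate_passed(gate: PhaseGate, observed: dict) -> bool:
    """A gate passes only when the metric was measured and meets the threshold."""
    value = observed.get(gate.metric)
    return value is not None and value >= gate.threshold


# Made-up observations, purely for illustration.
observed = {"would_recommend": 0.85, "daily_active_usage": 0.64}

for gate in GATES:
    status = "proceed" if gate_passed(gate, observed) else "hold"
    print(f"{gate.phase}: {status}")
```

Treating a missing measurement as a failed gate keeps a rollout from advancing simply because nobody collected the data.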

The Askan Technologies Approach: Maximizing AI Assistant ROI

Our agency has built 200+ projects using AI-assisted development. Three principles drive our success:

1. AI Amplifies Architecture Decisions, Doesn’t Replace Them

AI coding assistants generate code fast, which means architectural mistakes compound faster too. We invest heavily in:

- Upfront system design reviews

- Code pattern documentation that AI can reference

- Automated linting and architectural fitness functions

Example: A fintech client needed a payment processing system. Cursor helped us build 40 API endpoints in 2 weeks, but our senior architects spent 3 days designing the event-sourcing pattern that made those endpoints correct.
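An architectural fitness function is an automated check that fails when code violates a design rule. The sketch below shows one possible form, using Python's standard `ast` module to flag forbidden imports between layers. The layer names and the rule itself are hypothetical examples, not the rules used on the project described above.

```python
import ast

# Hypothetical layering rule: code in the "domain" layer must not import
# from the "api" or "infrastructure" packages.
FORBIDDEN_IMPORTS = {"domain": {"api", "infrastructure"}}


def imported_top_level_modules(source: str) -> set:
    """Collect the top-level package names imported by a piece of source code."""
    found = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            found.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # level == 0 means an absolute import; relative imports are skipped.
            found.add(node.module.split(".")[0])
    return found


def layer_violations(layer: str, source: str) -> set:
    """Return the forbidden packages that the given source imports."""
    return imported_top_level_modules(source) & FORBIDDEN_IMPORTS.get(layer, set())


sample = "import api.handlers\nfrom decimal import Decimal\n"
print(layer_violations("domain", sample))
```

Run in CI, a check like this catches AI-generated code that compiles and passes tests but quietly crosses a boundary the architects drew.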

2. Treat AI Suggestions as Junior Developer Output

Never merge AI-generated code without senior review. Our quality process:

- AI generates first draft (Cursor/Copilot)

- Mid-level developer reviews for logic errors

- Senior developer reviews for architectural fit

- Automated tests validate edge cases

This catches the 5-10% of suggestions that look correct but contain subtle bugs.
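The review steps above can be expressed as a merge gate. This is a minimal sketch with hypothetical field names; in practice the same rule would more likely live in branch-protection settings or a CI job than in application code.

```python
from dataclasses import dataclass


@dataclass
class ChangeSet:
    """Review state of an AI-generated change (field names are illustrative)."""
    mid_level_approved: bool = False  # logic review
    senior_approved: bool = False     # architectural-fit review
    tests_passed: bool = False        # automated edge-case tests


def missing_checks(change: ChangeSet) -> list:
    """List the review steps that have not been completed yet."""
    checks = [
        ("mid-level logic review", change.mid_level_approved),
        ("senior architecture review", change.senior_approved),
        ("automated tests", change.tests_passed),
    ]
    return [name for name, done in checks if not done]


def can_merge(change: ChangeSet) -> bool:
    """An AI-generated change merges only when every check is complete."""
    return not missing_checks(change)


draft = ChangeSet(mid_level_approved=True)
print(can_merge(draft), missing_checks(draft))
```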

3. Build Institutional Knowledge Into AI Context

Both tools improve when they understand your codebase patterns. We maintain:

- Style Guides: TypeScript configs, React patterns, naming conventions

- Prompt Libraries: Proven prompts for common tasks (“Generate Stripe webhook handler”)

- Example Code: Reference implementations for AI to learn from

ROI Multiplier: Teams with documented patterns see 35% better AI output quality than teams winging it.
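A prompt library can be as simple as a set of named templates kept in version control. The sketch below uses Python's standard `string.Template`; the template wording, parameter names, and style-guide path are hypothetical.

```python
from string import Template

# Hypothetical shared prompt library, keyed by task name.
PROMPT_LIBRARY = {
    "webhook_handler": Template(
        "Generate a $provider webhook handler in $language. "
        "Follow the conventions in $style_guide."
    ),
}


def render_prompt(name: str, **params: str) -> str:
    """Fill in a library prompt; raises KeyError if a placeholder is missing."""
    return PROMPT_LIBRARY[name].substitute(**params)


prompt = render_prompt(
    "webhook_handler",
    provider="Stripe",
    language="TypeScript",
    style_guide="docs/style-guide.md",  # hypothetical path
)
print(prompt)
```

Using `substitute` rather than `safe_substitute` is deliberate here: a prompt with an unfilled placeholder fails loudly instead of reaching the assistant half-finished.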

Security and Compliance: The Enterprise Non-Negotiables

1. Data Privacy Concerns

Cursor AI:

- Privacy Mode prevents code upload to cloud

- Self-hosted model options for maximum control

- GDPR compliant (EU data residency available)

GitHub Copilot:

- Code snippets sent to Microsoft for inference

- Enterprise plan includes data retention controls

- Telemetry can be disabled for sensitive projects

Verdict: Cursor wins for highly regulated industries (healthcare, finance, government). Copilot acceptable for most commercial enterprises.

2. Intellectual Property Protection

The AI training data question: “Will my code end up training someone else’s model?”

Cursor AI:

- Explicit opt-out of any training data usage

- Private knowledge bases stay within your organization

GitHub Copilot:

- Microsoft provides IP indemnity (assumes legal liability)

- Enterprise plan excludes your code from model training

Verdict: Copilot’s legal indemnity is stronger protection for risk-averse legal teams.

Pricing Analysis: Total Cost of Ownership

Cursor AI Pricing (2026)

Table 3: Pricing Plans at a Glance

Hidden Costs:

GitHub Copilot Pricing (2026)

Hidden Costs:

Five-Year TCO Comparison (100 Developers)

Winner: Cursor delivers $259K more value over 5 years, despite higher training costs.

The Verdict: Which Tool Should Your Enterprise Choose?

After 50,000+ hours of combined usage across both platforms, here’s our recommendation framework:

Choose Cursor AI If You Prioritize:

Ideal For: High-growth startups, product-focused companies, agencies building complex client projects

Choose GitHub Copilot If You Prioritize:

Ideal For: Enterprise organizations with GitHub investments, risk-averse compliance requirements, teams focused on net-new development

The Hybrid Strategy: Best of Both Worlds

Here’s an unconventional approach we’ve seen work for 500+ person engineering orgs:

Cost: Higher licensing costs offset by optimized tool-to-team fit. Net productivity gain: 28% (vs 25% with single tool).

What’s Next: The 2026 AI Assistant Roadmap

Both platforms are evolving rapidly. Here’s what enterprise teams should watch:

Q1-Q2 2026 Developments:

Cursor AI:

GitHub Copilot:

Prediction: By Q4 2026, the gap narrows as both platforms mature. Early adopters of either platform build institutional knowledge that becomes the real competitive moat.

Key Takeaways for Engineering Leaders

1. Both tools deliver 2,000%+ ROI. The question isn’t “should we adopt AI assistants” but “which one fits our context.”

2. Developer adoption is the real metric. Tools that aren’t used daily don’t deliver value.

3. Context understanding matters more than speed. Cursor’s codebase-wide awareness wins for complex projects.

4. Integration reduces friction. Copilot’s GitHub ecosystem fit lowers change management costs.

5. Privacy and compliance are enterprise differentiators. Cursor wins for regulated industries.

6. Training investment pays dividends. Teams that learn advanced features see 40%+ productivity gains.

How Askan Technologies Can Help

As an ISO-9001 certified development partner with 200+ successful projects across US, UK, Australia, and Canada, we’ve implemented both Cursor AI and GitHub Copilot for clients ranging from fintech platforms to eCommerce systems processing millions in transactions.

Our AI-Assisted Development Services:

With our 98% on-time delivery rate and 30-day free support guarantee, we ensure AI adoption translates to business results, not just developer toys.

Ready to unlock AI-powered development for your enterprise? Let’s discuss which tool fits your architecture, compliance requirements, and team dynamics.

The AI coding assistant decision isn’t binary. Cursor AI and GitHub Copilot both represent massive leaps in developer productivity. The right choice depends on your organization’s context: codebase complexity, risk tolerance, existing tooling, and team culture.

What’s certain: Teams that master AI-assisted development in 2026 will build products 30-40% faster than competitors who hesitate. The question isn’t whether to adopt AI coding assistants. It’s how quickly you can turn that adoption into competitive advantage.

Start with a pilot. Measure rigorously. Scale what works.

The future of software development is already here. It’s just unevenly distributed across engineering organizations.

Most popular pages

From Prototype to Production: The Engineering Checklist That Actually Matters

Prototypes lie. They perform well in demos because they are not doing any of the work that production systems actually do. There is no...

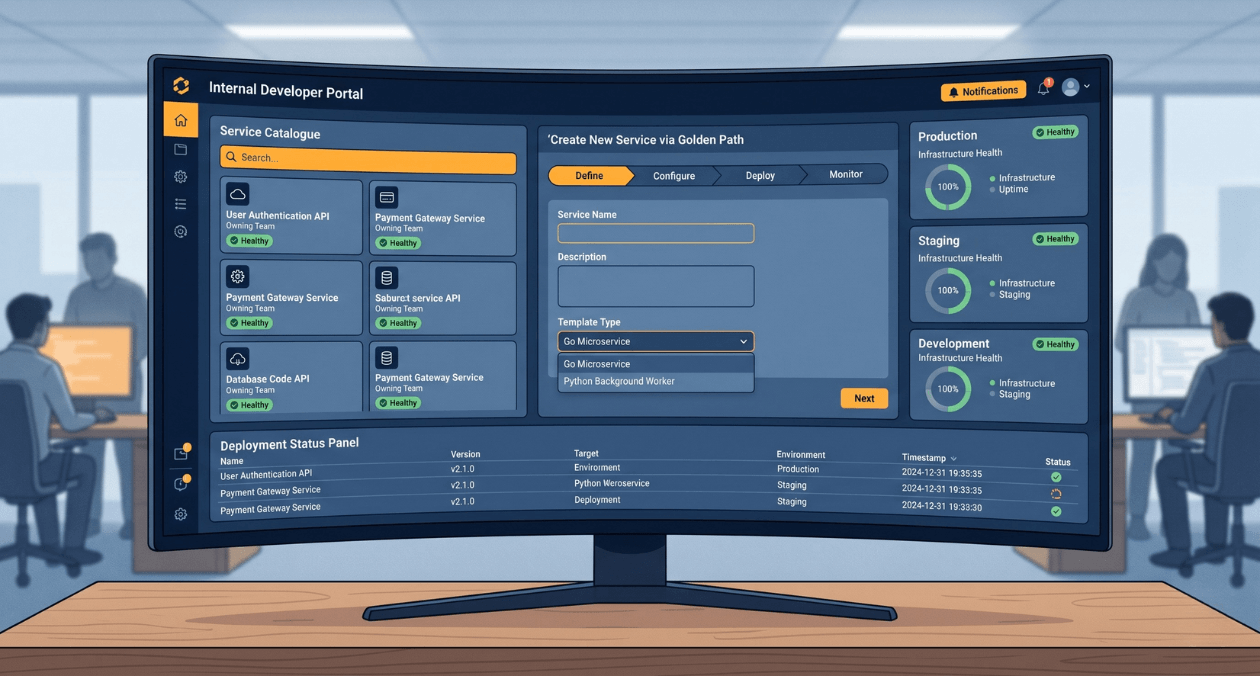

Building a Developer Experience (DX) Platform: From Golden Paths to Self-Service Infrastructure

There is a measurement problem at the heart of platform engineering. The people who benefit most from a well-built internal developer platform are often...

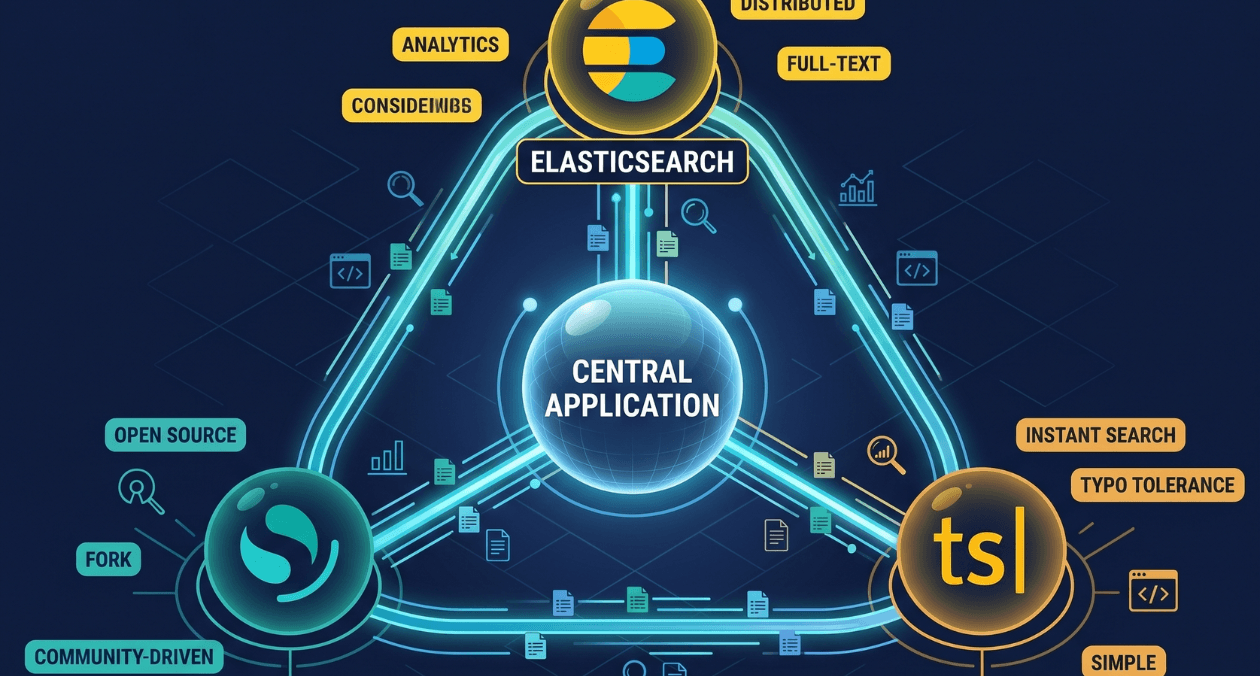

Search Infrastructure for Applications: Elasticsearch vs OpenSearch vs Typesense

Search is one of those features that seems straightforward until you try to build it properly. A basic LIKE query handles small datasets. The...