TABLE OF CONTENTS

API Gateway Patterns: Rate Limiting, Authentication, and Traffic Management at Scale

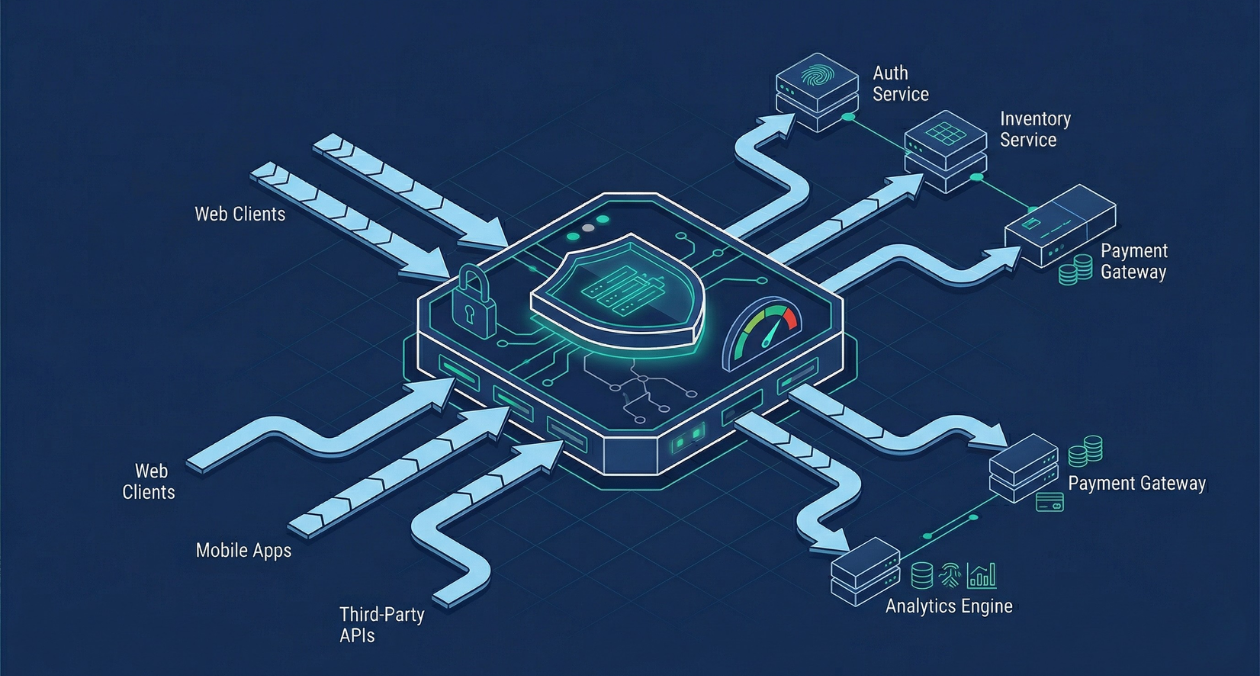

An API gateway is where the theoretical neatness of a microservices architecture meets the reality of production traffic. It is the single entry point through which every client request passes before reaching a backend service, which means it carries a significant amount of responsibility: authentication, authorization, rate limiting, load balancing, request routing, logging, and more.

Done well, the gateway becomes a force multiplier. It enforces policies consistently across every service without requiring each team to implement the same logic independently. Done poorly, it becomes a bottleneck that limits throughput and a single point of failure that takes down the entire system when it struggles.

This guide covers the patterns and implementation decisions that separate a well-designed API gateway from one that creates problems at scale. It is written for backend architects, DevOps engineers, and security engineers who are either building a gateway from scratch or evaluating how to improve an existing one.

What an API Gateway Actually Does

Before getting into patterns, it is worth being precise about scope. An API gateway handles concerns that are cross-cutting across services. These are things every service would need to implement on its own if the gateway did not handle them centrally.

The core responsibilities fall into three categories. Traffic management covers routing, load balancing, circuit breaking, and retries. Security covers authentication, authorization, SSL termination, and input validation. Observability covers request logging, distributed tracing injection, and metrics collection.

Some teams also use the gateway for request and response transformation, API versioning, and developer portal management. These are valid use cases, but they should be evaluated carefully. The more logic the gateway contains, the heavier the operational burden of maintaining it becomes.

A useful mental model: the gateway should handle concerns that are about the request itself, not concerns that are about the business logic of what the request is asking for. Business logic belongs in the services.

Rate Limiting: Patterns That Actually Hold Under Load

Why Rate Limiting Is Harder Than It Looks

The concept of rate limiting is simple. Restrict how many requests a client can make within a time window. The implementation at scale is not.

The first challenge is state. Rate limit counters need to be checked and incremented atomically on every request. In a single-instance deployment this is trivial. In a distributed gateway with multiple instances behind a load balancer, counters must be shared across instances, which means a centralized store like Redis becomes a dependency on the hot path.

The second challenge is granularity. Rate limits can be applied at multiple levels: per IP address, per API key, per user account, per endpoint, or per tier of service plan. A well-designed rate limiting system supports all of these simultaneously, because a single abusive user and a DDoS from thousands of IPs require different responses.

Token Bucket vs Sliding Window

Two algorithms dominate production rate limiting implementations.

The token bucket algorithm maintains a bucket of tokens for each client. Tokens are added at a fixed rate up to a maximum. Each request consumes one token. When the bucket is empty, requests are rejected. This allows short bursts above the average rate, which is the right behavior for most legitimate API consumers who occasionally batch requests.

The sliding window algorithm tracks all requests within a rolling time window. It prevents bursts more strictly than token bucket but is more expensive to compute because it requires storing individual request timestamps rather than a single counter. Redis sorted sets are a common implementation substrate for sliding windows.

For most public APIs, token bucket with a per-key and per-IP limit provides the right balance of burst tolerance and abuse prevention. Sliding window is better suited for billing-critical APIs where exact per-period consumption must be enforced.

Planning or Scaling Your API Gateway Infrastructure? Askan Technologies Delivers End-to-End Backend Architecture

Authentication and Authorization at the Gateway

SSL Termination

SSL termination at the gateway is standard practice. The gateway decrypts inbound HTTPS traffic and forwards requests to backend services over HTTP within the private network. This offloads cryptographic computation from individual services and centralizes certificate management.

For services that handle sensitive data and require end-to-end encryption even within the cluster, mutual TLS between the gateway and backend services is the correct approach. Service meshes like Istio handle mTLS transparently across services, but if you are running a gateway-only architecture without a service mesh, you need to manage certificates between the gateway and services explicitly.

JWT Validation

JSON Web Tokens are the standard authentication mechanism for API gateways in 2026. The gateway validates the JWT signature on every request using a public key from the identity provider. Valid tokens are forwarded to the backend with the decoded claims attached as request headers. Invalid tokens are rejected at the gateway before they reach any service.

This model means the backend services can trust the claims passed by the gateway without re-validating the token, which removes a redundant cryptographic operation on the hot path. The trade-off is that the gateway must have access to the public key and must handle key rotation without downtime.

Key rotation is handled cleanly by supporting multiple valid public keys simultaneously during the rotation window. Both the old and new key are accepted until all tokens signed by the old key have expired.

API Key Management

For machine-to-machine integrations and third-party access, API keys are simpler than JWTs and easier for external developers to use. The gateway stores a hash of each key (never the key itself) and validates inbound keys against the store.

API keys should be scoped. A key issued for read-only access to a specific resource set should not grant write access or access to other resources. Scope enforcement at the gateway prevents scope creep from becoming a security liability.

Authorization: What the Gateway Should and Should Not Handle

Authentication, confirming who the requester is, belongs at the gateway. Authorization, deciding what that requester is allowed to do, requires a more careful split.

Coarse-grained authorization, such as confirming that a given API key has access to a particular service or that a user’s token includes a required role, belongs at the gateway. Fine-grained authorization, such as determining whether a specific user can access a specific record, belongs in the service because it requires business context the gateway does not have.

Attempting to push fine-grained authorization logic into the gateway results in a gateway that becomes tightly coupled to business rules and difficult to maintain independently of the services it fronts.

| Mechanism | Best Used For | Gateway Responsibility |

|---|---|---|

| SSL/TLS termination | All external traffic | Decrypt and forward |

| JWT validation | User-facing APIs | Validate signature, forward claims |

| API key auth | Machine-to-machine, partners | Hash lookup, scope check |

| mTLS | Internal service auth | Certificate validation |

Traffic Management Patterns

Routing and Load Balancing

Request routing is the most fundamental gateway function. Incoming requests are matched against routing rules and forwarded to the appropriate backend service. Routing rules can be based on path prefix, host header, HTTP method, query parameters, or custom headers.

Load balancing distributes traffic across multiple instances of the same service. Round-robin is the default and handles homogeneous instances well. Weighted round-robin is useful during canary deployments when you want to send a percentage of traffic to a new version. Least-connections routing is better for services with variable response times where some requests are significantly more expensive than others.

Circuit Breaking

A circuit breaker prevents a failing backend service from being continuously hammered with requests it cannot handle. When the error rate for a service exceeds a threshold, the circuit opens and subsequent requests fail fast at the gateway instead of waiting for a timeout. After a configured interval, a test request is sent to check whether the service has recovered.

Circuit breakers are critical for preventing cascade failures in microservices architectures. When Service A depends on Service B, and Service B starts responding slowly, Service A’s thread pool fills with waiting requests and it too begins failing. The circuit breaker at the gateway stops this propagation before it reaches the client.

Kong, Nginx with Lua extensions, and Envoy all provide circuit breaking configuration. For teams running a managed gateway, AWS API Gateway supports circuit breaking through integration with CloudWatch alarms and Lambda authorizers.

Retries and Timeouts

Retry logic at the gateway should be applied selectively. Retrying GET requests on temporary failures is generally safe. Retrying POST or PUT requests risks duplicate operations unless the backend service implements idempotency keys.

Timeout configuration requires coordination with backend service SLAs. A gateway timeout that is shorter than the maximum processing time of a legitimate request will cause requests to fail that would have succeeded with more patience. A timeout that is too long holds connections open and reduces the gateway’s capacity to handle concurrent traffic. Start with p99 latency measurements from the backend service and set timeouts at two to three times that value.

Planning or Scaling Your API Gateway Infrastructure? Askan Technologies Delivers End-to-End Backend Architecture

Choosing a Gateway: Kong, Nginx, and Managed Options

The gateway tool choice shapes the operational model significantly. There is no universally correct answer, but there are clear patterns for different contexts.

Kong Gateway

Kong is built on top of Nginx and OpenResty and is the most widely deployed open-source API gateway in production microservices environments. Its plugin architecture allows rate limiting, authentication, logging, and transformation to be configured declaratively without writing custom code.

Kong’s declarative configuration via deck or the Kong Admin API integrates cleanly with GitOps workflows, which is important for teams that manage infrastructure as code. Kong also supports a Kubernetes-native deployment model through the Kong Ingress Controller, making it a natural fit for teams already running on Kubernetes.

The primary operational cost of Kong is the datastore dependency. Kong stores configuration in PostgreSQL or in a DB-less mode using declarative YAML. The DB-less mode reduces operational complexity but limits some dynamic configuration capabilities.

Nginx

Nginx is the foundation of many gateway implementations. Teams with Lua scripting experience can implement sophisticated routing, rate limiting, and authentication logic directly in Nginx configuration. For teams that need precise control over every aspect of gateway behavior and are willing to maintain that configuration, Nginx provides unmatched flexibility.

For most teams, however, the operational burden of a custom Nginx gateway exceeds the value. Kong or a managed gateway is a more maintainable starting point.

Managed Gateways

AWS API Gateway, Google Cloud Apigee, and Azure API Management each provide a fully managed gateway with built-in rate limiting, authentication, developer portal, and monitoring. The trade-off is reduced control and vendor lock-in. For teams that prioritize operational simplicity over fine-grained control, managed gateways significantly reduce the engineering effort required to maintain gateway infrastructure.

| Gateway Option | Best Fit |

|---|---|

| Kong (self-hosted) | Kubernetes-based systems, GitOps workflows |

| Nginx (custom) | Teams needing maximum configuration control |

| AWS API Gateway | AWS-native architectures, low ops overhead |

| Apigee | Enterprise API programs, developer portals |

Observability: What the Gateway Needs to Emit

A gateway that does not emit rich observability data is an opaque bottleneck. At minimum, every request passing through the gateway should produce a structured log entry and contribute to latency and error rate metrics per service and per endpoint.

- Structured request logs should include: timestamp, client identifier, target service, HTTP method, path, response status, response time, and bytes transferred

- Metrics should be exported to Prometheus or a compatible metrics store and should track request rate, error rate, and latency histograms per service and per route

- Distributed tracing headers (W3C TraceContext or B3) should be injected at the gateway for every request so that traces are complete from the client entry point through every downstream service

- Rate limit hit events should be logged separately with the client identifier and limit type so that abuse patterns can be analyzed without manually scanning access logs

Kong’s Prometheus plugin, Nginx’s built-in log format with Filebeat forwarding, and Envoy’s native stats emission all provide solid foundations for gateway observability. The important thing is consistency: every request goes through the gateway, so every request should be represented in your metrics and traces.

For teams building out their observability stack alongside their gateway, the monitoring architecture patterns covered in our piece on building observable systems with metrics, logs, and traces provide useful context for connecting gateway telemetry to a broader distributed tracing setup.

Scaling the Gateway Itself

The gateway sits on the critical path of every request in the system. Its availability and performance directly determine the availability and performance of everything behind it.

Horizontal scaling is the right model. Run multiple gateway instances behind a cloud load balancer. Because the gateway is designed to be stateless (all state lives in Redis for rate limiting or in the identity provider for authentication), adding instances is straightforward.

Rate limit state in Redis introduces a dependency. If Redis becomes unavailable, the gateway must decide whether to fail open (allow all requests) or fail closed (reject all requests). Failing open preserves availability but removes rate limiting protection. Failing closed is safe but causes a complete outage. Most teams configure a grace mode that allows requests through for a short period during Redis unavailability while alerting on the degradation.

Cache gateway configuration aggressively. Route definitions and API key lookups that require a datastore read on every request are a common performance bottleneck. In-memory caching of configuration with a TTL that is short enough to reflect changes within seconds eliminates this bottleneck without compromising consistency.

The Kong documentation at docs.konghq.com covers the full plugin configuration reference for rate limiting, authentication, and load balancing, and is the most reliable reference for teams implementing Kong-based gateways in production.

Putting It Together: A Deployment Checklist

Before pushing a gateway configuration to production, these are the checks that prevent the most common failure modes:

- Rate limits are configured at both per-IP and per-key granularity, with separate limits for authenticated and unauthenticated traffic

- Circuit breakers are enabled for all backend services with thresholds derived from measured error rates under normal load

- JWT public keys are loaded from a JWKS endpoint so that key rotation does not require a gateway restart

- All API keys are stored as hashed values and scope restrictions are enforced per key

- Timeouts are set based on measured backend p99 latency, not arbitrary defaults

- Structured logging and Prometheus metrics are confirmed to be emitting on a test request before traffic is routed

- A load test against the gateway has been run to confirm that the rate limiter and Redis dependency hold under expected peak traffic

- Failover behavior for Redis unavailability has been explicitly tested and the decision between fail-open and fail-closed has been documented

These steps are not exhaustive, but they cover the failure modes that cause the most production incidents in gateway deployments. A gateway that handles authentication, rate limiting, and traffic management correctly is largely invisible during normal operation, which is exactly what it should be.

Planning or Scaling Your API Gateway Infrastructure? Askan Technologies Delivers End-to-End Backend Architecture

Most popular pages

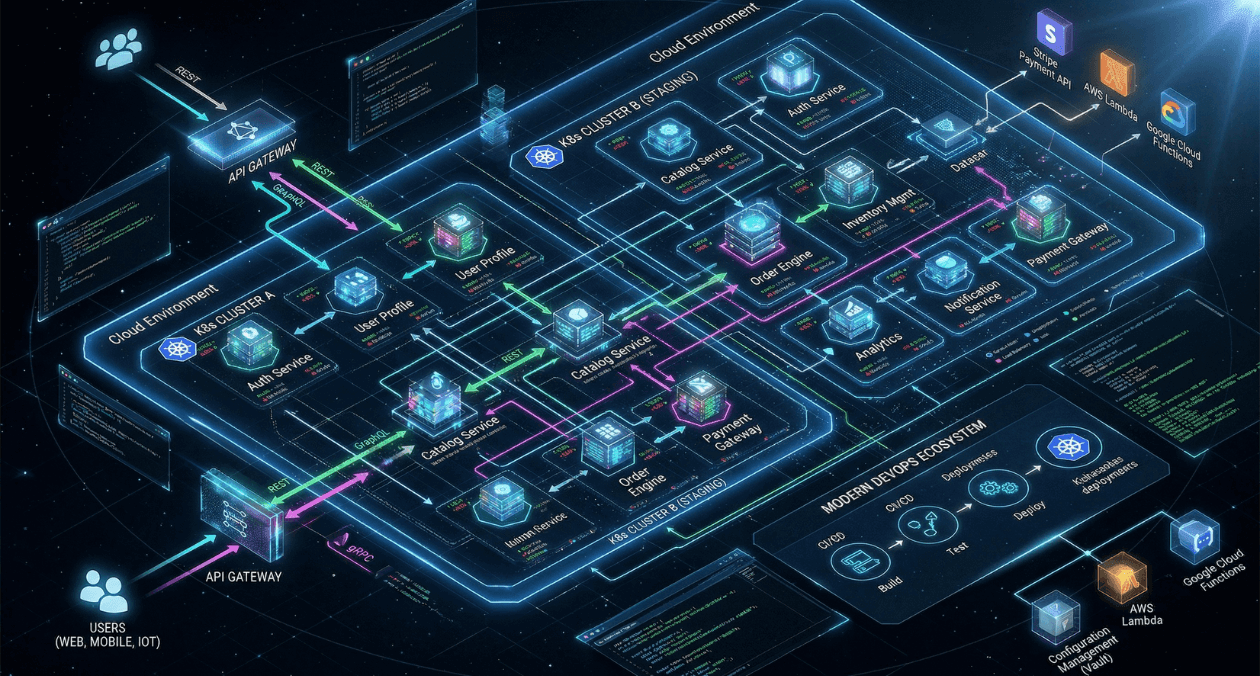

GraphQL vs REST vs gRPC: API Architecture Patterns for Microservices in 2026

Choosing an API style is one of those decisions that compounds over time. Pick the wrong one and you end up fighting the framework...

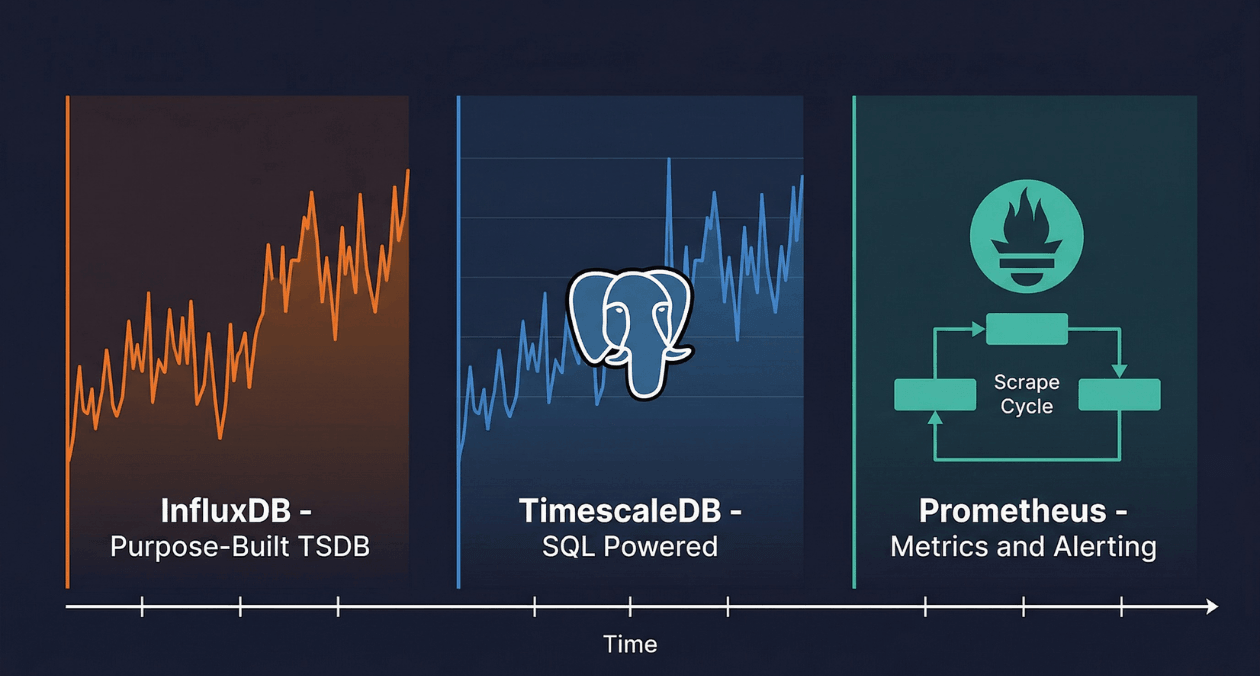

Time-Series Databases: InfluxDB vs TimescaleDB vs Prometheus for IoT and Monitoring

A temperature sensor on a factory floor records a reading every five seconds. A Kubernetes cluster emits thousands of metrics per minute from every...

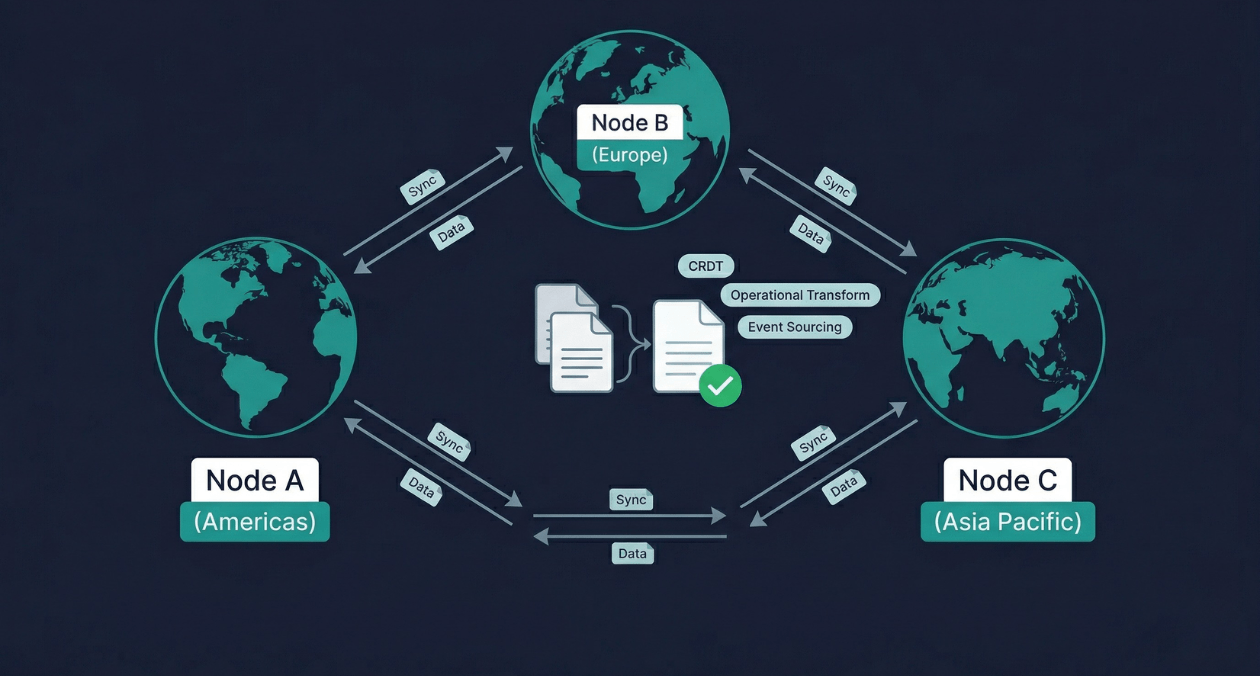

Real-Time Data Sync Across Distributed Systems: CRDT, Operational Transform, and Event Sourcing

Two users open the same document from different continents. One adds a sentence at the top. The other deletes a paragraph in the middle....