TABLE OF CONTENTS

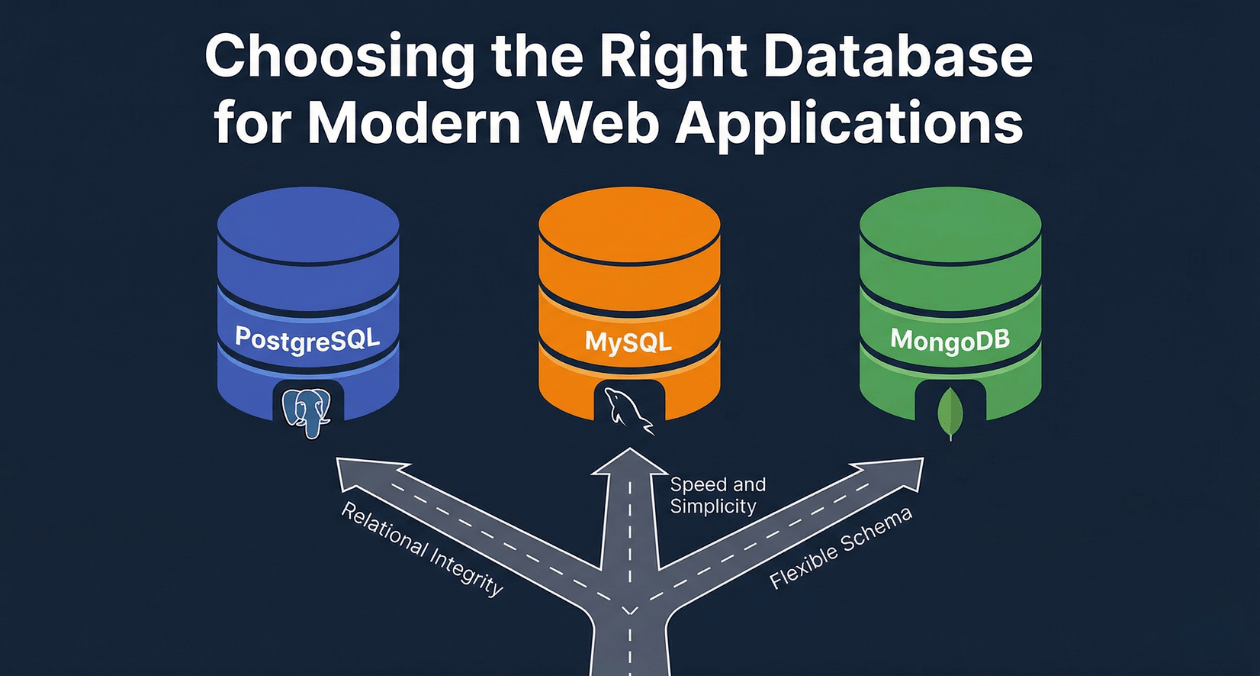

PostgreSQL vs MySQL vs MongoDB: Database Selection for Modern Web Applications

The database you choose for a web application is one of the most consequential architectural decisions your team will make. Unlike a framework or a deployment tool that can be swapped out incrementally over time, migrating a live production database is a high-risk, high-effort operation that most teams undertake only under real pain. Getting the selection right at the beginning of a project compresses years of future engineering effort.

The three databases that appear at the center of nearly every modern web application decision are PostgreSQL, MySQL, and MongoDB. Each has spent decades in production environments, accumulated rich ecosystems of tooling and expertise, and evolved features that blur the original lines between them. PostgreSQL now handles document-style JSON natively. MongoDB added multi-document ACID transactions. MySQL ships with a JSON column type and window functions.

Despite this convergence, the three databases still make fundamentally different trade-offs in their architecture, consistency guarantees, query capabilities, and operational characteristics. Understanding those trade-offs in depth, rather than relying on simplified rule-of-thumb comparisons, is what enables backend architects, database architects, and CTOs to make selections they will not regret when the application is under real production load.

This guide covers each database’s technical architecture, where each genuinely excels, where each shows its limitations, and the decision framework that experienced teams use to map application requirements to the right database choice.

The Foundational Distinction: Relational vs. Document Model

PostgreSQL and MySQL are relational databases. They organize data into tables with defined columns, enforce relationships through foreign keys, and express data retrieval through SQL, a declarative language that has been standardized across decades of database technology. The relational model is grounded in a mathematical framework developed by Edgar Codd in 1970, and its durability as the dominant data management paradigm reflects how well that framework maps to the structure of most business data.

MongoDB is a document database. It stores data as BSON documents (a binary representation of JSON) organized into collections rather than tables. Documents within the same collection can have different fields. There is no enforced schema at the database level by default, though MongoDB’s schema validation feature can enforce structure when required. Relationships between documents are expressed either through embedding related data within a document or through application-level references between documents.

This architectural distinction shapes almost everything else about how the two database categories behave: query expressiveness, consistency guarantees, scaling patterns, operational tooling, and the kind of application designs they reward.

PostgreSQL: The Full-Featured Relational Powerhouse

Architecture and Core Strengths

PostgreSQL, originally developed at UC Berkeley and released as open source in 1996, has evolved into the most feature-rich open-source relational database available. Its development philosophy prioritizes standards compliance, extensibility, and correctness over raw speed. That philosophy has produced a database capable of handling workloads that would require specialized commercial databases from other vendors.

At its core, PostgreSQL implements a process-per-connection model where each client connection spawns a backend process. Connection poolers like PgBouncer are standard in production deployments to manage the overhead of this model under high concurrency. Its storage engine uses multi-version concurrency control (MVCC) to handle concurrent reads and writes without locking, allowing read queries to proceed without blocking write operations.

The ACID compliance of PostgreSQL is comprehensive and reliable. Atomicity, consistency, isolation, and durability guarantees extend to the most complex multi-table transactions. Its default transaction isolation level is Read Committed, and it supports Serializable Snapshot Isolation for workloads that require the highest consistency guarantees, an isolation level that prevents all classes of serialization anomalies including phantom reads and write skew.

Advanced Features That Set PostgreSQL Apart

| Feature | Practical Application |

|---|---|

| JSONB column type | Store and index semi-structured JSON data alongside relational data in the same table |

| Full-text search (tsvector/tsquery) | Built-in search indexing without requiring Elasticsearch for moderate-scale search workloads |

| CTEs and window functions | Complex analytical queries, running totals, rankings, and lag/lead calculations in pure SQL |

| Table partitioning | Automatic partition pruning for time-series and high-volume tables |

| Foreign Data Wrappers (FDW) | Query external data sources (MySQL, MongoDB, S3, CSV) as if they are local tables |

| PostGIS extension | Full geospatial data support: points, polygons, spatial indexing, and geographic queries |

| Logical replication | Replicate specific tables to downstream databases for selective data distribution |

| Row-level security (RLS) | Database-enforced access control at the row level, independent of application logic |

When PostgreSQL Is the Right Choice

PostgreSQL is the natural default for applications where data integrity is the primary concern and query complexity is expected to grow over time. Financial systems, e-commerce order management, healthcare record systems, SaaS platforms with complex billing relationships, and any application where the cost of data corruption is high are all strong PostgreSQL use cases.

The JSONB column type makes PostgreSQL viable for workloads that require flexible schema alongside relational data, reducing the argument for a separate document database in many mixed-workload scenarios. An application that stores structured order data relationally and attaches arbitrary metadata to each order as JSONB can handle both requirements in a single PostgreSQL instance.

PostgreSQL’s full-text search, while not a replacement for Elasticsearch at very large scale, handles search workloads up to tens of millions of rows without external dependencies. For applications where search is one feature among many rather than the core product, avoiding a separate search infrastructure layer reduces operational complexity significantly.

PostgreSQL Limitations to Understand

PostgreSQL’s process-per-connection model makes it memory-intensive under high connection counts. A deployment receiving 5,000 concurrent connections without a pooler can exhaust server memory before any application work is done. PgBouncer or pgpool-II are not optional in high-concurrency production environments; they are required infrastructure.

Horizontal write scaling requires application-level sharding or extensions like Citus, which transforms PostgreSQL into a distributed database. Native PostgreSQL does not distribute write load across nodes the way MongoDB does. For write-heavy workloads that genuinely exceed single-node capacity, this requires additional architectural investment.

Need Help Choosing the Right Database Architecture?

MySQL: The Battle-Tested Web Database

Architecture and Historical Context

MySQL, originally developed by MySQL AB in the mid-1990s and now maintained by Oracle, became the dominant database for web applications during the LAMP stack era. Its reputation for simplicity, speed on read-heavy workloads, and the enormous ecosystem of tutorials, managed hosting options, and developer familiarity built around it made it the first database most web developers encountered.

MySQL’s architecture centers on its pluggable storage engine model. The InnoDB storage engine, the default since MySQL 5.5, provides ACID transactions, row-level locking, and foreign key enforcement. The MyISAM engine, now largely historical, provided faster read performance without transaction support. The storage engine abstraction gave MySQL flexibility in how it handled different workload types, though in modern usage InnoDB handles the overwhelming majority of production workloads.

MySQL’s replication architecture is mature and extensively documented. Its binary log-based replication supports multiple replicas per primary, delayed replicas for point-in-time recovery, and read replica fan-out for distributing read load. The Group Replication and MySQL InnoDB Cluster features provide high-availability configurations with automatic failover.

MySQL Strengths in Production

| Strength | Detail |

|---|---|

| Read replica scaling | Mature binary log replication, easy fan-out to multiple read replicas |

| Managed cloud availability | First-class support on AWS RDS, Aurora, Google Cloud SQL, Azure Database |

| WordPress and CMS ecosystem | Dominant database for WordPress, Drupal, Joomla, Magento, and most PHP CMSs |

| Operational familiarity | Largest pool of database administrators and developer familiarity globally |

| Aurora compatibility | AWS Aurora’s MySQL-compatible engine offers up to 5x throughput on read-heavy workloads |

MySQL vs. PostgreSQL: Where the Lines Fall

The practical differences between MySQL and PostgreSQL have narrowed significantly over the past decade, but meaningful distinctions remain in specific areas.

| Dimension | MySQL | PostgreSQL |

|---|---|---|

| Default transaction isolation | Repeatable Read | Read Committed |

| JSON support | JSON column type, limited indexing | JSONB with full GIN/GiST indexing |

| Full-text search | Basic FULLTEXT index | tsvector with language-aware stemming |

| Window functions | Supported since MySQL 8.0 | Comprehensive, available longer |

| Geospatial support | Basic spatial types and functions | PostGIS extension: production-grade |

| Extensibility | Limited plugin model | Rich extension ecosystem (1,000+ extensions) |

| Standards compliance | Moderate SQL standard compliance | Very high SQL standard compliance |

| Connection handling | Thread-per-connection (lighter) | Process-per-connection (heavier) |

When MySQL Is the Appropriate Choice

MySQL is a strong choice when the application is being built on a technology stack where MySQL integration is deeply established, particularly PHP-based applications, WordPress sites, Magento e-commerce platforms, and any application that needs to run on shared hosting environments where MySQL is the only available database option.

The AWS Aurora MySQL-compatible engine is a compelling choice for applications already running on AWS infrastructure that need higher throughput on read-heavy workloads without re-architecting for PostgreSQL. Aurora’s storage layer is decoupled from the compute layer, allowing read replicas to serve data from the same underlying storage without traditional replication lag, and its auto-scaling read replica feature adjusts replica count automatically based on connection load.

Teams inheriting existing MySQL codebases should generally continue with MySQL unless a specific technical requirement, full-text search quality, PostGIS geospatial support, or complex JSON querying, makes the migration overhead worth the investment.

MongoDB: The Document Database for Flexible-Schema Workloads

Architecture and Data Model

MongoDB stores data as BSON documents within collections. A collection is roughly analogous to a relational table, but the analogy breaks down quickly: documents within the same collection can have entirely different fields, nested objects, and arrays at any depth. This schema flexibility is MongoDB’s defining characteristic and the source of both its primary advantage and its primary risk.

MongoDB’s distributed architecture was designed from the ground up for horizontal scaling. Sharding distributes documents across multiple shards based on a shard key, which determines how data is partitioned. Each shard is typically a replica set: a primary node that handles writes and a configurable number of secondary nodes that can serve reads. The mongos router transparently routes queries to the appropriate shards, making the distributed cluster appear as a single database to the application.

MongoDB’s Genuine Strengths

Schema flexibility is MongoDB’s clearest advantage in situations where the data model is genuinely variable or evolving rapidly. A product catalog where different product categories (electronics, clothing, perishables) have completely different attribute sets is a natural MongoDB use case. Storing all product types in a single relational table requires either nullable columns for every category-specific attribute, a complex entity-attribute-value schema, or a JSONB column in PostgreSQL. MongoDB’s document model handles this natively without schema complexity.

The aggregation pipeline is MongoDB’s answer to SQL analytics. It chains transformation stages: match, group, sort, project, lookup (left outer join equivalent), unwind (deconstructs arrays), and facet (multi-dimensional grouping). For document-centric analytics, the pipeline is expressive and performs well. For complex relational analytics involving many collections, SQL window functions and CTEs in PostgreSQL tend to be more expressive and better optimized.

| MongoDB Strength | Where It Delivers Real Value |

|---|---|

| Variable schema per document | Product catalogs, IoT event streams, CMS with custom fields |

| Embedded document model | One-to-few relationships that are always queried together |

| Horizontal write sharding | Write-heavy workloads that exceed single-node capacity |

| Atlas Search (Lucene-based) | Integrated full-text and vector search without separate infrastructure |

| Change streams | Real-time event streaming from database operations to downstream consumers |

| Time-series collections | Native optimized storage for time-series workloads (added in MongoDB 5.0) |

MongoDB Limitations That Matter in Practice

MongoDB’s multi-document transaction support, added in version 4.0, provides ACID guarantees across multiple documents and collections. However, multi-document transactions in MongoDB carry higher performance overhead than in relational databases and should be used selectively rather than as a default. Applications that require frequent cross-entity transactions as a fundamental part of their data model are better served by a relational database designed around that use case.

The absence of enforced schema at the database level is a double-edged characteristic. In teams with strong data discipline and application-layer validation, it accelerates development. In teams without that discipline, it produces data quality problems that accumulate silently and become increasingly expensive to correct as the dataset grows. Schema validation through MongoDB’s $jsonSchema validator and frameworks like Mongoose for Node.js applications mitigate this risk but require deliberate adoption.

The JOIN equivalent in MongoDB (the $lookup aggregation stage) is less performant than relational JOINs for complex multi-collection queries. MongoDB’s document model assumes that data that is frequently queried together is embedded together, rather than normalized into separate collections and joined at query time. Applications that normalize MongoDB data heavily because the team is accustomed to relational design often end up with the operational overhead of MongoDB without the performance benefits of its embedded document model.

Need Help Choosing the Right Database Architecture?

Head-to-Head: Core Dimension Comparison

ACID Compliance and Transaction Model

| Database | Transaction Scope | Isolation Levels |

|---|---|---|

| PostgreSQL | Full multi-table ACID with SSI | Read Committed, Repeatable Read, Serializable |

| MySQL (InnoDB) | Full multi-table ACID | Read Uncommitted, Read Committed, Repeatable Read, Serializable |

| MongoDB | Multi-document ACID (v4.0+) | Snapshot isolation within transactions |

Query Language and Expressiveness

| Capability | PostgreSQL / MySQL | MongoDB |

|---|---|---|

| Ad-hoc queries | Full SQL: any filter, join, aggregation without schema change | Aggregation pipeline, MQL: expressive but document-centric |

| Multi-entity joins | Native JOIN with optimizer support | $lookup available, limited optimizer support |

| Analytical queries | Window functions, CTEs, materialized views | Aggregation pipeline, $facet, limited window equivalents |

| Full-text search | Built-in (PostgreSQL tsvector / MySQL FULLTEXT) | Atlas Search (Lucene, requires Atlas or self-managed) |

| Geospatial queries | PostGIS (PostgreSQL), basic spatial (MySQL) | 2dsphere index, GeoJSON support |

Scaling Architecture

| Scaling Type | PostgreSQL | MySQL |

|---|---|---|

| Read scaling | Read replicas (streaming replication) | Read replicas (binary log replication / Aurora) |

| Write scaling | Application sharding or Citus extension | Application sharding or Vitess |

| Auto-sharding | Not native (Citus or manual) | Not native (Vitess or manual) |

| MongoDB Scaling | Characteristics |

|---|---|

| Read scaling | Secondary reads, read preference routing |

| Write scaling | Native horizontal sharding with auto-balancing |

| Auto-sharding | Native, managed by mongos router and config servers |

Operational and Cloud Ecosystem

| Dimension | PostgreSQL | MySQL |

|---|---|---|

| Managed cloud services | AWS RDS, Aurora PostgreSQL, Google Cloud SQL, Azure | AWS RDS, Aurora MySQL, Google Cloud SQL, Azure |

| Kubernetes operators | CloudNativePG, Zalando operator, Crunchy Data | MySQL Operator for Kubernetes, Percona Operator |

| Backup tools | pgBackRest, Barman, WAL-G | Percona XtraBackup, mysqldump, binary log backup |

| Connection pooling | PgBouncer, pgpool-II | ProxySQL, MySQL Router |

| MongoDB Operations | Detail |

|---|---|

| Managed cloud | MongoDB Atlas (multi-cloud: AWS, GCP, Azure) |

| Kubernetes operator | MongoDB Community and Enterprise operators |

| Backup | Atlas continuous backup, mongodump, Ops Manager |

Performance Characteristics by Workload Type

Performance comparisons between PostgreSQL, MySQL, and MongoDB are only meaningful in the context of a specific workload. General benchmark numbers without workload context mislead more than they inform. The following breakdown maps workload types to typical performance characteristics based on production behavior patterns.

| Workload Type | Best Performer | Reasoning |

|---|---|---|

| Simple key-value lookups (PK) | MySQL (marginally) or MongoDB | Thread model and document fetch are efficient for direct lookups |

| Complex analytical queries | PostgreSQL | Window functions, CTEs, query planner maturity |

| High-concurrency writes (single node) | MySQL InnoDB or PostgreSQL | Both handle concurrent writes well with MVCC |

| Horizontal write scaling beyond one node | MongoDB | Native sharding without extensions |

| Full-text search at moderate scale | PostgreSQL (tsvector) | No external dependency, good enough for millions of rows |

| Variable-schema document storage | MongoDB | Native document model, no ALTER TABLE required |

| Financial / transactional workloads | PostgreSQL | SSI, mature ACID, reliable constraint enforcement |

| Read-heavy with many replicas | MySQL Aurora | Aurora storage decoupling eliminates replication lag |

Indexing Strategies Across All Three Databases

Indexing is the single most impactful factor in query performance after schema design. Each database supports a different indexing vocabulary that reflects its underlying data model.

PostgreSQL Indexing

PostgreSQL supports the broadest set of index types of any open-source database. B-tree indexes serve the standard case: equality and range queries on scalar values. GIN (Generalized Inverted Index) indexes support JSONB containment queries, full-text search, and array operations. GiST (Generalized Search Tree) indexes support geometric and geospatial queries through PostGIS. BRIN (Block Range Index) indexes provide very compact indexing for large tables where values are physically ordered, such as time-series tables where new rows are always appended with increasing timestamps.

| PostgreSQL Index Type | Best Used For |

|---|---|

| B-tree (default) | Equality, range, ORDER BY on scalar columns |

| GIN | JSONB containment, array operations, full-text search |

| GiST | Geometric shapes, PostGIS spatial data, range types |

| BRIN | Large append-only tables with naturally ordered values (timestamps) |

| Hash | Equality-only lookups where range queries never occur |

| Partial index | Index a subset of rows matching a condition (e.g., WHERE status = active) |

MongoDB Indexing

MongoDB indexes operate on document fields, including fields nested inside embedded documents and array elements. A single-field index on a top-level field behaves identically to a relational B-tree index. A multikey index on an array field indexes each element of the array individually, allowing queries that filter by array contents to use the index. A compound index covers multiple fields, and its field order determines which query patterns it supports, following the same ESR (Equality, Sort, Range) rule that applies to relational compound indexes.

| MongoDB Index Type | Best Used For |

|---|---|

| Single field | Equality and range queries on one field |

| Compound | Queries filtering and sorting on multiple fields |

| Multikey | Queries filtering by values inside array fields |

| Text (Atlas Search preferred) | Full-text search within string fields |

| 2dsphere | Geospatial queries on GeoJSON coordinates |

| Partial | Index documents matching a filter expression |

Need Help Choosing the Right Database Architecture?

Selecting a Database for Specific Application Types

SaaS Platform with Multi-Tenancy

Multi-tenant SaaS applications commonly store data for hundreds or thousands of customer accounts within the same database. The isolation model (schema-per-tenant, row-level-security-per-tenant, or database-per-tenant) has significant implications for database selection. PostgreSQL’s row-level security feature enforces tenant isolation at the database level, making it difficult for a query to accidentally return rows belonging to a different tenant. This database-enforced guarantee is materially stronger than application-level filtering alone.

For SaaS platforms with complex subscription billing, usage-based pricing calculations, and audit trail requirements, PostgreSQL’s full ACID compliance and window function support for usage aggregation make it the natural choice.

E-Commerce Platform

E-commerce order management requires strict transaction integrity: inventory decrements, order creation, and payment records must succeed or fail atomically. Both PostgreSQL and MySQL InnoDB provide the multi-table transaction support this requires. MySQL remains popular for e-commerce because of the Magento ecosystem and the widespread availability of managed MySQL hosting.

MongoDB’s document model can handle product catalog management effectively, particularly for catalogs with highly variable attribute sets across product categories. A hybrid architecture with PostgreSQL handling order management and transactional data while MongoDB stores the product catalog is a pattern used in large e-commerce platforms, though the operational complexity of maintaining two database systems requires justification by the scale of the catalog variability problem.

The custom e-commerce solutions built by Askan Technologies across B2B and B2C platforms leverage both MySQL and PostgreSQL depending on the platform’s existing ecosystem, hosting requirements, and the complexity of the inventory and order management layer.

Real-Time Application with High Write Throughput

Applications that ingest events at high volume, IoT telemetry, user activity streams, financial tick data, or chat message history, can overwhelm single-node write capacity at sufficient scale. MongoDB’s native horizontal sharding handles this class of workload more naturally than PostgreSQL or MySQL, which require application-level sharding or third-party tooling like Vitess or Citus to achieve comparable write distribution.

MongoDB 5.0’s native time-series collections provide automatic bucketing, compressed storage, and a query optimizer aware of time-series access patterns that significantly outperforms general-purpose collection storage for this workload type.

Content Management and Headless CMS

Content management systems with user-defined content types, custom fields, and multilingual content structures benefit from document database flexibility. Each content type definition can map to a different document schema without requiring schema migrations every time an editor adds a custom field. This is a case where MongoDB or PostgreSQL with JSONB genuinely eliminates operational friction compared to a strictly normalized relational schema.

The web application development practice at Askan Technologies frequently encounters CMS and content-heavy application requirements where the choice between a structured relational schema and a flexible document model directly affects the speed of content model evolution during early-stage product development.

The Polyglot Persistence Pattern

Large production systems rarely use a single database for all their data. The polyglot persistence pattern assigns different data stores to different data types based on access patterns and consistency requirements. A single application might use PostgreSQL for transactional business data, MongoDB for product catalog and content, Redis for session storage and caching, and Elasticsearch for full-text search.

The benefit of polyglot persistence is that each data type is served by the most appropriate store. The cost is operational: each additional database adds infrastructure to manage, monitor, back up, and upgrade. Each adds a failure mode, a connection pool, and a expertise requirement for the engineering team.

The pragmatic approach is to begin with the single database that best fits the primary data model of the application and add specialized stores only when a specific capability gap becomes a genuine constraint. Starting with PostgreSQL for a transactional application and adding Redis for session caching when connection overhead becomes measurable is sensible incremental architecture. Starting with three databases on day one because the architecture diagram looks comprehensive is premature complexity.

| Data Type | Recommended Store | Rationale |

|---|---|---|

| Transactional business data | PostgreSQL | ACID, complex queries, referential integrity |

| Session and ephemeral data | Redis | Sub-millisecond access, TTL support, no persistence needed |

| Full-text search at scale | Elasticsearch / OpenSearch | Inverted index at billion-document scale |

| Variable-schema documents | MongoDB or PostgreSQL JSONB | Depends on whether relational data coexists |

| Time-series metrics | TimescaleDB (PostgreSQL extension) or InfluxDB | Compression and time-range query optimization |

| Geospatial data | PostgreSQL + PostGIS | Most capable open-source geospatial engine |

Decision Framework: Choosing Among the Three

The following questions form a practical decision path for database selection at the architecture stage.

| Question | Implication for Database Choice |

|---|---|

| Does your data have well-defined relationships that must be enforced? | Yes: relational database. Strong case for PostgreSQL. |

| Do you need complex analytical queries across multiple entities? | Yes: PostgreSQL for window functions and CTEs. |

| Is your schema genuinely variable across records in the same collection? | Yes: MongoDB or PostgreSQL JSONB. |

| Do you need horizontal write scaling beyond a single node today? | Yes: MongoDB with sharding, or plan for Citus/Vitess. |

| Is your stack PHP-based or running on a platform with MySQL defaults? | MySQL is often the path of least resistance. |

| Do you need geospatial queries at any meaningful complexity? | PostgreSQL with PostGIS is the clear choice. |

| Is data integrity under concurrent writes the highest priority? | PostgreSQL with Serializable Snapshot Isolation. |

| Is this a new project with an evolving data model in early stages? | MongoDB or PostgreSQL both work; team familiarity decides. |

One consideration that this framework cannot capture is team expertise. A team with three senior PostgreSQL engineers and no MongoDB experience will deliver a better outcome with PostgreSQL even for a workload that theoretically maps better to MongoDB. Database selection that ignores operational expertise typically produces systems that are architecturally optimal and operationally mismanaged.

For teams evaluating architecture decisions at scale, the database comparison insights in this article connect directly to the broader data infrastructure discussion covered in the upcoming March 26 article on data warehouse, data lake, and data lakehouse architectures, part of the ongoing Database Architecture and Performance series on the Askan Technologies blog.

Most popular pages

From Prototype to Production: The Engineering Checklist That Actually Matters

Prototypes lie. They perform well in demos because they are not doing any of the work that production systems actually do. There is no...

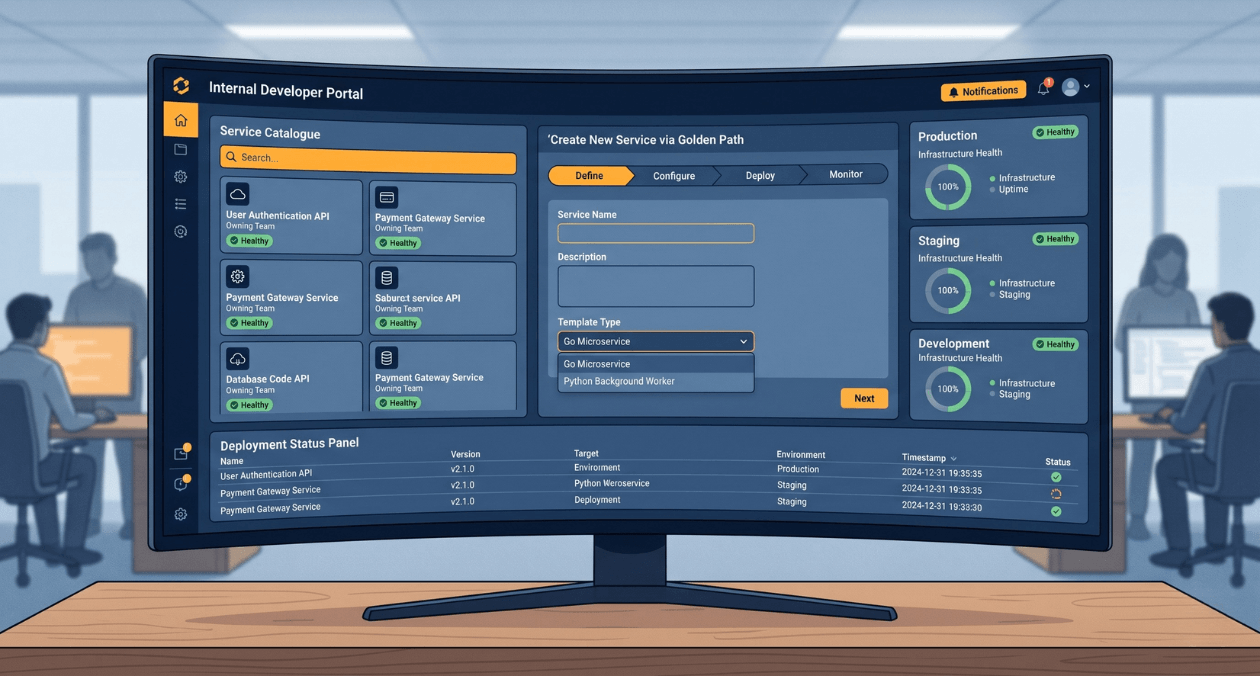

Building a Developer Experience (DX) Platform: From Golden Paths to Self-Service Infrastructure

There is a measurement problem at the heart of platform engineering. The people who benefit most from a well-built internal developer platform are often...

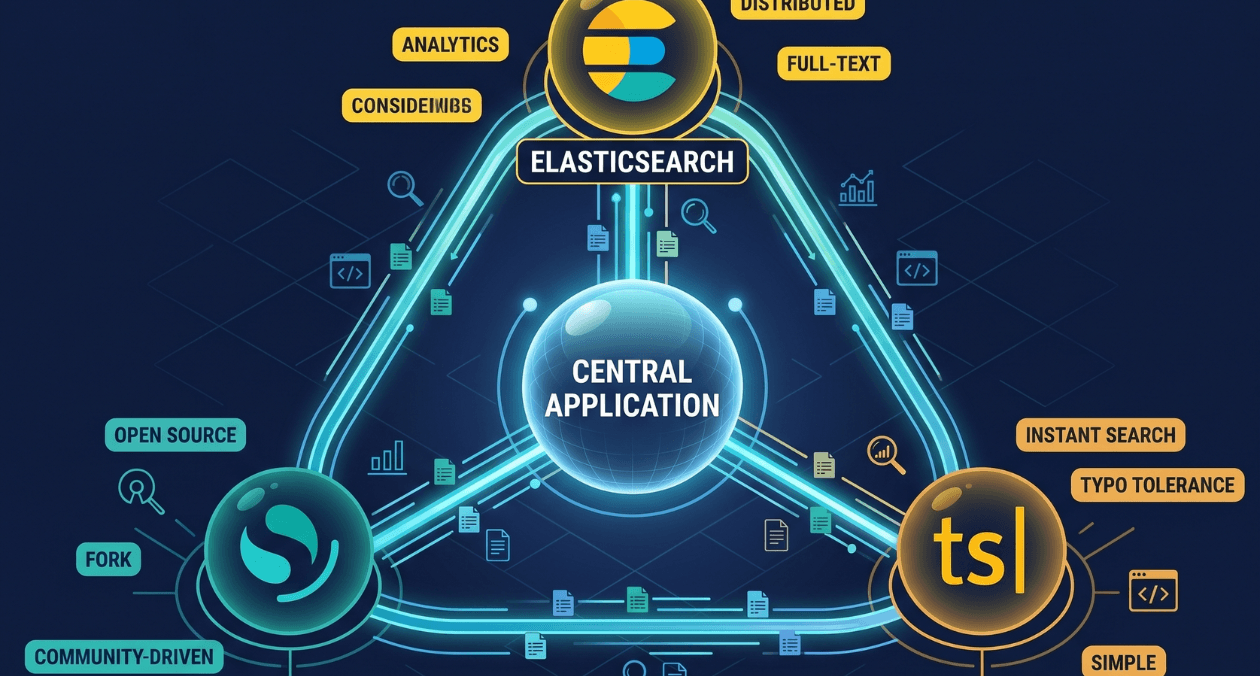

Search Infrastructure for Applications: Elasticsearch vs OpenSearch vs Typesense

Search is one of those features that seems straightforward until you try to build it properly. A basic LIKE query handles small datasets. The...