Rust for Backend Services: When to Use It and When to Stick with Go or Node

The backend language debate has never been more alive than it is in 2026. Rust has gone from a systems programming curiosity to a serious contender for production backend services at companies ranging from early-stage startups to engineering organizations running hundreds of microservices. The question is no longer whether Rust is capable; it clearly is. The more useful question for backend engineers, CTOs, and tech leads is a practical one: when does the tradeoff actually make sense?

Go and Node.js have matured tremendously. Go ships with battle-tested concurrency primitives, a fast compile cycle, and an ecosystem that has stabilized around tooling and observability. Node.js has shed its early reputation for callback hell and now powers some of the most performant API gateways and streaming services on the internet. Neither is going away. So choosing Rust for your next backend service is a genuine architectural decision, not just a technology trend to chase.

This article provides a decision framework built around real engineering constraints. We look at where Rust genuinely wins, where Go and Node still hold the advantage, and the signals that should guide your choice. If you are working through broader technology stack decisions, the software development technology trends landscape in 2025 and beyond offers useful context for where backend language choices fit within the larger picture.

What Makes Rust Different at the Backend Level

Rust was designed around a single guarantee: memory safety without a garbage collector. Most mainstream alternatives either rely on a GC (Go, Node via V8) or on developer discipline (C, C++). Rust's compile-time ownership model rules out entire classes of bugs in safe code: use-after-free, data races, and null pointer dereferences (safe Rust has no null references; absence is modeled with the Option type). Out-of-bounds accesses are caught by bounds checks and end in a panic rather than memory corruption. At runtime, there is no GC pause, no heap scan, and no GC pressure under load.
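To make the data-race claim concrete, here is a minimal sketch using only the standard library. The function name `parallel_count` is hypothetical and exists only for illustration. The point is that a counter shared across threads compiles only when it is wrapped in a thread-safe type such as `Arc<Mutex<_>>`; handing a plain mutable reference to several spawned threads would be rejected at compile time.

```rust
use std::sync::{Arc, Mutex};
use std::thread;

/// Hypothetical helper: increments a shared counter from `workers` threads,
/// `per_worker` times each, and returns the final value.
fn parallel_count(workers: usize, per_worker: u64) -> u64 {
    // Arc provides shared ownership across threads; Mutex serializes access.
    let counter = Arc::new(Mutex::new(0u64));

    let handles: Vec<_> = (0..workers)
        .map(|_| {
            let counter = Arc::clone(&counter);
            thread::spawn(move || {
                for _ in 0..per_worker {
                    // The lock guard is dropped at the end of this statement.
                    *counter.lock().unwrap() += 1;
                }
            })
        })
        .collect();

    // Wait for every worker before reading the result.
    for handle in handles {
        handle.join().unwrap();
    }

    let total = *counter.lock().unwrap();
    total
}
```

Because every increment happens under the lock, the result is deterministic regardless of how the threads are scheduled.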

For backend services, this matters most when your system has tight latency constraints, high sustained throughput, or predictable memory behavior requirements. A service that handles financial transaction processing, real-time gaming state, embedded analytics, or low-latency order routing benefits from the absence of GC pauses in ways that are difficult to achieve with Go or Node without architectural gymnastics.

Rust’s async model, built around the Tokio runtime and the async/await syntax, is now mature enough for serious production use. Frameworks like Axum, Actix Web, and Warp give engineering teams solid foundations for HTTP services, and the ecosystem around database access (SQLx, Diesel), serialization (serde), and observability (OpenTelemetry, tracing) has reached a level of stability that was missing even two years ago.

Where Go Still Has the Edge

Go was built for engineers. The language is deliberately simple, the toolchain is excellent, and the learning curve is among the lowest for any compiled language. Go’s concurrency model, built on goroutines and channels, is both approachable and powerful. For most distributed systems work, Go is the pragmatic choice that gets teams productive quickly without giving up meaningful performance.

Go wins clearly in the following scenarios. First, when your team is hiring broadly and you need engineers to contribute quickly, Go’s simplicity is a real operational advantage. Second, when you are building CLI tooling, infrastructure agents, or Kubernetes operators, Go has a rich ecosystem and community convention that Rust cannot match yet. Third, when development velocity is the primary constraint, Go’s fast compile times and readable error messages reduce friction in daily engineering work.

There is also the ecosystem depth argument. The Go ecosystem for cloud-native tooling is enormous. If you are working with Kubernetes, Envoy, Terraform, or most of the modern infrastructure toolchain, Go is the native language. That gravitational pull matters when your backend services need to integrate tightly with platform-level infrastructure.

Rust vs Go: Key Tradeoffs at a Glance

| Dimension | Rust | Go |
|---|---|---|
| Memory model | Ownership, no GC, zero runtime pauses | GC with low-latency tuning options |
| Learning curve | Steep, borrow checker takes time | Gentle, readable by design |
| Concurrency | async/await via Tokio, threads | Goroutines and channels, ergonomic |
| Compile time | Slower, improves with incremental builds | Fast, developer-friendly |
| Ecosystem maturity | Growing fast, still catching up in places | Deep, especially for cloud-native work |
| Best for | Latency-critical, memory-sensitive services | Distributed systems, infrastructure, APIs |

Where Node.js Still Makes Sense in 2026

Node.js has a reputation that often works against it in architecture discussions, but its production footprint tells a different story. A large portion of high-traffic API gateways globally run on Node.js, and edge platforms such as Cloudflare Workers and Vercel’s edge functions run JavaScript on V8-based runtimes that share much of its programming model. The event loop model is genuinely excellent for I/O-bound workloads where the bottleneck is waiting on databases, external APIs, or file operations rather than CPU computation.

The case for Node.js in 2026 comes down to three things: JavaScript and TypeScript fluency across the whole engineering team, which enables full-stack knowledge sharing; the npm ecosystem, which offers the widest library surface of any backend runtime; and the developer experience of frameworks like Fastify, Hono, and NestJS, which have become genuinely production-grade.

Node.js does not perform well for CPU-intensive workloads. Video transcoding, cryptographic operations at scale, machine learning inference, or any operation that saturates a single thread will expose Node’s limitations quickly. In those cases, offloading to a sidecar service written in Rust or Go is a common architectural pattern that preserves the productivity advantages of Node while not letting its constraints become a system-wide bottleneck.
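The same principle applies inside the sidecar itself: CPU-heavy work should run away from whatever thread accepts requests. The sketch below shows one way to do that with standard-library threads and channels. Both function names are hypothetical, and `checksum` (an FNV-1a hash) is only a stand-in for real CPU-bound work such as transcoding or cryptography.

```rust
use std::sync::mpsc;
use std::thread;

/// Stand-in for CPU-heavy work (hypothetical): a 64-bit FNV-1a hash.
fn checksum(payload: &[u8]) -> u64 {
    let mut hash: u64 = 0xcbf29ce484222325; // FNV offset basis
    for byte in payload {
        hash ^= u64::from(*byte);
        hash = hash.wrapping_mul(0x100000001b3); // FNV prime
    }
    hash
}

/// Sends each payload to a dedicated worker thread and collects the
/// results in submission order.
fn process_off_thread(payloads: Vec<Vec<u8>>) -> Vec<u64> {
    let (job_tx, job_rx) = mpsc::channel::<Vec<u8>>();
    let (result_tx, result_rx) = mpsc::channel::<u64>();

    let worker = thread::spawn(move || {
        // The loop ends once the job sender has been dropped.
        for payload in job_rx {
            result_tx.send(checksum(&payload)).unwrap();
        }
    });

    let count = payloads.len();
    for payload in payloads {
        job_tx.send(payload).unwrap();
    }
    drop(job_tx); // closing the channel lets the worker exit

    let results: Vec<u64> = result_rx.iter().take(count).collect();
    worker.join().unwrap();
    results
}
```

A single worker keeps the example short; a real service would more likely use a pool sized to the available cores.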

The Decision Framework: Signals That Should Guide Your Choice

Rather than picking a language based on benchmarks alone, the more useful approach is to map the choice against the specific constraints of your service. Here is a framework built around the signals that matter most in practice.

Choose Rust When

Your service has hard latency SLAs in the sub-millisecond range and GC pauses are a real risk to those SLAs. Your service runs at sustained high throughput with memory constraints, such as embedded analytics, edge computing, or telemetry ingestion. Your team has or is willing to invest in Rust expertise and the service will live in production for a long time, making the upfront investment worthwhile. You need to eliminate a class of memory safety bugs and the correctness guarantees matter more than velocity in the early phases.

Choose Go When

You are building a service that needs to be maintained by a broader pool of engineers over time. Your service is a microservice with moderate throughput requirements and you need it shipped and iterated on quickly. You are building infrastructure tooling, background workers, or Kubernetes-adjacent services where Go’s ecosystem dominates. Your team has existing Go expertise and the switching cost to Rust is not justified by the performance delta.

Choose Node.js When

Your service is I/O-bound and the team is primarily TypeScript-fluent. You are building a BFF (Backend for Frontend) layer, a GraphQL gateway, or a webhook handler where developer velocity and ecosystem access matter more than raw throughput. You are iterating rapidly on a product and need the fastest possible feedback loop from code to production. The workload does not have CPU-intensive requirements that would expose the event loop’s constraints.
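The three sets of signals above can be read as a small decision function. The sketch below is one possible encoding, not a definitive rule: the type and field names are hypothetical, only a subset of the signals is modeled, and treating Go as the default when neither the Rust nor the Node.js signals apply is a simplification of the criteria.

```rust
/// Languages compared in this article.
#[derive(Debug, PartialEq, Eq, Clone, Copy)]
enum Language {
    Rust,
    Go,
    NodeJs,
}

/// Hypothetical description of a service; field names are illustrative.
struct ServiceProfile {
    /// Hard sub-millisecond latency SLA where GC pauses are a real risk.
    sub_millisecond_sla: bool,
    /// Sustained high throughput under tight memory limits.
    memory_constrained_throughput: bool,
    /// The team has, or is willing to invest in, Rust expertise.
    rust_investment: bool,
    /// The workload mostly waits on databases or external APIs.
    io_bound: bool,
    /// The team is primarily TypeScript-fluent.
    typescript_team: bool,
    /// The workload includes CPU-heavy operations.
    cpu_intensive: bool,
}

/// Maps a profile to a language using a simplified reading of the signals.
fn recommend(p: &ServiceProfile) -> Language {
    // Rust needs both a real performance constraint and team readiness.
    let rust_signal = p.sub_millisecond_sla || p.memory_constrained_throughput;
    if rust_signal && p.rust_investment {
        return Language::Rust;
    }
    // Node.js fits I/O-bound work on TypeScript teams without CPU-heavy paths.
    if p.io_bound && p.typescript_team && !p.cpu_intensive {
        return Language::NodeJs;
    }
    // Assumption: Go is the pragmatic default for everything else.
    Language::Go
}
```

Note that a latency constraint without team readiness falls through to Go here, which reflects the organizational cost of Rust rather than any technical limit.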

Decision Matrix: Service Type vs Language Fit

| Service Type | Recommended Language | Reason |
|---|---|---|
| Low-latency financial processing | Rust | No GC pauses, memory safety |
| Kubernetes operator / infra agent | Go | Rich ecosystem, fast compile, readable |
| GraphQL or REST API gateway | Node.js or Go | I/O-bound, fast development |
| Real-time game state server | Rust | Deterministic performance |
| Background job processing | Go | Goroutines, simple concurrency |
| Edge function / BFF layer | Node.js | V8 runtime, TS fluency, wide ecosystem |

The Real Cost of Choosing Rust

The decision to adopt Rust for backend services carries costs that go beyond the compile time and borrow checker learning curve. The most significant cost is hiring. The pool of engineers with production Rust experience is smaller than for Go or Node, and the ramp-up time for engineers coming from other languages is measured in months rather than weeks. This is not a reason to avoid Rust, but it is a reason to be honest about the organizational readiness required.

Some ecosystem gaps remain. Certain observability tools, ORM patterns, and cloud SDK integrations are more polished in Go or Node. The async ecosystem in Rust is stabilizing, but the mental model of pinning, Send bounds, and lifetime interactions with async traits still catches engineers who are new to the language. The good news is that the community is aware of these pain points and the tooling around async Rust in particular has improved substantially through 2025 and into 2026.

A pattern that works well for teams wanting to adopt Rust without a full rewrite is starting with a single latency-sensitive service. Identify the one service in your architecture that has the clearest performance constraint, staff it with engineers who are interested in Rust, and treat it as a controlled adoption experiment. This limits risk, builds institutional knowledge, and gives you real production data on whether the performance gains justify the investment in your specific environment. This mirrors the approach that many engineering teams take when adopting any new technology, similar to how teams evaluate performance-driven frontend framework decisions before committing to a full migration.

Polyglot Architecture: Using All Three Together

Framing this as an either/or choice is a useful simplification for decision making, but most mature engineering organizations end up with all three languages serving different parts of their architecture. A common pattern is Node.js or Go handling the API layer and developer-facing surfaces, Go managing infrastructure-adjacent services and background workers, and Rust handling the performance-critical inner loop: the ingestion pipeline, the real-time processing engine, or the data-plane service that sits on the hot path.

The key to making polyglot architecture work is discipline around service boundaries. If each language is contained within a well-defined service with a clear contract (gRPC, HTTP, or a message queue), the polyglot cost is manageable. The problems emerge when language choices bleed across service boundaries or when a team expects engineers to maintain fluency across all three simultaneously. Organizational design and team topology matter as much as technical capability when running multiple languages in production.

According to the 2024 Stack Overflow Developer Survey, Rust continues to be the most admired language among developers, with over 80% of respondents who have used it wanting to continue using it. This is a meaningful signal about developer satisfaction, which can help with retention and with the quality of work on Rust codebases over time.

Operational Considerations Across the Three Runtimes

| Factor | Rust | Go / Node.js |
|---|---|---|
| Binary size | Small, statically linked | Go: small; Node: requires runtime |
| Container startup | Near-instant | Go: fast; Node: moderate |
| Memory footprint | Very low, predictable | Go: low; Node: moderate, variable |
| Observability tooling | Growing, OpenTelemetry support strong | Mature, rich ecosystem both |
| Hiring pool | Smaller, growing | Large for both |

What Engineering Teams Often Get Wrong

The most common mistake is treating the language decision as a performance optimization rather than an architecture decision. Performance is one dimension. But maintainability, team capability, ecosystem fit, and operational overhead are all equally real constraints that affect the long-term cost of the system. A Rust service that runs 30% faster than its Go equivalent but is understood by only two engineers in a team of twenty is not obviously a better engineering outcome.

Another mistake is premature adoption. Many teams have reached for Rust because it felt like the right technical choice, before the real performance bottleneck was understood. Profiling and measurement should always precede language selection. In many cases, the actual bottleneck is database query patterns, network topology, or poor caching strategy rather than the runtime language. Solving the right problem first is more valuable than solving the most technically interesting one.

A related principle applies when teams evaluate cross-platform development frameworks. As explored in the Flutter vs React Native performance comparison, the right tool is often the one that aligns best with your team’s skills and your product’s specific performance requirements rather than the one with the highest benchmark scores.

Making the Call in Practice

If you are a backend engineer or tech lead evaluating this decision today, here is a practical starting point. Profile your current services and identify where latency, throughput, or memory constraints are causing real production problems. Not theoretical ones. Real ones with data behind them. Then map those constraints against the decision framework above.
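As a starting point for that kind of measurement, the sketch below times an operation repeatedly and reports tail latency with a nearest-rank percentile. It uses only the standard library, and the function names `measure` and `percentile` are hypothetical. In production you would more likely read these numbers from your existing tracing or metrics stack; the sketch only shows what a figure such as p99 actually means.

```rust
use std::time::{Duration, Instant};

/// Times `runs` calls of `operation` and returns one latency sample per call.
fn measure<F: FnMut()>(mut operation: F, runs: usize) -> Vec<Duration> {
    (0..runs)
        .map(|_| {
            let start = Instant::now();
            operation();
            start.elapsed()
        })
        .collect()
}

/// Nearest-rank percentile (`pct` in 0..=100) over latency samples.
/// Returns `None` when there are no samples.
fn percentile(samples: &[Duration], pct: usize) -> Option<Duration> {
    if samples.is_empty() {
        return None;
    }
    let mut sorted = samples.to_vec();
    sorted.sort();
    // rank = ceil(pct / 100 * n), computed with integer arithmetic,
    // then clamped to 1..=n and converted to a zero-based index.
    let rank = (pct * sorted.len() + 99) / 100;
    let index = rank.clamp(1, sorted.len()) - 1;
    Some(sorted[index])
}
```

Comparing p50 against p99 for the same endpoint is often more revealing than an average, because GC pauses and similar stalls show up in the tail rather than in the median.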

If Rust emerges as the right fit for one or two services, run a time-boxed experiment. Pick engineers who are motivated to learn, give them three months with a clearly scoped service, and measure the outcome against concrete performance and reliability metrics. Let the data from that experiment inform the broader adoption decision rather than making a top-down call to rewrite existing services.

Rust for backend services in 2026 is no longer a gamble. It is a mature, well-supported choice for the right problems. The engineering skill is knowing which problems those are. Go and Node.js remain excellent choices for the vast majority of backend work that does not require deterministic, GC-free performance. The goal is matching the tool to the constraint, not winning an argument about which language is better in the abstract.

Planning Your Backend Architecture?

Most popular pages

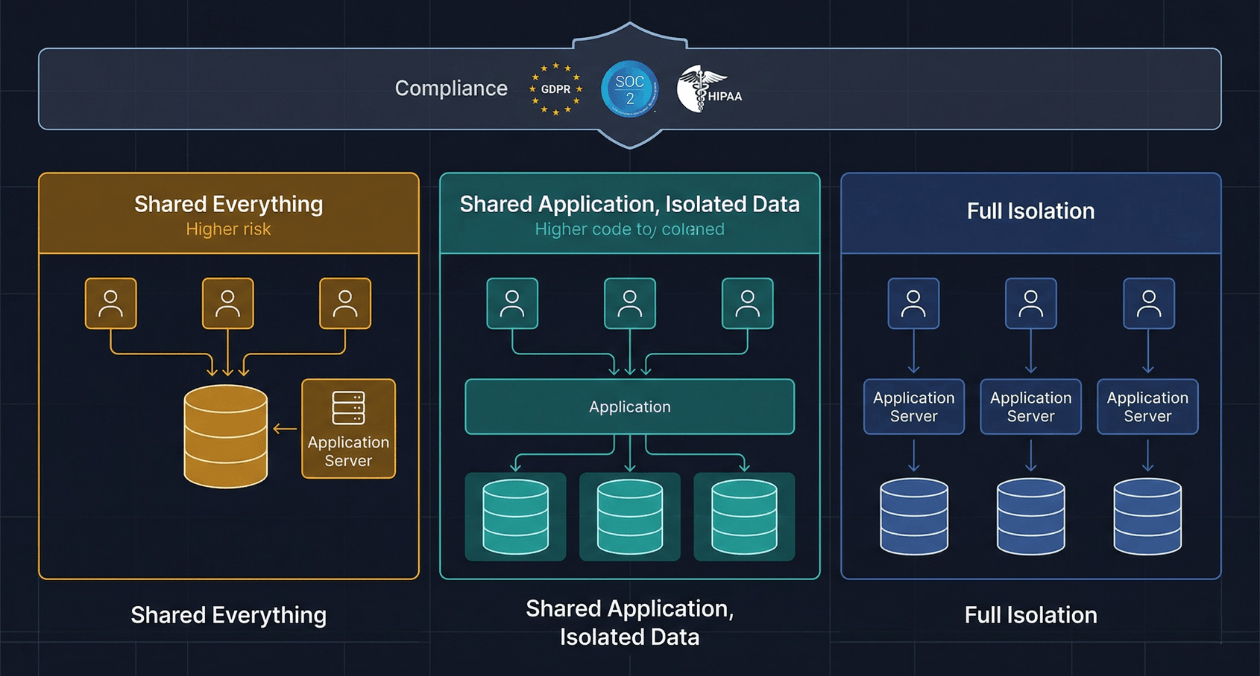

Multi-Tenant SaaS Architecture: Building for Isolation, Scale, and Compliance

Every SaaS product eventually faces the same architectural inflection point. The first version was built for a handful of customers. Data lived in a...

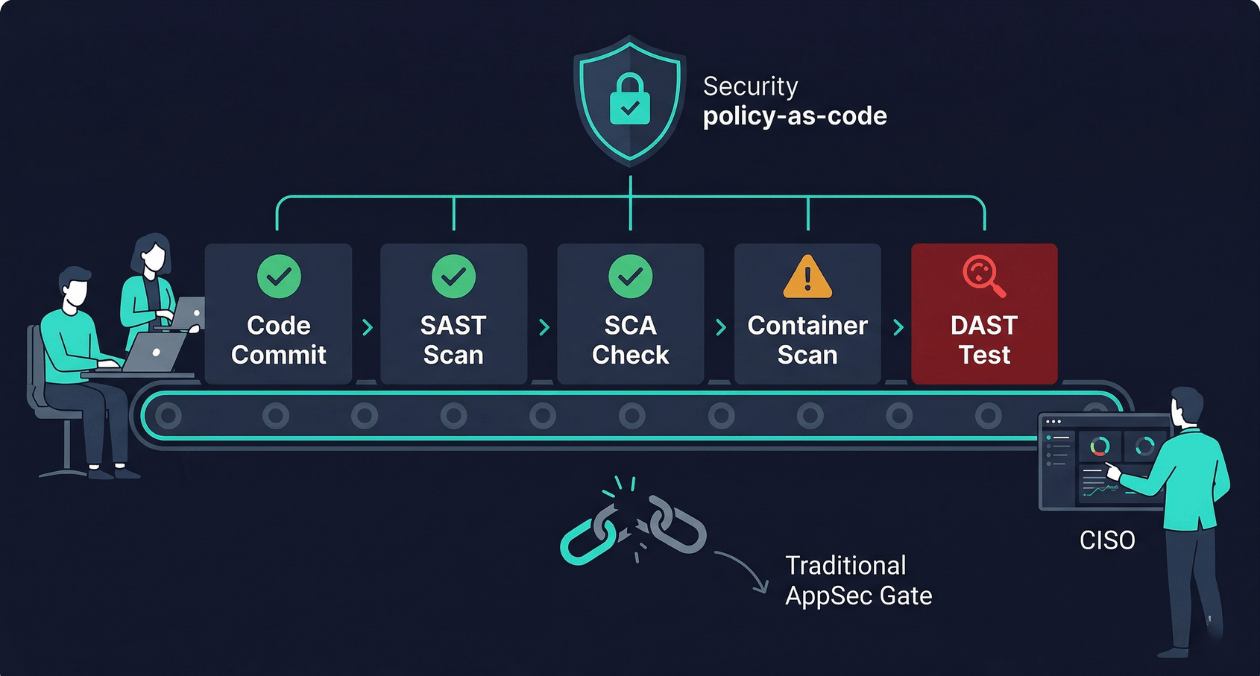

Security as Code: Embedding AppSec Into CI/CD Without Slowing Releases

There is a particular kind of friction that security teams and engineering teams share without ever quite resolving. Engineering wants to ship fast. Security...

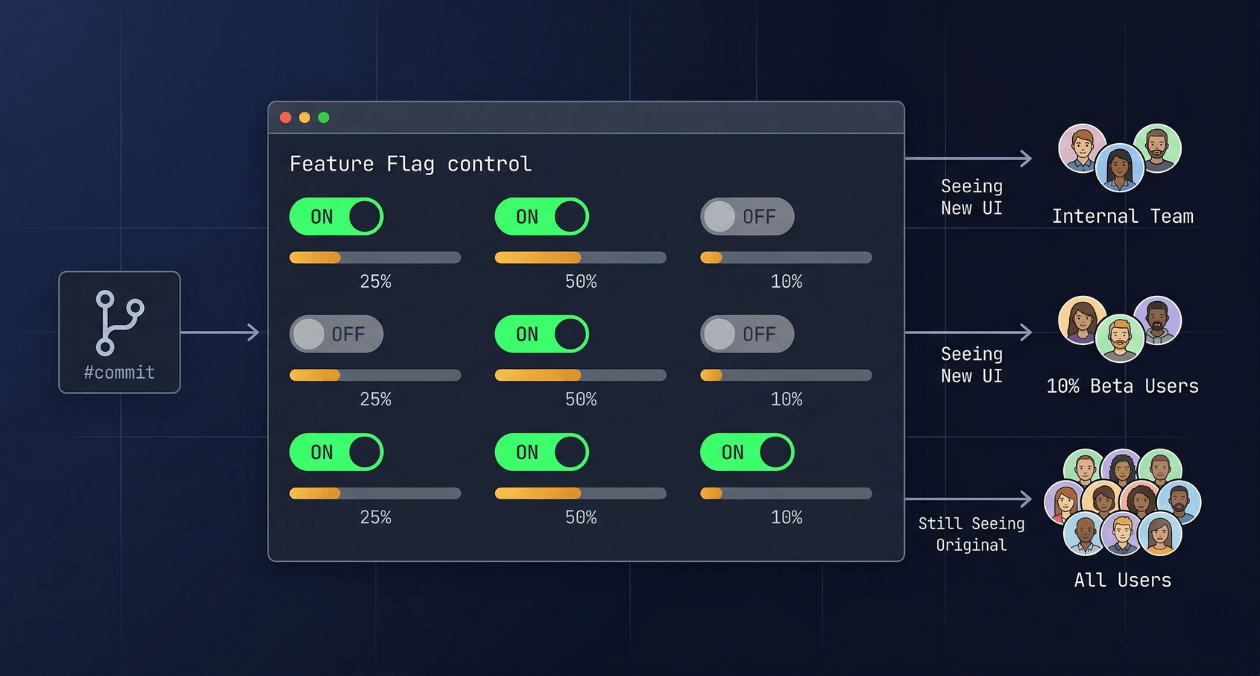

Feature Flags in Production: Progressive Delivery Without the Risk

The deploy button used to mean something definitive. You shipped code, users got the new version, and if something broke you scrambled to roll...