End-to-End Encryption in Modern Applications: Implementation Without the Mistakes

Most applications that describe themselves as encrypted are not end-to-end encrypted. They encrypt data in transit using TLS and encrypt data at rest using a storage-layer key managed by the cloud provider. Both are important and neither is E2EE. In a genuine end-to-end encrypted system, the server processes ciphertext it cannot decrypt. Only the communicating parties hold the keys, and a breach of the server infrastructure exposes no readable message content.

The gap between these two models matters enormously when the threat model includes a malicious insider, a compromised cloud account, or a government subpoena served to the infrastructure provider. Signal, WhatsApp, and ProtonMail built their user trust on this distinction. As engineers building applications that handle sensitive personal, financial, or healthcare data, understanding how E2EE is actually implemented, what it costs operationally, and where it breaks down in practice is becoming a baseline competency rather than a specialist niche. For teams at Askan working on security-critical application architecture, E2EE implementation decisions come up regularly as product teams handle increasing volumes of sensitive user data.

What End-to-End Encryption Actually Means

End-to-end encryption means that data is encrypted on the sender’s device and decrypted only on the recipient’s device. The server in the middle, whether that is a cloud backend, a message relay, or a sync service, handles encrypted data that it cannot read. The encryption keys never leave the endpoint devices in plaintext form.

This is different from transport encryption, which protects data between a client and a server but leaves the server holding plaintext once the TLS connection terminates. It is also different from server-side encryption at rest, where the server encrypts data before writing it to disk but holds or has access to the keys used for encryption. In both of those models, a server compromise or a key disclosure means your data is readable. In a correctly implemented E2EE model, it does not.

| Encryption Model | Who Can Read the Data |
|---|---|
| TLS only (in transit) | Your server in plaintext after TLS terminates; any server-side breach exposes content |
| Encryption at rest (server-managed keys) | Your server via the encryption key; infrastructure breach or key exposure compromises content |
| Client-side encryption (keys on server) | Your server still holds keys; same risk as above despite the client performing the encryption operation |
| True E2EE (keys never leave endpoints) | Only the sender and intended recipient; server compromise exposes no readable content |

That table is worth studying carefully before designing anything. A common mistake in early E2EE implementations is to have the client perform the encryption operation but then transmit the key to the server for storage. That is client-side encryption with server-managed keys, which defeats the purpose entirely.

The Signal Protocol: Why It Became the Industry Reference

The Signal Protocol, developed by Open Whisper Systems and now maintained by the Signal Foundation, is the most widely deployed E2EE messaging protocol in existence. It underpins Signal, WhatsApp, Google Messages, and Meta’s Messenger encrypted mode. Understanding its design is the starting point for any serious E2EE implementation because it solves problems that a naive implementation will miss. The Signal Protocol documentation is publicly available and is the most readable technical reference for the cryptographic primitives involved.

The protocol achieves several properties simultaneously that make it unusually strong. Forward secrecy means that a compromise of the current session key does not expose past messages, because each message is encrypted with a new key derived from a ratcheting mechanism that advances with every message sent and received. Break-in recovery means that if a session key is compromised at a point in time, future messages re-establish security automatically as the ratchet continues to advance. Deniability means that anyone can generate a transcript that looks like a valid Signal conversation, which means a leaked message transcript cannot be cryptographically proven to be authentic.

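Forward secrecy, break-in recovery, and deniability result from composing two mechanisms: the X3DH (Extended Triple Diffie-Hellman) key agreement protocol for session establishment and the Double Ratchet Algorithm for ongoing message encryption. Signal additionally uses Sealed Sender to hide the sender’s identity from the relay server; that is a metadata protection layered on top of the protocol rather than a source of the three properties above.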
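
To make the ratcheting idea concrete, here is a minimal sketch of the symmetric half of a ratchet: a KDF chain in which each step derives a one-off message key and a replacement chain key. The single-byte HMAC inputs follow the pattern suggested in the Double Ratchet specification; the function name and the starting secret are assumptions for illustration. The sketch omits the Diffie-Hellman ratchet that provides break-in recovery.

```python
import hashlib
import hmac


def ratchet_step(chain_key: bytes) -> tuple[bytes, bytes]:
    """Advance a KDF chain once and return (next_chain_key, message_key)."""
    message_key = hmac.new(chain_key, b"\x01", hashlib.sha256).digest()
    next_chain_key = hmac.new(chain_key, b"\x02", hashlib.sha256).digest()
    return next_chain_key, message_key


# Hypothetical starting value; a real session takes it from the key agreement.
chain_key = hashlib.sha256(b"example shared secret").digest()

message_keys = []
for _ in range(3):
    # The old chain key is overwritten, so earlier message keys cannot be
    # recomputed from whatever state remains on the device.
    chain_key, message_key = ratchet_step(chain_key)
    message_keys.append(message_key)
```

Because HMAC is one-way, holding the current chain key does not let an attacker walk the chain backwards, which is the basis of forward secrecy within a session.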

End-to-End Encryption Implementation Guide: Patterns, Key Management, and Common Mistakes to Avoid

Key Management: Where Most E2EE Implementations Actually Fail

The cryptographic primitives in most E2EE implementations are not the source of failure. Elliptic curve Diffie-Hellman key exchange, AES-256-GCM for symmetric encryption, and HKDF for key derivation are well-understood and well-tested in mature libraries. The failure almost always happens in key management: how keys are generated, stored, distributed, rotated, and recovered.

Key Generation on the Device

Cryptographic key pairs must be generated using a cryptographically secure random number generator on the user’s device, not on the server. A server that generates key pairs and sends them to clients is a server that knows the private keys, which is the same as no E2EE. On mobile platforms, iOS provides SecRandomCopyBytes and Android provides java.security.SecureRandom, both of which draw from the operating system’s cryptographically secure random number generator. On the web, the Web Crypto API’s crypto.getRandomValues is the correct interface for random bytes, and crypto.subtle.generateKey generates key pairs directly; both are available in all modern browsers.
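
As a small illustration of the same rule outside the mobile and browser APIs named above, the sketch below uses Python’s secrets module, which reads from the operating system’s CSPRNG. The function name is hypothetical, and the 32-byte default is an assumption that matches the size of an X25519 private key.

```python
import secrets


def generate_private_key_bytes(length: int = 32) -> bytes:
    """Return `length` bytes from the operating system's CSPRNG."""
    # The general-purpose `random` module is predictable and must never be
    # used for key material.
    return secrets.token_bytes(length)


private_key = generate_private_key_bytes()
```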

Private Key Storage

Private keys must be stored in hardware-backed secure storage where available. On iOS, keys stored in the Secure Enclave are protected by the device’s dedicated security hardware and cannot be exported even by the application that created them. On Android, the Android Keystore provides comparable protection when keys are hardware-backed, either by a trusted execution environment or, on devices that have one, by a dedicated secure element (StrongBox); key attestation lets a server check which applies. On the web platform, there is no equivalent to hardware-backed key storage. The Web Crypto API can mark a key as non-extractable so that scripts cannot read its raw bytes, but a key stored in IndexedDB is still protected only by the browser’s process isolation and the operating system’s user account protections.

The absence of hardware-backed key storage on web is one of the reasons that browser-based E2EE applications have a weaker security story than native applications for the same threat model. Teams building E2EE for a web-first audience should document this limitation honestly and consider whether the security level is sufficient for their specific threat model.

Key Backup and Recovery

Key backup is the most difficult design problem in E2EE. If a user’s private keys exist only on their device and the device is lost, stolen, or factory-reset, all their encrypted data becomes permanently unreadable. For a messaging application this means losing message history. For a document management application it means losing access to documents. Neither outcome is acceptable to most users.

The standard approaches to key backup create tension with the E2EE guarantee in different ways. Encrypted key backup to the server, where the key material is encrypted with a passphrase or a recovery code that only the user knows before being stored server-side, is the approach used by Signal and WhatsApp. The server stores ciphertext it cannot decrypt. The user’s backup passphrase is the single point of recovery. If the passphrase is lost, the backup is unrecoverable. This is a genuine security property rather than a limitation, but it requires users to understand and reliably retain their recovery credentials, which consumer applications struggle to achieve without introducing support overhead.
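
A minimal sketch of the first half of that flow, deriving the backup encryption key from the user’s passphrase on the device, might look like the following. PBKDF2 is used only because it is available in every Python build; a memory-hard function such as scrypt or Argon2 is generally a stronger choice. The function names and the iteration count are assumptions, and the step that encrypts the private key with the derived key (using an AEAD cipher from a vetted library) is omitted.

```python
import hashlib
import secrets

# Assumption: tune this to the slowest device the application supports.
PBKDF2_ITERATIONS = 600_000


def new_backup_salt() -> bytes:
    """Random salt, stored next to the encrypted backup; it is not secret."""
    return secrets.token_bytes(16)


def derive_backup_key(passphrase: str, salt: bytes,
                      iterations: int = PBKDF2_ITERATIONS) -> bytes:
    """Stretch the passphrase into a 32-byte key that never leaves the device."""
    return hashlib.pbkdf2_hmac(
        "sha256", passphrase.encode("utf-8"), salt, iterations, dklen=32
    )


salt = new_backup_salt()
backup_key = derive_backup_key("correct horse battery staple", salt)
```

The server receives only the salt and the encrypted key material. Without the passphrase it cannot reproduce `backup_key`, which is why a lost passphrase makes the backup unrecoverable.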

Implementing E2EE for Messages: The Steps in Practice

A minimal but correct E2EE messaging implementation requires the following steps, each of which must be implemented without shortcuts.

- Each user generates a long-term identity key pair (Curve25519 or Ed25519) on their device during account creation. The public key is uploaded to the server. The private key never leaves the device.

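- Each user also generates a signed prekey, a medium-term key pair whose public half is signed with the identity key, and a set of one-time prekeys, short-term key pairs that are consumed one per session establishment. The signed prekey, its signature, and the public one-time prekeys are uploaded to the server. The private halves are stored locally.

- When user A wants to send the first message to user B, user A fetches user B’s public identity key, signed prekey, and one of their one-time prekeys from the server, and verifies the signature on the signed prekey. User A generates an ephemeral key pair and performs the X3DH key agreement to derive a shared secret. The server deletes the one-time prekey it handed out so that it cannot be reused.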

- User A encrypts the message using the shared secret as the initial input to the Double Ratchet Algorithm, producing ciphertext plus a ratchet state. User A sends the ciphertext, their own public identity key, and the ephemeral public key from the X3DH exchange to the server, which relays it to user B.

- User B performs the X3DH key agreement on their side using their private keys and user A’s public keys from the message header, derives the same shared secret, and initialises their Double Ratchet state. User B can now decrypt the message.

- All subsequent messages in the session advance the Double Ratchet, deriving a new encryption key for each message. Because old message keys are deleted after use, an attacker who later compromises a device’s current key material cannot decrypt messages sent earlier in the session.

This sequence is non-trivial to implement correctly from scratch. Teams that do not have dedicated cryptography engineering experience should use an existing implementation of the Signal Protocol rather than building from primitives. The libsignal library maintained by the Signal Foundation is written in Rust with Java, Swift, and TypeScript bindings, and is the implementation the Signal apps use in production.
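
Purely to show how the key agreement in the steps above fits together, and not as something to ship, here is a sketch of the X3DH calculation on both sides. X3DH as specified also uses a signed prekey, so the sketch includes one but skips the signature check, which a real client must not do. The pure-Python X25519 follows the RFC 7748 pseudocode so the example runs without third-party packages; it is not constant-time. The helper names and the `ExampleApp_X3DH` info string are assumptions.

```python
import hashlib
import hmac
import secrets

P = 2**255 - 19                       # Curve25519 field prime
A24 = 121665                          # curve constant from RFC 7748
BASE_POINT = (9).to_bytes(32, "little")


def x25519(scalar: bytes, u_bytes: bytes) -> bytes:
    """X25519 per RFC 7748. Illustration only: NOT constant-time."""
    k = bytearray(scalar)
    k[0] &= 248
    k[31] &= 127
    k[31] |= 64
    k_int = int.from_bytes(k, "little")
    x1 = int.from_bytes(u_bytes, "little") & ((1 << 255) - 1)
    x2, z2, x3, z3, swap = 1, 0, x1, 1, 0
    for t in reversed(range(255)):
        bit = (k_int >> t) & 1
        if swap ^ bit:
            x2, x3, z2, z3 = x3, x2, z3, z2
        swap = bit
        a, b = (x2 + z2) % P, (x2 - z2) % P
        c, d = (x3 + z3) % P, (x3 - z3) % P
        aa, bb = a * a % P, b * b % P
        e = (aa - bb) % P
        da, cb = d * a % P, c * b % P
        x3 = pow(da + cb, 2, P)
        z3 = x1 * pow(da - cb, 2, P) % P
        x2 = aa * bb % P
        z2 = e * (aa + A24 * e) % P
    if swap:
        x2, x3, z2, z3 = x3, x2, z3, z2
    return (x2 * pow(z2, P - 2, P) % P).to_bytes(32, "little")


def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF extract-then-expand (RFC 5869) with SHA-256."""
    prk = hmac.new(salt, ikm, hashlib.sha256).digest()
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def keypair() -> tuple[bytes, bytes]:
    private = secrets.token_bytes(32)
    return private, x25519(private, BASE_POINT)


def x3dh_secret(dh_outputs: list[bytes]) -> bytes:
    # 32 bytes of 0xFF are prepended for X25519, as the X3DH specification describes.
    ikm = b"\xff" * 32 + b"".join(dh_outputs)
    return hkdf_sha256(ikm, salt=b"\x00" * 32, info=b"ExampleApp_X3DH")


# User B's published keys (private halves stay on B's device).
ik_b, IK_B = keypair()      # identity key
spk_b, SPK_B = keypair()    # signed prekey (signature check omitted here)
opk_b, OPK_B = keypair()    # one-time prekey

# User A's identity key and a fresh ephemeral key for this session.
ik_a, IK_A = keypair()
ek_a, EK_A = keypair()

alice_sk = x3dh_secret([
    x25519(ik_a, SPK_B), x25519(ek_a, IK_B),
    x25519(ek_a, SPK_B), x25519(ek_a, OPK_B),
])
bob_sk = x3dh_secret([
    x25519(spk_b, IK_A), x25519(ik_b, EK_A),
    x25519(spk_b, EK_A), x25519(opk_b, EK_A),
])
```

Both sides arrive at the same 32-byte secret without it ever crossing the network, and that secret seeds the Double Ratchet. In production the Diffie-Hellman and KDF calls would come from libsignal or another audited library.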

E2EE Beyond Messaging: Files, Documents, and Sync

Messaging is the most common E2EE use case but not the only one. Document management, file sync, and collaborative editing all have E2EE variants that handle a different set of design challenges.

In a messaging system, each conversation has a small number of participants whose keys are known at session establishment time. In a document system, a file may need to be shared with a variable set of users who may join and leave over time, and the same file may need to be accessible from multiple devices belonging to the same user. The key management problem is more complex.

The standard approach for encrypted file storage is to encrypt each file with a randomly generated symmetric key, called the content encryption key or CEK, and then encrypt the CEK separately for each authorised user using their public key. The server stores the encrypted file content and the encrypted CEK for each user. No user’s private key touches the server. When a new user is granted access to a file, the sharing user decrypts the CEK with their own private key, re-encrypts it with the new user’s public key, and uploads the new encrypted CEK. When a user’s access is revoked, their encrypted CEK is deleted and the file is re-encrypted with a new CEK that is distributed only to the remaining authorised users.

| Operation | How It Works Without Exposing Keys to the Server |
|---|---|
| File upload | Client generates a random CEK, encrypts file content with CEK, encrypts CEK with owner’s public key, uploads both ciphertexts |
| File download | Client fetches encrypted CEK and encrypted file, decrypts CEK with own private key, decrypts file content with CEK |
| Share with another user | Sharer decrypts CEK locally, re-encrypts it with recipient’s public key, uploads new encrypted CEK for recipient |
| Revoke access | Delete revoked user’s encrypted CEK, re-encrypt file with new CEK, distribute new encrypted CEK to remaining users |

Proton Drive and Tresorit are the most mature examples of this pattern in consumer products. Both publish technical documentation of their encryption architecture that is worth reviewing. Proton’s security model documentation covers the key hierarchy, the sharing mechanism, and the server-side data model in enough detail to inform the design of a similar system without copying their specific implementation.
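
The bookkeeping behind the upload, share, and revoke operations described in this section can be sketched as client-side code like the following. The cryptographic operations are deliberately left as a hypothetical `ClientCrypto` interface, on the assumption that a real client fills them in with an AEAD cipher and public-key encryption from a vetted library; every name here is illustrative and does not describe how any particular product implements the pattern.

```python
import secrets
from dataclasses import dataclass, field
from typing import Protocol


class ClientCrypto(Protocol):
    """Hypothetical hooks; a real client backs these with a vetted library."""

    def encrypt(self, cek: bytes, plaintext: bytes) -> bytes: ...
    def decrypt(self, cek: bytes, ciphertext: bytes) -> bytes: ...
    # wrap needs only the user's public key; unwrap needs the local private key.
    def wrap(self, user_id: str, cek: bytes) -> bytes: ...
    def unwrap(self, user_id: str, wrapped: bytes) -> bytes: ...


@dataclass
class StoredFile:
    """Everything the server holds for one file: ciphertext only."""
    ciphertext: bytes
    wrapped_ceks: dict[str, bytes] = field(default_factory=dict)


def upload(owner: str, plaintext: bytes, crypto: ClientCrypto) -> StoredFile:
    cek = secrets.token_bytes(32)  # fresh random CEK for this file
    return StoredFile(crypto.encrypt(cek, plaintext), {owner: crypto.wrap(owner, cek)})


def share(stored: StoredFile, sharer: str, recipient: str, crypto: ClientCrypto) -> None:
    cek = crypto.unwrap(sharer, stored.wrapped_ceks[sharer])
    stored.wrapped_ceks[recipient] = crypto.wrap(recipient, cek)


def revoke(stored: StoredFile, actor: str, revoked: str, crypto: ClientCrypto) -> None:
    old_cek = crypto.unwrap(actor, stored.wrapped_ceks[actor])
    plaintext = crypto.decrypt(old_cek, stored.ciphertext)
    remaining = [user for user in stored.wrapped_ceks if user != revoked]
    new_cek = secrets.token_bytes(32)  # the revoked user may have kept the old CEK
    stored.ciphertext = crypto.encrypt(new_cek, plaintext)
    stored.wrapped_ceks = {user: crypto.wrap(user, new_cek) for user in remaining}
```

Re-keying on revocation protects future reads of the stored file. It cannot recall a copy of the plaintext that the revoked user already downloaded.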

Common Implementation Mistakes and How to Avoid Them

E2EE implementations fail in predictable ways. The following mistakes appear repeatedly across security audits of applications that claim end-to-end encryption.

Encrypting Content but Leaving Metadata Exposed

Message content encrypted with a strong E2EE protocol still leaks information if message metadata is transmitted in plaintext. Knowing that user A sent 47 messages to user B between 11 pm and 2 am on a specific date is meaningful even without knowing the content of those messages. A complete E2EE implementation considers which metadata fields need protection and uses techniques such as sealed sender (hiding the message origin from the relay server) or padding (obscuring message length patterns) where the metadata sensitivity justifies the overhead.
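
As one illustration of the padding technique, the sketch below pads each plaintext to a multiple of a fixed bucket size before encryption, so that ciphertext length reveals only the bucket and not the exact message length. The 256-byte bucket and the function names are assumptions; the scheme shown is the common marker-byte-then-zeros padding.

```python
def pad_to_bucket(message: bytes, bucket: int = 256) -> bytes:
    """Append 0x80 and then zero bytes so the length is a multiple of `bucket`."""
    padded_len = ((len(message) + 1 + bucket - 1) // bucket) * bucket
    return message + b"\x80" + b"\x00" * (padded_len - len(message) - 1)


def unpad(padded: bytes) -> bytes:
    """Strip the zero bytes and the 0x80 marker added by pad_to_bucket."""
    stripped = padded.rstrip(b"\x00")
    if not stripped.endswith(b"\x80"):
        raise ValueError("invalid padding")
    return stripped[:-1]


short = pad_to_bucket(b"ok")
longer = pad_to_bucket(b"see you at the usual place at nine")
```

Here `short` and `longer` have the same length, so an observer of the ciphertext cannot tell them apart by size. Larger buckets hide more at the cost of more bandwidth.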

Reusing Nonces with Symmetric Encryption

AES-GCM requires a unique nonce for every encryption operation using the same key. Reusing a nonce with the same key under AES-GCM is catastrophic: both messages are encrypted with the same keystream, so XORing the two ciphertexts reveals the XOR of the two plaintexts, and the attacker can recover the authentication key and forge ciphertexts. The correct practice is either to generate 96-bit nonces randomly using a CSPRNG and rotate the key well before collisions become likely, or to use a per-key counter that can never repeat. Either way, nonce uniqueness is a system-level constraint that must be enforced, not a suggestion. The Double Ratchet Algorithm sidesteps the problem by deriving a new key for each message, but implementations that roll their own symmetric encryption layer frequently get nonce management wrong.
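
One way to enforce uniqueness is to make the nonce source a stateful object tied to a single key. Random nonces are one option; the sketch below shows the other common one, a per-key counter, which guarantees uniqueness by construction as long as the counter is never reset while the key is in use. The class name and the 4-byte prefix plus 8-byte counter layout are assumptions for illustration.

```python
import secrets


class NonceSequence:
    """Issue unique 96-bit nonces for ONE key: 4 random bytes + 64-bit counter."""

    def __init__(self) -> None:
        self._prefix = secrets.token_bytes(4)
        self._counter = 0

    def next(self) -> bytes:
        if self._counter >= 2**64:
            # Never wrap around: a repeated nonce is worse than a failed send.
            raise OverflowError("nonce space exhausted; rotate the key")
        nonce = self._prefix + self._counter.to_bytes(8, "big")
        self._counter += 1
        return nonce


sequence = NonceSequence()
first_nonce = sequence.next()
second_nonce = sequence.next()
```

If the counter state can be lost, for example when a device is restored from a backup, the safe response is to generate a new key rather than to restart the counter under the old one.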

Not Verifying Key Authenticity

Diffie-Hellman key exchange is vulnerable to man-in-the-middle attacks if the authenticity of the public keys exchanged is not verified. If the server can substitute its own public key for user B’s public key when user A fetches it, user A establishes a session with the server rather than with user B. The solution is a key verification mechanism: Signal’s safety numbers, WhatsApp’s security codes, and PGP’s web of trust are all approaches to this problem. For consumer applications, the UX challenge is getting users to perform key verification without treating it as an optional step that nobody does.
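
The idea behind a comparable fingerprint can be sketched in a few lines: both parties hash the same pair of identity keys in a canonical order and compare the result over a channel the server does not control. This is a simplified illustration under assumed names, not the format that Signal or WhatsApp actually use, and it assumes fixed-length 32-byte public keys.

```python
import hashlib


def fingerprint(public_key_a: bytes, public_key_b: bytes, digits: int = 30) -> str:
    """Derive a short numeric code that both parties compute identically."""
    # Sorting makes the result independent of who is "A" and who is "B".
    first, second = sorted([public_key_a, public_key_b])
    digest = hashlib.sha256(b"example-fingerprint-v1" + first + second).digest()
    number = int.from_bytes(digest, "big") % (10 ** digits)
    text = str(number).zfill(digits)
    return " ".join(text[i:i + 5] for i in range(0, digits, 5))


# Hypothetical key bytes standing in for real identity public keys.
alice_key = bytes(range(32))
bob_key = bytes(range(32, 64))
code = fingerprint(alice_key, bob_key)
```

If the server substitutes its own key for either party, the two devices compute different codes, and the mismatch is visible when the users compare them in person or over another channel.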

Storing Plaintext in Logs or Analytics

A system that correctly encrypts all user data and then logs decrypted content to a centralised logging platform, sends decrypted message previews to a push notification service, or passes cleartext through an analytics pipeline has defeated its own E2EE implementation at the application layer. Every data flow that touches user content must be audited to verify that plaintext does not exit the encryption boundary through an ancillary system. Push notifications are a particularly common leak vector: a push notification that carries the message preview text sends that preview through Apple’s or Google’s push infrastructure in plaintext, regardless of how strong the E2EE implementation is for the message transport itself.
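
For the push notification case, one defensive pattern is an allowlist: the code that builds the payload copies only fields known to be free of content and drops everything else. The sketch below assumes hypothetical field names (`message_id`, `preview`) and a generic payload shape, not the format of any specific push provider.

```python
import json

# Hypothetical allowlist: only opaque identifiers may reach the push provider.
ALLOWED_PUSH_FIELDS = {"message_id"}


def build_push_payload(event: dict) -> dict:
    """Build a push payload that carries no message content."""
    data = {key: value for key, value in event.items() if key in ALLOWED_PUSH_FIELDS}
    # The visible text is generic; the client fetches and decrypts the real
    # message locally after the notification arrives.
    return {"title": "New message", "data": data}


payload = build_push_payload({"message_id": "m-123", "preview": "meet at noon"})
serialized = json.dumps(payload)
```

An allowlist fails safe: a field added to the event later is dropped by default instead of leaking until someone notices.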

Regulatory Considerations for E2EE Applications

End-to-end encryption creates a specific regulatory tension in several jurisdictions. Regulators in the EU, UK, India, and Australia have at various times proposed or enacted rules that would oblige messaging platforms to provide access to message content for law enforcement purposes, which is technically incompatible with strong E2EE. Engineers building E2EE applications need to be aware of the regulatory environment in the markets they operate in and should work with legal counsel on whether client-side scanning requirements, key escrow mandates, or other technical measures apply to their product category.

For business applications handling employee communications or customer data, E2EE implementation also interacts with data retention requirements. A GDPR right-to-erasure request requires that all personal data belonging to a data subject is deleted. In an E2EE system where the server holds ciphertext but not keys, fulfilling an erasure request can be achieved by deleting the encrypted content and destroying the key material, which renders the content permanently unreadable. This approach, called cryptographic erasure, is often treated as equivalent to deletion, but acceptance varies, so legal confirmation for the specific jurisdiction is necessary before relying on it.

Testing an E2EE Implementation

Standard application testing approaches are insufficient for E2EE implementations. A test that verifies a message is sent and received correctly does not verify that the server cannot decrypt it. Testing the encryption boundary requires specific approaches.

The most direct test for server-side opacity is an adversarial database inspection test: after a message is sent through the system, inspect the raw data stored in the database and any logs generated during the request. If any plaintext content from the message appears anywhere in those stores, the E2EE boundary has a leak. This test should be part of the automated test suite and should run on every build, not just during security reviews.
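
A minimal version of such a check, assuming for illustration that the backend stores data in SQLite, sends a message containing a unique canary string and then scans every table and column for it. The function name and the schema in the usage note are hypothetical; a real suite would run the same scan against the production database engine and the log output.

```python
import sqlite3


def find_plaintext_leaks(conn: sqlite3.Connection, canary: bytes) -> list[tuple[str, str]]:
    """Return the (table, column) pairs whose stored values contain the canary."""
    leaks = set()
    tables = [row[0] for row in conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table'")]
    for table in tables:
        cursor = conn.execute(f'SELECT * FROM "{table}"')
        columns = [description[0] for description in cursor.description]
        for row in cursor:
            for column, value in zip(columns, row):
                if isinstance(value, str):
                    value = value.encode("utf-8")
                if isinstance(value, bytes) and canary in value:
                    leaks.add((table, column))
    return sorted(leaks)
```

In a test, the client would send a message containing something like `canary-7f3a` through the full stack, and the assertion would be that `find_plaintext_leaks(conn, b"canary-7f3a")` returns an empty list. A non-empty result names the table and column where plaintext escaped.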

Formal cryptographic audits by specialist security firms are appropriate before launching an E2EE application that handles sensitive data. The audit should cover the key generation and storage implementation, the protocol implementation against the specification, the network traffic under a proxy to verify no unexpected plaintext is transmitted, and the backup and recovery flow. NCC Group, Trail of Bits, and Cure53 are among the firms that have published E2EE audit reports, and reviewing their past public reports on similar applications gives a useful picture of what reviewers look for. Askan’s security engineering practice includes architecture review for E2EE implementations as part of pre-launch security assessments for teams that need specialist input before releasing a security-critical product.

Most popular pages

From Prototype to Production: The Engineering Checklist That Actually Matters

Prototypes lie. They perform well in demos because they are not doing any of the work that production systems actually do. There is no...

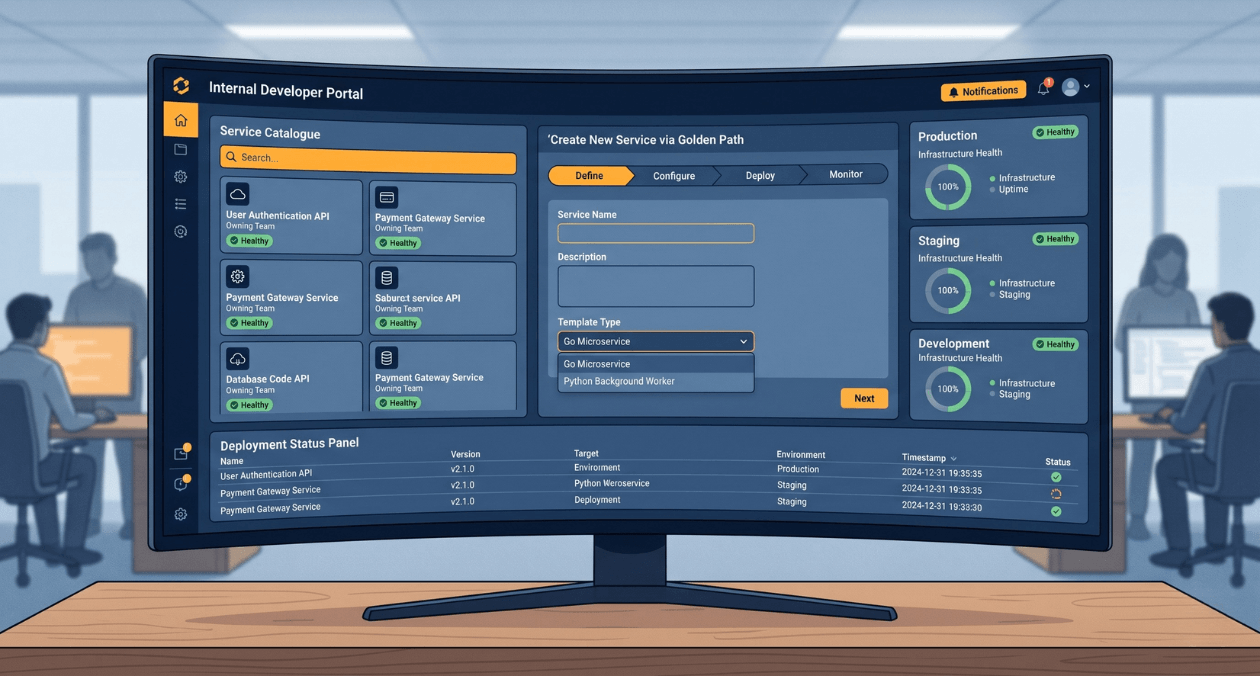

Building a Developer Experience (DX) Platform: From Golden Paths to Self-Service Infrastructure

There is a measurement problem at the heart of platform engineering. The people who benefit most from a well-built internal developer platform are often...

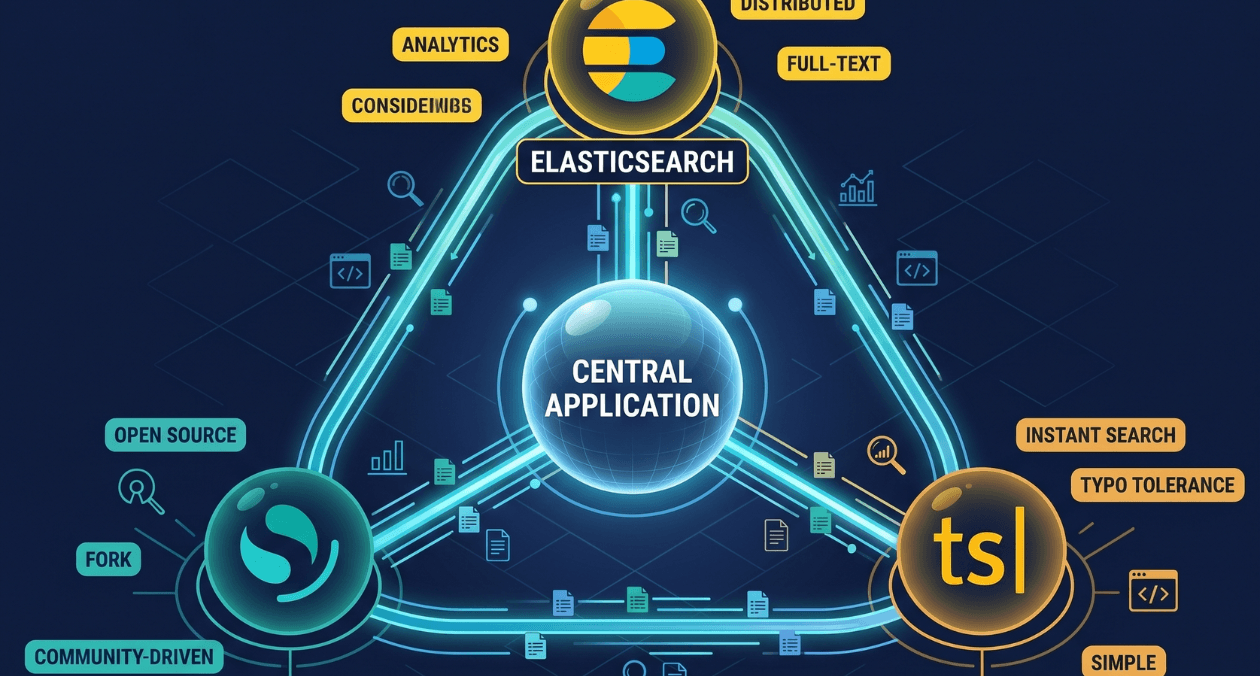

Search Infrastructure for Applications: Elasticsearch vs OpenSearch vs Typesense

Search is one of those features that seems straightforward until you try to build it properly. A basic LIKE query handles small datasets. The...