TABLE OF CONTENTS

Infrastructure as Code Maturity: From Basic Terraform to Policy-Driven Automation

Most engineering teams that have adopted infrastructure as code are not where they think they are. They have Terraform files in a repository. They can provision a VPC and a handful of EC2 instances without clicking through a console. The infrastructure looks like code in the sense that it lives in a .tf file, but it behaves like a shared Google Doc: everyone edits it directly, the state file is stored locally on someone’s laptop, and a plan that has not been reviewed in four months is about to be applied to production by an engineer who joined three weeks ago.

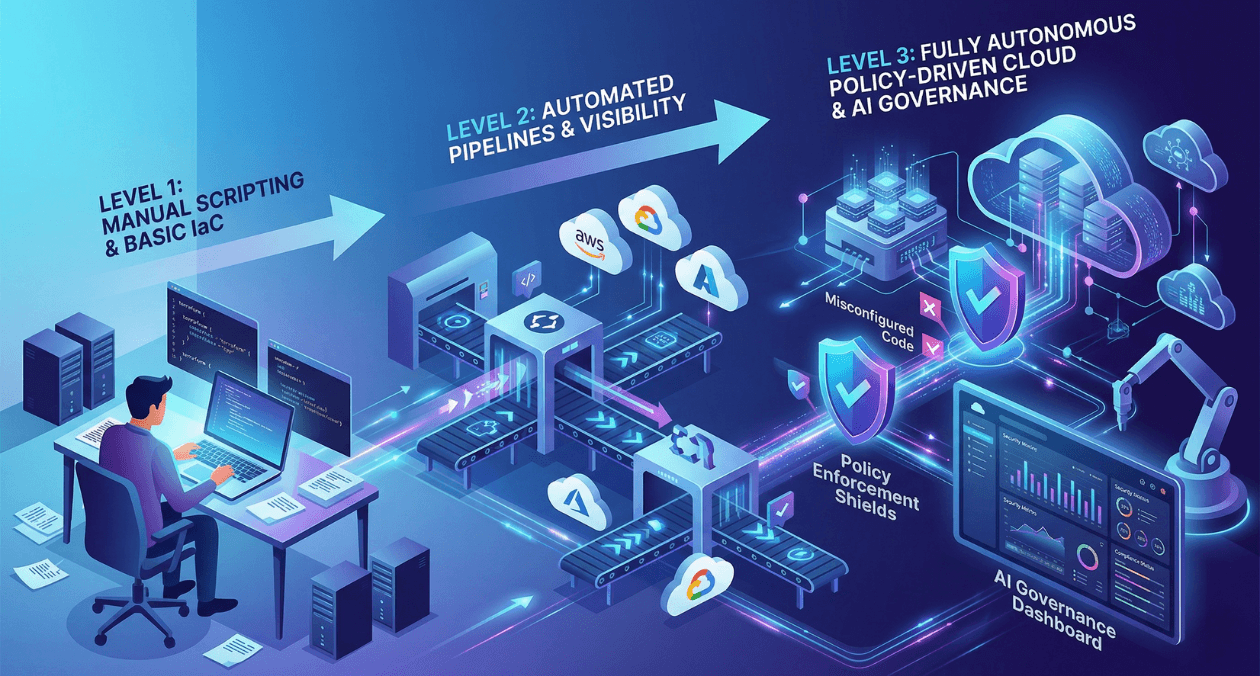

Infrastructure as code is not a binary condition. It is a maturity spectrum with clearly distinguishable levels, each of which delivers a different category of operational benefit and carries a different category of risk. Teams that understand where they actually are on that spectrum, rather than where they assume they are, can make targeted investments that move them forward without the disruption of attempting to jump too many levels at once.

This article maps the IaC maturity spectrum from initial adoption through policy-driven automation, covering what distinguishes each level, the specific practices and tooling that characterise it, the failure modes that indicate a team has plateaued below where it needs to be, and the concrete steps that move a team from one level to the next. Terraform is used as the primary reference implementation throughout because it remains the dominant IaC tool across cloud providers, but the framework applies equally to Pulumi, AWS CDK, and OpenTofu.

The Five Levels of IaC Maturity

The maturity model presented here is not prescriptive in the sense that every team must reach Level 5. The appropriate target level depends on the organisation’s scale, its compliance requirements, and the size of its platform engineering function. What is important is being honest about which level a team actually operates at, because the failure modes at each level are specific and the remediation is specific to the level, not generic.

| Maturity Level | Defining Characteristic |

|---|---|

| Level 1: Manual Provisioning | Infrastructure is created and modified through cloud consoles, CLI commands, or runbooks. No version history of infrastructure state exists. |

| Level 2: Basic IaC Scripts | Terraform or equivalent files exist and are used to provision infrastructure, but state is managed inconsistently, modules are not used, and applies happen locally from developer machines. |

| Level 3: Modular and Version-Controlled | Infrastructure is organised into reusable modules, stored in version control with branching discipline, and state is stored remotely in a shared backend with locking. |

| Level 4: Pipeline-Driven Automation | All infrastructure changes flow through a CI/CD pipeline. Plans are reviewed as part of pull requests. Applies are automated on merge and never run from local machines. |

| Level 5: Policy-Driven Self-Service | Security and compliance policies are encoded as code and enforced automatically in the pipeline. Application teams can provision standard infrastructure through self-service without involving the platform team for every request. |

Most teams that believe they are operating at Level 3 are actually at Level 2 with some Level 3 practices mixed in. The clearest diagnostic question is: when was the last time a production infrastructure change was applied from a developer’s local machine without going through a pull request? If the answer is recent, the team is at Level 2 regardless of how many modules exist in the repository.

Level 1 to Level 2: Writing Infrastructure as Code for the First Time

The transition from manual provisioning to basic IaC is the step where most teams begin, and it is also the step where foundational habits are either established or missed. A team that starts writing Terraform without addressing state management, provider versioning, and basic directory structure will accumulate technical debt in the IaC layer that is just as painful to unwind as application-level technical debt.

State Management from Day One

Terraform state is the file that maps your declared resources to the real infrastructure they represent in the cloud provider. By default, Terraform stores this file locally as terraform.tfstate. Local state is incompatible with team collaboration because it cannot be shared safely: two engineers applying changes simultaneously will corrupt the state, and the state file living on a single laptop creates a single point of failure that makes disaster recovery interesting in the wrong way.

Configuring a remote backend is the first non-negotiable practice of Level 2. AWS S3 with a DynamoDB lock table, Google Cloud Storage, or Terraform Cloud all serve as remote backends. The backend configuration takes fewer than ten lines of HCL and takes under an hour to set up correctly. Every hour spent not doing this is borrowed time against the moment when local state causes a production incident.

Provider and Module Version Pinning

Terraform provider versions change regularly and occasionally include breaking changes. A configuration that runs correctly with the AWS provider at version 5.20 may fail with version 5.30 if a resource argument was renamed or a default behaviour was changed. Pinning provider versions in a required providers block and committing the .terraform.lock.hcl file to version control ensures that every team member and every CI pipeline uses exactly the same provider versions. Unpinned providers are a source of the class of failure where infrastructure applies work on one machine and fail on another for no apparent reason.

Level 2 to Level 3: Modules, Structure, and Remote State

The transition from basic IaC scripts to a modular, well-structured codebase is where most teams spend the longest time and where the investment pays the most consistent dividends. A Level 2 codebase is typically a flat directory of .tf files where every resource is declared at the root level. Adding a new environment means copying the entire directory and changing a handful of values, which creates N nearly-identical copies of the same configuration that diverge over time as changes are applied to some copies and not others.

Building Reusable Modules

A Terraform module is a directory containing a set of .tf files that accept input variables and produce output values. A well-designed module encapsulates a logical unit of infrastructure, such as a VPC with its subnets and routing tables, an ECS cluster with its IAM roles and security groups, or a PostgreSQL RDS instance with its parameter group and subnet group, and exposes only the inputs that should vary between uses.

The module design principle that prevents the most future pain is: a module should have a small, stable interface. Every input variable you add to a module is a contract that callers depend on. Adding variables is easy and backwards-compatible. Removing or renaming variables requires updating every caller. Design modules around the decisions that genuinely vary between environments and hardcode or default the decisions that should be consistent everywhere.

Directory Structure for Multi-Environment Management

A structure that scales well for most organisations separates the codebase into three layers: a modules directory containing reusable modules, a live directory containing environment-specific configurations that call those modules, and a global directory containing account-level resources that exist once regardless of environment. Within the live directory, each environment (dev, staging, production) has its own subdirectory with its own remote state, ensuring that a change to the dev environment has no possible effect on the production state.

| Directory Layer | What Lives Here |

|---|---|

| modules/ | Reusable module definitions: vpc, ecs-cluster, rds-postgres, s3-bucket, etc. |

| live/dev/ | Dev environment root configurations that call modules with dev-specific variable values |

| live/staging/ | Staging environment root configurations with staging-specific values |

| live/production/ | Production environment root configurations; requires extra access controls |

| global/ | Account-level resources: IAM roles, DNS zones, ECR repositories, S3 backend buckets |

Terragrunt for DRY Configuration

Teams that manage more than two or three environments often reach a point where the boilerplate in each environment’s configuration, the backend configuration, the provider configuration, and the module version pin, is repeated verbatim across every environment directory. Terragrunt is a thin wrapper around Terraform that provides inheritance for common configuration blocks, allowing a single root terragrunt.hcl to define the backend configuration pattern and provider settings that all child configurations inherit. This eliminates the copy-paste maintenance burden without changing how Terraform itself works.

Want a structured review of your current IaC setup and a roadmap to the next maturity level?

Level 3 to Level 4: Automating Infrastructure Through CI/CD

The gap between Level 3 and Level 4 is the gap between infrastructure as code as a documentation tool and infrastructure as code as a delivery mechanism. A Level 3 team has good modules and good structure, but changes still flow through individual engineers running terraform apply from their own terminals. This means that the actual infrastructure state depends on which engineer ran the last apply, from which directory, with which local environment variables set, and whether they ran a plan before applying.

Level 4 eliminates this variability by making the CI/CD pipeline the only path through which infrastructure changes reach any environment. An engineer opens a pull request with a configuration change, the pipeline runs terraform plan and posts the output as a PR comment, a reviewer approves the plan output alongside the code, and the merge triggers terraform apply in an automated job that runs with consistent credentials and a consistent working directory.

Designing the Terraform CI Pipeline

A production-ready Terraform CI pipeline has four stages that run in sequence on every pull request.

- Format and validate: terraform fmt -check confirms that all files are consistently formatted, and terraform validate confirms that the configuration is syntactically valid and internally consistent without making any API calls.

- Security and policy scan: A tool like Checkov, tfsec, or Trivy scans the configuration for common security misconfigurations such as publicly accessible S3 buckets, overly permissive security groups, and unencrypted storage volumes. Findings above the configured severity threshold fail the pipeline.

- Plan: terraform plan runs against the target environment and produces the proposed change set. The output is posted as a pull request comment so reviewers can see exactly which resources will be created, modified, or destroyed before approving the merge.

- Apply: On merge to the main branch, terraform apply -auto-approve runs using the same plan output generated in stage three. This prevents the plan-apply drift that occurs when apply is run with a fresh plan that may differ from the reviewed plan.

Credentials Management in the Pipeline

The CI pipeline needs credentials to interact with your cloud provider. Storing long-lived access keys as CI secrets is the anti-pattern that security teams consistently flag in IaC pipeline reviews. The correct approach is OIDC federation, where the CI provider (GitHub Actions, GitLab CI, or similar) authenticates to the cloud provider using a short-lived token issued by the CI platform’s OIDC endpoint. The cloud provider trusts tokens from that OIDC endpoint for a specific role, and the pipeline assumes that role without any long-lived secret being stored anywhere.

GitHub Actions with AWS, for example, uses the aws-actions/configure-aws-credentials action with the role-to-assume parameter set to a role ARN. The role’s trust policy allows assumption by the GitHub OIDC provider for tokens that match specific conditions such as the repository name and the branch. The pipeline never touches an access key. This configuration takes under an hour to set up and eliminates an entire category of credential exposure risk.

Terraform Cloud and Atlantis as Managed Alternatives

Building and maintaining a Terraform CI pipeline from scratch requires ongoing investment. Two managed alternatives reduce that investment significantly. Terraform Cloud provides a hosted execution environment with built-in state management, run history, team access controls, and a policy framework called Sentinel. Atlantis is an open-source server that runs inside your own infrastructure, listens for pull request webhooks, and orchestrates plan and apply workflows automatically. Both are mature options and the choice between them is primarily a question of whether you prefer a managed service or self-hosted control over the execution environment.

Terraform vs Pulumi: Choosing the Right Tool for Your Team

Terraform’s HCL configuration language is purpose-built for describing infrastructure and is familiar to most infrastructure engineers. Its main limitation is that HCL is not a general-purpose programming language: complex conditional logic, dynamic resource generation based on external data sources, and testing are all more awkward in HCL than they would be in a real programming language.

Pulumi uses actual programming languages, TypeScript, Python, Go, and C# among others, to define infrastructure. This means engineers who are comfortable in those languages can apply all the abstractions, testing frameworks, and tooling they already use to their infrastructure code. A Pulumi stack that dynamically provisions different infrastructure configurations based on the output of an API call is straightforward to write in TypeScript and genuinely awkward to write in HCL.

| Criterion | Terraform / OpenTofu | Pulumi |

|---|---|---|

| Configuration language | HCL (purpose-built, readable) | TypeScript, Python, Go, C# (general purpose) |

| Learning curve for infra engineers | Lower (HCL is simple) | Higher (requires language proficiency) |

| Learning curve for app developers | Higher (new language) | Lower (uses familiar languages) |

| Testing support | Limited (terratest, check blocks) | Strong (native unit and integration test frameworks) |

| State management | Remote backend required | Pulumi Cloud or self-managed backend |

| Provider ecosystem | Very large (Terraform Registry) | Growing (shares Terraform providers via bridge) |

OpenTofu, the open-source fork of Terraform maintained by the Linux Foundation after HashiCorp’s licence change, is a drop-in replacement for Terraform that uses the same HCL syntax, the same provider ecosystem, and the same state file format. Teams concerned about Terraform’s BSL licence or seeking a community-governed alternative can migrate to OpenTofu without changing any configuration files.

Want a structured review of your current IaC setup and a roadmap to the next maturity level?

Level 4 to Level 5: Policy as Code and Self-Service Infrastructure

Level 5 is where infrastructure as code transitions from a tool that platform engineers use to provision infrastructure into a platform that application engineers use to provision their own infrastructure within guardrails that the platform team defines. This transition is the difference between a platform team that is a bottleneck for every new piece of infrastructure and a platform team that multiplies the productivity of the entire engineering organisation.

Two capabilities enable this transition: policy as code that enforces standards automatically without requiring manual review of every change, and a self-service catalogue that exposes pre-approved infrastructure patterns as simple interfaces that application teams can use without deep Terraform knowledge.

Policy as Code with OPA and Sentinel

Open Policy Agent (OPA) with Conftest and HashiCorp Sentinel are the two dominant policy-as-code implementations for Terraform pipelines. Both work by evaluating Terraform plan output against a set of rules before apply is allowed to proceed.

An OPA policy that prevents any S3 bucket from being created with public access enabled is a dozen lines of Rego. A policy that requires all EC2 instances to use only approved AMIs and to be tagged with specific mandatory tags is equally concise. These policies run in the CI pipeline automatically on every plan output, which means a developer who accidentally writes a configuration that would create a publicly accessible database gets immediate feedback in their pull request rather than a security review comment three days later.

| Policy Tool | How It Integrates with Terraform |

|---|---|

| OPA with Conftest | Reads the JSON output of terraform show -json and evaluates Rego policies against the planned resource graph; open-source and self-hosted |

| HashiCorp Sentinel | Integrated natively into Terraform Cloud and Terraform Enterprise; policies are written in Sentinel’s own policy language and enforced as a pipeline gate before every apply |

| Checkov | Scans Terraform source files and plan output for security misconfigurations against a library of built-in checks; fastest to get started, less flexible for custom organisational policies |

The Internal Developer Platform for Infrastructure

Self-service infrastructure at Level 5 means application teams can provision a new service’s full infrastructure, including compute, database, networking, and IAM roles, by filling out a form or opening a pull request to a service catalogue repository, without requiring a platform engineer to write or review any Terraform code for that specific request.

The implementation typically involves a catalogue of pre-approved infrastructure templates, each representing a common pattern such as a containerised web service with an RDS database, a background worker with an SQS queue, or a static site with a CloudFront distribution. Application teams select a template, provide values for the parameters that vary between services (service name, instance size, database engine version), and submit. The platform team’s policies enforce that the resulting Terraform configuration meets all security and compliance requirements before the infrastructure is provisioned.

Tools like Backstage (the CNCF developer portal framework), Port, and Cortex provide the catalogue front-end that surfaces these templates to application teams without requiring them to understand the underlying Terraform. Backstage’s software templates feature generates a pull request to the infrastructure repository with the parameterised configuration already populated, which then flows through the standard Level 4 pipeline with all policy checks applied. The Backstage documentation on software templates covers the template authoring model in detail and is the most practical starting point for teams building a self-service infrastructure catalogue.

Testing Infrastructure Code

Testing infrastructure code is meaningfully different from testing application code and is also more often skipped. The argument that infrastructure testing is unnecessary because you can see the results in the cloud console is approximately as convincing as arguing that application testing is unnecessary because you can run the application and click around. Infrastructure code has logic, it has conditional branches, it has variable inputs that produce different outputs, and it has failure modes that are embarrassing to discover in production.

Unit Testing with Terraform Check Blocks and Terraform Test

Terraform 1.6 introduced the terraform test command, which allows you to write test cases in .tftest.hcl files that apply a configuration to a temporary environment, assert conditions on the outputs and resource attributes, and then destroy the environment. This is the most direct form of infrastructure unit testing and is appropriate for validating that a module produces the expected resource configuration for a given set of inputs.

The check block, available since Terraform 1.5, allows you to define assertions within a configuration that are evaluated after every apply. A check block that verifies an HTTP endpoint returns a 200 status code after the load balancer is provisioned, or that a database connection can be established after the RDS instance is created, acts as a smoke test that runs automatically on every apply without requiring a separate test framework.

Integration Testing with Terratest

Terratest is a Go library that provisions real infrastructure in a real cloud environment, runs assertions against the provisioned resources using cloud SDK calls, and then tears down the infrastructure when the test completes. It is the most thorough form of infrastructure testing and also the most expensive in both execution time and cloud spend. Terratest is appropriate for validating complex modules where the interaction between resources needs to be verified against actual cloud behaviour rather than inferred from the plan output. The Terratest documentation provides comprehensive examples for testing Terraform modules across AWS, GCP, and Azure.

Drift Detection and Remediation in a Mature IaC Practice

Drift occurs when the actual state of infrastructure diverges from the state declared in Terraform configuration. The most common sources of drift are manual changes made through the cloud console during incidents, automated actions by cloud services that modify resource attributes, and changes made through other tooling that does not update the Terraform state.

In a Level 4 or Level 5 practice, drift should be rare because the pipeline is the only authorised path for infrastructure changes. But rare is not never, and detecting drift when it occurs is important for maintaining the integrity of the configuration as a reliable representation of reality. Terraform’s drifted resources feature, available in Terraform Cloud and in the output of terraform plan when the -refresh-only flag is used, identifies resources whose real attributes differ from the last known state.

Running a scheduled drift detection job that executes terraform plan -refresh-only across all environments and alerts on any detected drift gives the platform team visibility into unauthorised changes without waiting for the next intentional apply to surface them. A drift alert that fires within minutes of a manual console change creates a tight feedback loop that reinforces the practice of making all changes through the pipeline rather than eroding it gradually through accumulated exceptions.

Diagnosing Where Your Team Actually Is

The most useful output of a maturity framework is a set of diagnostic questions that produce honest answers without requiring a formal audit. The following questions map to specific level boundaries and are designed to surface the practices that are most commonly absent from teams that believe they are further along the maturity spectrum than they are.

| Diagnostic Question | What the Answer Reveals |

|---|---|

| Is all Terraform state stored in a remote backend with locking enabled? | No: the team is at Level 1 regardless of anything else |

| Are all provider versions pinned and is the lock file committed to version control? | No: the team is at Level 2 with fragile reproducibility |

| Are all production infrastructure changes made through a pull request reviewed by at least one other engineer? | No: the team is at Level 2 even with modules and remote state |

| Does the CI pipeline post a terraform plan output to every pull request before merge? | No: the team is at Level 3; reviewers cannot see what they are approving |

| Are security and compliance policies checked automatically in the pipeline without requiring manual security review? | No: the team is at Level 4 but relying on human review for policy compliance |

| Can application teams provision standard infrastructure without opening a ticket to the platform team? | No: the team is at Level 4; self-service is the Level 5 differentiator |

Working through these questions with an engineering leader who is unfamiliar with the day-to-day reality of how infrastructure changes are made frequently surfaces a gap between the official process and the actual practice. Askan’s platform engineering and cloud infrastructure services include IaC maturity assessments as a structured engagement that produces a prioritised roadmap for moving from a team’s current level to the target level, with specific tooling recommendations and implementation support for each step.

Most popular pages

LLM Integration in Production: Latency, Cost, and Reliability Patterns

Integrating a large language model into a production system looked straightforward in 2023. Call the API, parse the response, ship it. Engineers who took...

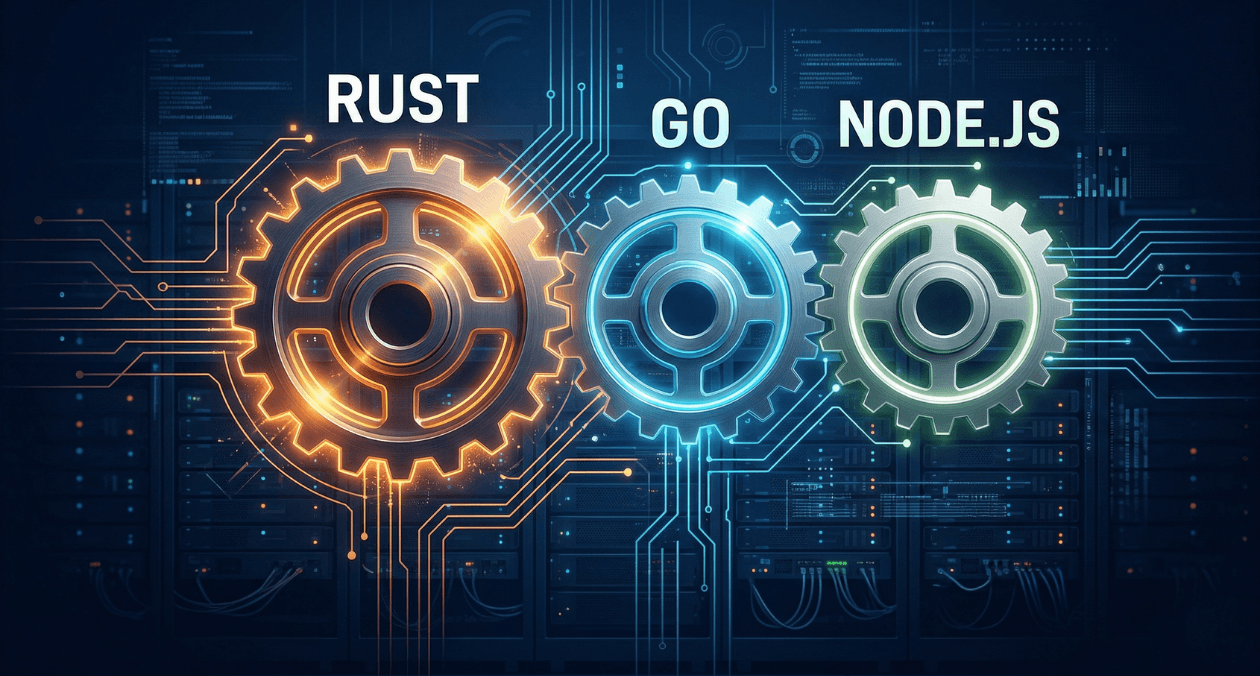

Rust for Backend Services: When to Use It and When to Stick with Go or Node

The backend language debate has never been more alive than it is in 2026. Rust has gone from a systems programming curiosity to a...

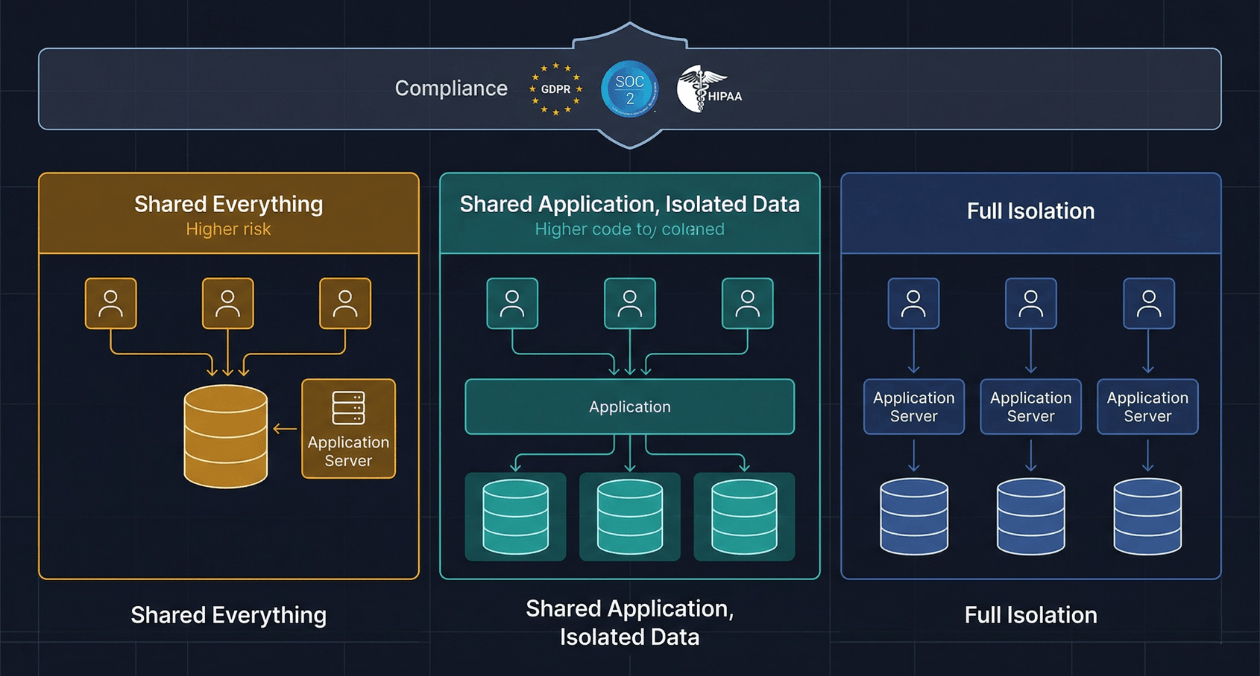

Multi-Tenant SaaS Architecture: Building for Isolation, Scale, and Compliance

Every SaaS product eventually faces the same architectural inflection point. The first version was built for a handful of customers. Data lived in a...