WebAssembly in 2026: Performance, Use Cases and When to Use It in Production

WebAssembly has been in the conversation for nearly a decade, but 2026 is the year more engineering teams are moving it from experimental to production-grade. It is no longer just a curiosity for game developers running Unreal Engine in a browser. Platform teams are using it to run compute-intensive workloads at near-native speed on the client. Backend teams are using it as a portable, sandboxed execution layer. AI inference is running on WASM runtimes outside the browser entirely.

And yet, for most product engineering teams, WebAssembly remains misunderstood. The hype around it has oscillated between overselling it as a JavaScript killer and dismissing it as a niche tool for specialists. Neither framing is useful when you are trying to decide whether it belongs in your architecture.

This guide takes a direct look at what WebAssembly actually is, where it delivers measurable value in 2026, and the conditions under which adopting it makes sense versus when it adds complexity without proportional benefit.

What WebAssembly Is, Precisely

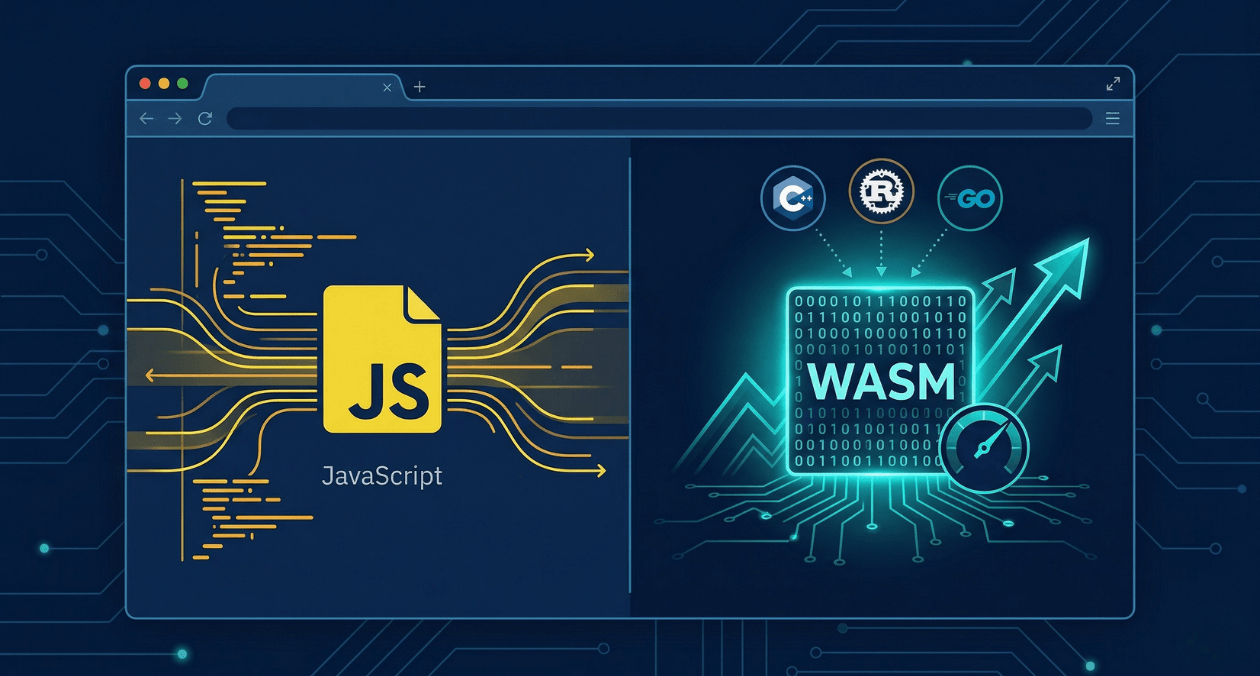

WebAssembly, abbreviated as WASM, is a binary instruction format designed as a compilation target for high-level languages. You write code in Rust, C, C++, Go, or an increasing number of other languages, compile it to the WASM binary format, and execute it in a runtime environment.

That runtime environment can be a browser, where WASM runs alongside JavaScript in a sandboxed virtual machine and reaches the same Web APIs through JavaScript. It can also be a non-browser runtime like Wasmtime or WasmEdge. These runtimes implement WASI (WebAssembly System Interface), the standard that lets WASM run on servers, edge nodes, and embedded devices with controlled access to system resources.

The key properties that make WASM interesting are consistent across all of these environments. It executes in a sandboxed memory model, so a WASM module cannot read or write memory outside its own allocation unless the host explicitly shares it. It is fast and predictable, typically executing within 10 to 20 percent of native performance for compute-heavy workloads. It is portable, meaning the same compiled binary runs on any platform that has a WASM runtime without recompilation.

What it is not: a replacement for JavaScript in general-purpose web development. The two are complementary. JavaScript handles DOM manipulation, event handling, and the orchestration layer of a web application. WASM handles the parts of your application where compute performance is the bottleneck.

How WebAssembly Compares to JavaScript at Runtime

Parsing and Compilation

JavaScript is a text format. The browser downloads it, parses it into an AST, JIT-compiles it, and optimizes it at runtime based on observed patterns. This pipeline is impressively fast for a dynamic language, but it introduces warmup overhead and unpredictable optimization cliffs when the JIT’s assumptions are invalidated.

WebAssembly arrives already compiled to a binary format that is much faster to validate and decode than JavaScript text is to parse. The browser still compiles WASM to native machine code, but the input is already structured as typed, validated bytecode, so the compilation step is significantly faster and more predictable. Because WASM carries explicit type information, the engine does not need to speculate about types, so there are no JIT deoptimization paths to fall off.
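
To make the "typed, validated bytecode" point concrete, here is a minimal sketch that validates and runs a tiny module. The byte array is a hand-assembled illustration (a single exported `add` function), not the output of any particular toolchain, and the variable names are placeholders. It assumes a host that exposes the standard `WebAssembly` JavaScript API, such as a current browser or Node.js.

```typescript
// Hand-assembled module, equivalent to the text format:
//   (module (func (export "add") (param i32 i32) (result i32)
//     local.get 0  local.get 1  i32.add))
const addModuleBytes = new Uint8Array([
  0x00, 0x61, 0x73, 0x6d, // magic number "\0asm"
  0x01, 0x00, 0x00, 0x00, // binary format version 1
  0x01, 0x07, 0x01, 0x60, 0x02, 0x7f, 0x7f, 0x01, 0x7f, // type section: (i32, i32) -> i32
  0x03, 0x02, 0x01, 0x00, // function section: one function using type 0
  0x07, 0x07, 0x01, 0x03, 0x61, 0x64, 0x64, 0x00, 0x00, // export section: "add" = function 0
  0x0a, 0x09, 0x01, 0x07, 0x00, 0x20, 0x00, 0x20, 0x01, 0x6a, 0x0b, // code section
]);

// Validation type-checks the whole module before any of it runs.
const addModuleIsValid: boolean = WebAssembly.validate(addModuleBytes);

// Swap i32.add (0x6a) for f32.add (0x92): the operands are still i32,
// so the module is ill-typed and should be rejected up front.
const illTypedBytes = addModuleBytes.slice();
illTypedBytes[illTypedBytes.length - 2] = 0x92;
const illTypedIsValid: boolean = WebAssembly.validate(illTypedBytes);

// Synchronous constructors keep the sketch short. In a browser, larger
// modules are normally loaded with WebAssembly.instantiateStreaming.
const addInstance = new WebAssembly.Instance(new WebAssembly.Module(addModuleBytes), {});
const add = addInstance.exports.add as unknown as (a: number, b: number) => number;
const sum = add(2, 3);

console.log(addModuleIsValid, illTypedIsValid, sum); // prints: true false 5
```

The ill-typed variant is rejected at validation time rather than failing at some later point during execution, which is the property that makes WASM compilation predictable.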

Execution Speed

For pure computation, WASM is generally faster than JavaScript. The performance gap narrows when JavaScript’s JIT has time to warm up and build optimized profiles, but for cold-start execution or workloads with irregular patterns, WASM holds a clear advantage.

For tasks like image processing, video encoding, cryptography, audio analysis, physics simulation, and compression, WASM routinely delivers two to five times the throughput of equivalent JavaScript implementations. For workloads that are already I/O bound or DOM interaction heavy, the difference is marginal because the bottleneck is not in computation.

Memory Model

JavaScript uses a garbage-collected heap. The GC provides safety and convenience but introduces pause events that can cause latency spikes in latency-sensitive applications. WebAssembly uses linear memory: a flat, contiguous byte array that the module manages manually or through the memory management conventions of its source language. There is no GC by default, which means no GC pauses. For applications where predictable latency matters, this is a meaningful difference.
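
The linear memory model can be observed directly from the host side. The sketch below uses another hand-assembled module (an illustration, not toolchain output) that declares one page of memory and exports a `load` function reading a 32-bit integer at a given address. All names are placeholders, and the standard `WebAssembly` JavaScript API is assumed.

```typescript
// Hand-assembled module, equivalent to the text format:
//   (module
//     (memory (export "memory") 1)
//     (func (export "load") (param i32) (result i32)
//       local.get 0  i32.load))
const memModuleBytes = new Uint8Array([
  0x00, 0x61, 0x73, 0x6d, 0x01, 0x00, 0x00, 0x00, // magic number and version
  0x01, 0x06, 0x01, 0x60, 0x01, 0x7f, 0x01, 0x7f, // type section: (i32) -> i32
  0x03, 0x02, 0x01, 0x00, // function section: one function using type 0
  0x05, 0x03, 0x01, 0x00, 0x01, // memory section: one memory, minimum 1 page
  0x07, 0x11, 0x02, // export section, two entries:
  0x04, 0x6c, 0x6f, 0x61, 0x64, 0x00, 0x00, //   "load" = function 0
  0x06, 0x6d, 0x65, 0x6d, 0x6f, 0x72, 0x79, 0x02, 0x00, //   "memory" = memory 0
  0x0a, 0x09, 0x01, 0x07, 0x00, 0x20, 0x00, 0x28, 0x02, 0x00, 0x0b, // code section
]);

const memInstance = new WebAssembly.Instance(new WebAssembly.Module(memModuleBytes), {});
const wasmMemory = memInstance.exports.memory as unknown as WebAssembly.Memory;
const loadI32 = memInstance.exports.load as unknown as (address: number) => number;

// Linear memory is a flat byte array sized in 64 KiB pages.
const pageBytes = wasmMemory.buffer.byteLength;

// The host writes raw bytes (little-endian); the module reads them back.
new DataView(wasmMemory.buffer).setUint32(8, 1234, true);
const readBack = loadI32(8);

// An address past the end of the module's own memory traps
// instead of reading whatever happens to live next to it.
let outOfBoundsTrapped = false;
try {
  loadI32(pageBytes);
} catch (err) {
  outOfBoundsTrapped = err instanceof WebAssembly.RuntimeError;
}

console.log(pageBytes, readBack, outOfBoundsTrapped); // prints: 65536 1234 true
```

Nothing in this memory is tracked by a garbage collector. Allocation inside the byte array is whatever the source language's runtime or allocator makes of it.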

| Characteristic | JavaScript | WebAssembly |
|---|---|---|
| Format | Text (parsed at runtime) | Binary (pre-validated) |
| Compilation | JIT with deopt paths | Ahead-of-time, predictable |
| Memory | Garbage collected | Linear, manual or RAII |
| Cold start speed | Slower (parsing overhead) | Faster (binary decode) |
| Compute throughput | Good with JIT warmup | Consistently near-native |
| DOM access | Direct | Via JavaScript bridge |

Where WebAssembly Delivers Real Value in 2026

Browser-Based Compute Workloads

The most mature use case for WebAssembly in the browser is offloading heavy computation that would otherwise require a round trip to the server. Image and video processing pipelines, PDF generation and manipulation, audio encoding, document parsing, and cryptographic operations are all well-established WASM use cases.

Figma’s rendering engine is a widely cited example. The vector graphics calculation that drives the canvas is written in C++ and compiled to WASM, which is why Figma can render complex designs in the browser at a frame rate that previously required a native application. Photoshop on the web uses the same approach.

In 2026, AI inference is the fastest-growing browser WASM use case. Running a quantized LLM or an image classification model client-side, without sending user data to a server, is now practical for models in the 50MB to 500MB range. Frameworks like TensorFlow.js and ONNX Runtime Web both provide WASM backends for CPU execution, alongside WebGPU backends for GPU acceleration, for teams building privacy-preserving AI features.

Plugin and Extension Sandboxing

Running untrusted code safely is a persistent problem in platform engineering. WASM’s sandboxed execution model makes it a natural fit for plugin architectures where third-party code needs to run inside your application without access to the host system or other tenants’ data.

Envoy proxy supports WASM in its extension model. An Envoy filter can be implemented as a WASM module, which means filter code written by different teams or vendors runs in isolation from the core proxy process. Fastly Compute uses WASM as the execution environment for edge functions, and Cloudflare Workers can run WASM modules inside its V8 isolates, giving developers near-native performance at the edge in a sandboxed multi-tenant runtime.

For teams building SaaS platforms that need to support customer-authored automation logic or custom business rules, WASM provides a safer alternative to eval-based execution or spinning up separate processes.
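
The sandboxing property comes down to imports: a module can only call what the host hands it at instantiation. The following sketch illustrates this with a hand-assembled "plugin" that imports one function, `host.log`, and exports `run`. The module, the import name, and the variable names are all hypothetical, chosen for illustration. The standard `WebAssembly` JavaScript API is assumed.

```typescript
// Hand-assembled plugin, equivalent to the text format:
//   (module
//     (import "host" "log" (func $log (param i32)))
//     (func (export "run") i32.const 42  call $log))
const pluginBytes = new Uint8Array([
  0x00, 0x61, 0x73, 0x6d, 0x01, 0x00, 0x00, 0x00, // magic number and version
  0x01, 0x08, 0x02, 0x60, 0x01, 0x7f, 0x00, 0x60, 0x00, 0x00, // types: (i32) -> (), () -> ()
  0x02, 0x0c, 0x01, // import section, one entry:
  0x04, 0x68, 0x6f, 0x73, 0x74, 0x03, 0x6c, 0x6f, 0x67, 0x00, 0x00, //   "host"."log", function of type 0
  0x03, 0x02, 0x01, 0x01, // function section: one function using type 1
  0x07, 0x07, 0x01, 0x03, 0x72, 0x75, 0x6e, 0x00, 0x01, // export section: "run" = function 1
  0x0a, 0x08, 0x01, 0x06, 0x00, 0x41, 0x2a, 0x10, 0x00, 0x0b, // code: i32.const 42, call 0
]);

const pluginModule = new WebAssembly.Module(pluginBytes);

// The host can inspect what a plugin asks for before running any of its code.
const requestedImports = WebAssembly.Module.imports(pluginModule).map(
  (entry) => `${entry.module}.${entry.name}`,
);

// Grant exactly one capability. The plugin has no other route to the host.
const loggedValues: number[] = [];
const hostImports = {
  host: {
    log: (value: number): void => {
      loggedValues.push(value);
    },
  },
};
const pluginInstance = new WebAssembly.Instance(pluginModule, hostImports);
(pluginInstance.exports.run as unknown as () => void)();

// Withholding the capability means the plugin cannot be instantiated at all.
let refusedWithoutImports = false;
try {
  new WebAssembly.Instance(pluginModule, {});
} catch {
  refusedWithoutImports = true;
}

console.log(requestedImports, loggedValues, refusedWithoutImports);
```

A real plugin host would typically also bound execution time and memory growth, which this sketch does not attempt.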

Cross-Language Code Sharing

One of the least discussed but highly practical benefits of WASM is the ability to share core logic written in one language across platforms written in different languages. A validation library, a pricing calculation engine, or a data transformation pipeline written in Rust can be compiled to WASM and consumed by a JavaScript web frontend, a Go backend service, and a Swift iOS application using the same binary, without maintaining separate implementations in each language.

This eliminates the class of bugs that arise from divergent implementations of the same logic across platforms, and it reduces the maintenance burden when the logic changes.

Server-Side and Edge Execution

The WASI standard extends WebAssembly beyond the browser by defining a portable interface to operating system resources. A WASM module compiled with WASI support can read files, make network connections, and access environment variables through a capability-based permission system, similar to how containers work but with a smaller attack surface and faster startup time.

WASM startup time is typically measured in microseconds rather than the milliseconds or more of container cold starts. For edge computing scenarios where requests need to be processed at geographically distributed nodes with minimal latency, this startup performance advantage is significant. Teams deploying on Cloudflare Workers or Fastly, or running Wasmtime in a Kubernetes sidecar, are already benefiting from this in production.

| Use Case | WASM Advantage |
|---|---|
| Browser compute (images, PDF, crypto) | No server round trip, predictable latency |
| AI inference in browser | Privacy-preserving, offline capable |
| Plugin sandboxing | Isolated execution, no host access |
| Cross-language logic sharing | One binary, multiple platform consumers |
| Edge functions | Microsecond cold start, portable runtime |

When WebAssembly Is Not the Right Choice

Adopting WebAssembly adds real complexity. The toolchain, the debugging story, the interoperability layer between WASM and JavaScript or your host language, and the operational knowledge required to maintain WASM modules in production all represent overhead that needs to be justified by a genuine performance or capability requirement.

DOM-Heavy or Event-Driven UI Code

WebAssembly cannot access the DOM directly. Every DOM interaction requires a JavaScript bridge call, which introduces overhead that quickly negates the performance benefit of running logic in WASM. If your bottleneck is rendering, layout, or event handling rather than computation, WASM will not help and may slow things down due to the crossing cost between the WASM module and the JavaScript environment.

React, Vue, and similar frameworks are optimized for this layer of work. Leave them in JavaScript.

Simple Business Logic

If your application is a standard CRUD product where the most expensive operation is a database query or an API call, there is no compute bottleneck that WASM addresses. Introducing it for standard request-response business logic adds build toolchain complexity, increases the cognitive load on your team, and provides no measurable benefit.

Teams Without Systems Programming Experience

The source languages that benefit most from compiling to WASM, namely Rust, C, and C++, require a level of systems programming knowledge that most web-focused teams do not have. AssemblyScript provides a TypeScript-like syntax that compiles to WASM and reduces this barrier, but it still requires a different mental model than JavaScript development. If your team does not have this expertise and the use case does not clearly justify developing it, WASM is the wrong tool.

The Toolchain in Practice

Rust to WASM

Rust has the most mature WASM compilation story in 2026. The wasm-pack tool handles compilation, optimization, and JavaScript binding generation. wasm-bindgen generates the glue code that allows Rust functions to accept and return JavaScript values cleanly. For teams already using Rust, the path from a Rust library to a consumable npm package backed by WASM is well documented and straightforward.

C and C++ to WASM

Emscripten is the standard toolchain for compiling C and C++ to WASM. It handles the translation of system calls to browser-compatible equivalents and supports porting large codebases. This is the path used by projects like SQLite compiled to WASM, which is now deployed in several production web applications as a fully client-side relational database.

AssemblyScript

AssemblyScript uses a TypeScript-like syntax with stricter static typing and compiles directly to WASM. It is the lowest-friction entry point for JavaScript developers who want to experiment with WASM without learning a new language. The performance ceiling is lower than Rust or C, but for many use cases it is sufficient, and the tooling feels far more familiar to a JavaScript team.

| Source Language | Toolchain | Best Suited For |
|---|---|---|
| Rust | wasm-pack, wasm-bindgen | Performance-critical new modules |
| C / C++ | Emscripten | Porting existing native codebases |
| AssemblyScript | asc compiler | JS developers, moderate compute needs |
| Go | TinyGo | Smaller binaries, simpler use cases |

Debugging and Observability

Debugging WASM in production is harder than debugging JavaScript. Source maps exist and modern browser DevTools support stepping through WASM with source-level debugging when the source map is available, but the experience is less polished than JavaScript debugging.

For server-side WASM, the Wasmtime and WasmEdge runtimes both provide logging and tracing hooks. Integration with OpenTelemetry for distributed tracing is available but requires explicit instrumentation in the WASM module itself, since the runtime cannot automatically inject trace context the way a framework middleware can.

Treat WASM modules as you would a native library dependency: write thorough unit tests at the WASM layer, test the JavaScript-to-WASM interface boundary explicitly, and instrument key execution paths before deploying. Post-deployment debugging in WASM is substantially more expensive than in interpreted languages.
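
As one illustration of testing the JavaScript-to-WASM boundary explicitly, the sketch below pins down how JavaScript numbers are converted when they cross into a function that takes and returns `i32`. The module is a hand-assembled `add` function used purely as a stand-in for a real export, and the `check` helper is a placeholder for whatever test framework a team already uses.

```typescript
// Hand-assembled stand-in for a real export, equivalent to:
//   (module (func (export "add") (param i32 i32) (result i32)
//     local.get 0  local.get 1  i32.add))
const boundaryAddBytes = new Uint8Array([
  0x00, 0x61, 0x73, 0x6d, 0x01, 0x00, 0x00, 0x00, // magic number and version
  0x01, 0x07, 0x01, 0x60, 0x02, 0x7f, 0x7f, 0x01, 0x7f, // type section: (i32, i32) -> i32
  0x03, 0x02, 0x01, 0x00, // function section
  0x07, 0x07, 0x01, 0x03, 0x61, 0x64, 0x64, 0x00, 0x00, // export section: "add"
  0x0a, 0x09, 0x01, 0x07, 0x00, 0x20, 0x00, 0x20, 0x01, 0x6a, 0x0b, // code section
]);

const boundaryInstance = new WebAssembly.Instance(new WebAssembly.Module(boundaryAddBytes), {});
const boundaryAdd = boundaryInstance.exports.add as unknown as (a: number, b: number) => number;

// Minimal assertion helper (hypothetical; swap in your test runner's equivalent).
function check(label: string, actual: number, expected: number): void {
  if (actual !== expected) {
    throw new Error(`${label}: expected ${expected}, got ${actual}`);
  }
}

// In-range integers behave as expected.
check("in range", boundaryAdd(2, 3), 5);

// i32 arithmetic wraps, and results come back as signed 32-bit values.
check("overflow wraps", boundaryAdd(2147483647, 1), -2147483648);

// Arguments are converted to 32-bit integers on the way in:
// fractions are truncated and NaN becomes 0, silently.
check("fraction truncated", boundaryAdd(1.9, 2), 3);
check("NaN becomes zero", boundaryAdd(NaN, 7), 7);

console.log("boundary checks passed");
```

None of these cases raises an error on its own, which is why they are worth encoding as tests: a caller passing a float or an out-of-range value gets a plausible-looking wrong answer rather than an exception.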

For teams building observable systems, the approach to distributed tracing discussed in our article on building observable systems with metrics, logs, and traces applies equally to WASM-backed services, with the additional consideration that trace propagation must be explicitly wired through the WASM module boundary.

The WASM Component Model and the Road Ahead

The WebAssembly Component Model is the most significant architectural development in the WASM ecosystem in 2026. It standardises how WASM modules expose and consume interfaces, enabling language-agnostic composition where a Rust module, a Go module, and a JavaScript module can call each other through a common interface definition language called WIT (Wasm Interface Type).

This addresses one of the main friction points in adopting WASM: the challenge of integrating modules written in different languages without hand-writing binding code for each combination. As the Component Model stabilises and toolchain support matures, it will make WASM-based plugin architectures and cross-language sharing significantly more practical to implement and maintain.

The Component Model specification is developed in the open under the WebAssembly Community Group, and much of its tooling is maintained by the Bytecode Alliance. Teams evaluating WASM for plugin systems or multi-language architectures should follow the progress at bytecodealliance.org, which publishes the current state of the Component Model specification and the toolchain roadmap.

Making the Decision

WebAssembly earns its place in your architecture when you have a specific, measurable performance problem in a compute-heavy workload, when you need sandboxed execution of untrusted code, when you need to share logic written in a systems language across multiple platform targets, or when you are building for edge environments where cold start time is critical.

It does not earn its place when your application’s bottlenecks are I/O, database, or network rather than computation. It does not earn its place when your team lacks the systems programming knowledge to maintain the source language effectively. And it does not earn its place when the complexity of the JavaScript-to-WASM interoperability layer outweighs the performance gain being sought.

The teams getting the most out of WebAssembly in 2026 are the ones who have identified a specific constraint, validated that WASM addresses it, and invested in learning the toolchain properly rather than treating it as a general-purpose performance upgrade for their entire stack.

Exploring WebAssembly for Your Product?

Most popular pages

From Prototype to Production: The Engineering Checklist That Actually Matters

Prototypes lie. They perform well in demos because they are not doing any of the work that production systems actually do. There is no...

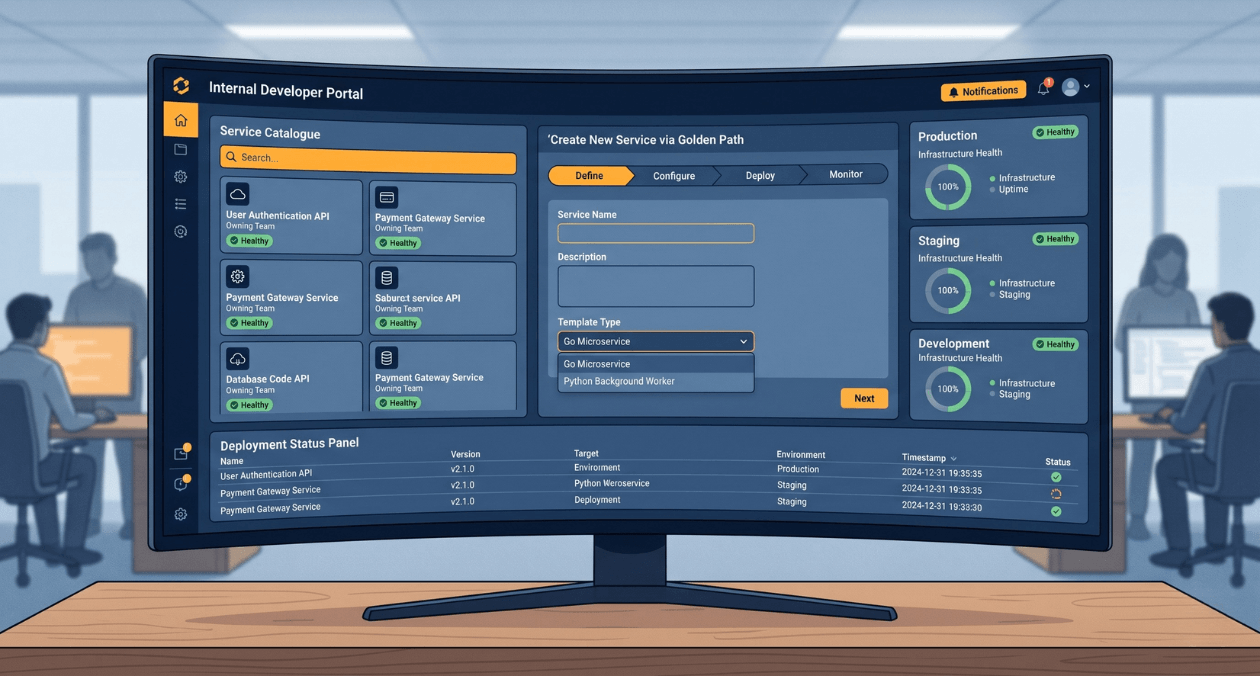

Building a Developer Experience (DX) Platform: From Golden Paths to Self-Service Infrastructure

There is a measurement problem at the heart of platform engineering. The people who benefit most from a well-built internal developer platform are often...

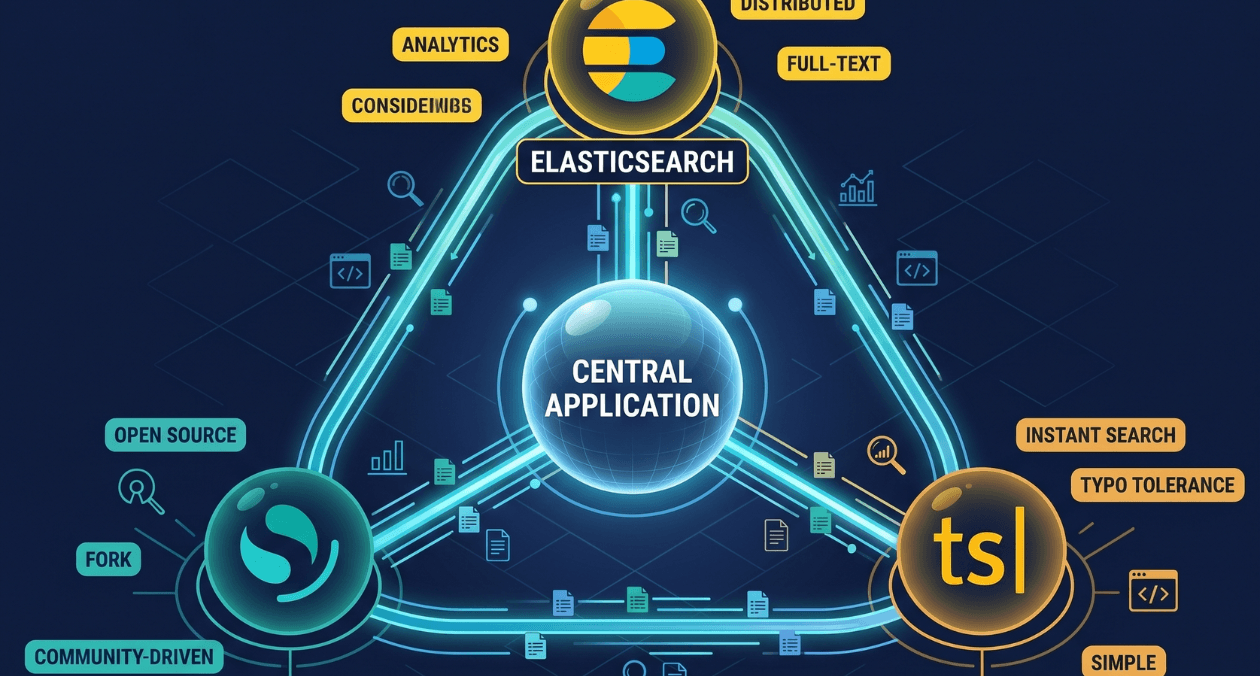

Search Infrastructure for Applications: Elasticsearch vs OpenSearch vs Typesense

Search is one of those features that seems straightforward until you try to build it properly. A basic LIKE query handles small datasets. The...